I am still recovering from the fairly challenging logistical project of saving a Lucent 5ESS. This is a whale of a project and I am still in a state of disbelief that I have gotten to this point. Thanks to my wife, brother, and a few friends for their help, and to the University of Arizona, which has a very dedicated and professional Information Technology Services staff.

At peak, the switch served over 20,000 lines. The University has done its own writeup, The End of An Era in Telecommunications, that is worth a read. In particular, the machine had an uptime of approximately 35 years, including two significant retrofits to newer technology, culminating in the current Lucent-dressed 7 R/E configuration that includes an optical packet-switched core called the Communications Module 3 (CM3) or Global Messaging Server 3 (GMS3).

Moving 40 frames of equipment required a ton of planning and muscle. The whole package filled two 26' straight trucks, a combined 52' of cargo length, which is just 1' short of a standard 53' US semi-trailer.

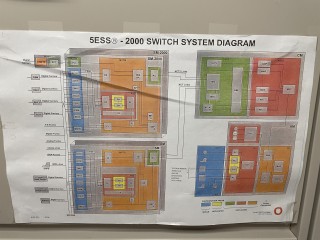

Coming from the computing and data networking world, I found the construction of the switch quite bewildering at first. It is physically made up of standard frames which are interconnected into rows, not unlike datacenter equipment, but the frames are integrated into an overhead system for cable management. Most of the wiring stays within a row, with quite a few cables routed horizontally between adjacent frames, but some connections have to transit up and over to other rows.

Line Trunk Peripherals hook up to a Switching Module Controller (SMC) either directly or through an Optical Cross Connect Unit (OXU), which hooks up to an SMC and reduces the amount of copper cabling going between rows. Alarm cables run directly to an Office Alarm Unit (OAU) or form rings between rows that eventually end at the OAU. Optical connections go from OXUs to SMCs and then to the CM, while copper test circuits home run to a Metallic Test Service Unit shelf. Communications cables come out the top and route toward the wire frame, usually in large 128-wire cables but occasionally in smaller quantities for direct or cross connect of special services. A pair of Power Distribution Frames distributes -48V throughout the entire footprint, taking into account redundancy at every level.
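To keep the signal paths straight in my own head, it helps to write them down as a tiny graph. What follows is only a sketch of the connections described above, not anything taken from Lucent documentation: the node names `LTP`, `OXU`, `SMC`, and `CM` are shorthand for this example, and the `paths` helper is hypothetical. Alarm, test, and power wiring are left out.

```python
# Signal-path adjacency as described in the paragraph above.
# Node names are shorthand chosen for this sketch, not Lucent identifiers.
LINKS = {
    "LTP": ["SMC", "OXU"],  # line/trunk peripheral: direct to an SMC, or via an OXU
    "OXU": ["SMC"],         # optical cross connect unit feeds an SMC
    "SMC": ["CM"],          # switching module controller feeds the communications module
    "CM": [],               # the core; nothing further downstream in this sketch
}


def paths(src, dst, links=LINKS):
    """Return every path from src to dst, depth-first in the listed order.

    Assumes the graph has no cycles, which holds for LINKS above.
    """
    if src == dst:
        return [[src]]
    found = []
    for nxt in links.get(src, []):
        for tail in paths(nxt, dst, links):
            found.append([src] + tail)
    return found


for p in paths("LTP", "CM"):
    print(" -> ".join(p))
```

Run as written, this prints the two routes a peripheral can take to reach the CM: one directly through an SMC, and one through an OXU first.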

All of this was neatly cable laced with wax string. Moving a single frame required hundreds of distinct actions, ranging from quick ones like cutting cable lace to the time-consuming removal of copper connections and bolts in all directions.

We were able to complete the removal in a single five-day workweek, and I was able to unload it over the two days of the weekend into my receiving area, where it now safely resides.

The next step will be to acquire some AC and DC power distribution equipment, which will have to wait for my funds to recover.

I should be able to boot the Administrative Module (AM), a 3B21D computer, relatively soon by acquiring a smaller DC rectifier. That alone will be very interesting, as it is the only use I know of for the DMERT or UNIX-RTR operating system, a fault-tolerant microkernel real-time UNIX from Bell Labs.

The system came with a full set of manuals and schematics, which will help greatly in rewiring and reconfiguring the machine. After the AM is up, I need to "de-grow" the disconnected equipment, and I will eventually add back in an assortment of line, packet, and service units so that I can demonstrate POTS as well as ISDN voice and data. In particular, I am looking forward to interoperating with other communication and computing equipment I have.

I will have to reduce the size of the system quite a bit for power and space reasons, so I will have spare parts to sell or trade.

Additional pictures are available here until I have a longer-term project page established.

This is too much machine for one man, and it is part of a broader project I am working on to build a computing and telecommunications museum. If you are interested in working on the system with me, please feel free to reach out.