A few months after launching Qwen3-VL, Alibaba has released a detailed technical report on the open multimodal model. The data shows the system excels at image-based math tasks and can analyze hours of video footage.

The system handles massive data loads, processing two-hour videos or hundreds of document pages within a 256,000-token context window.

In "needle-in-a-haystack" tests, the flagship 235-billion-parameter model located individual frames in 30-minute videos with 100 percent accuracy. Even in two-hour videos containing roughly one million tokens, accuracy held at 99.5 percent. The test works by inserting a semantically important "needle" frame at random positions in long videos, which the system must then find and analyze.

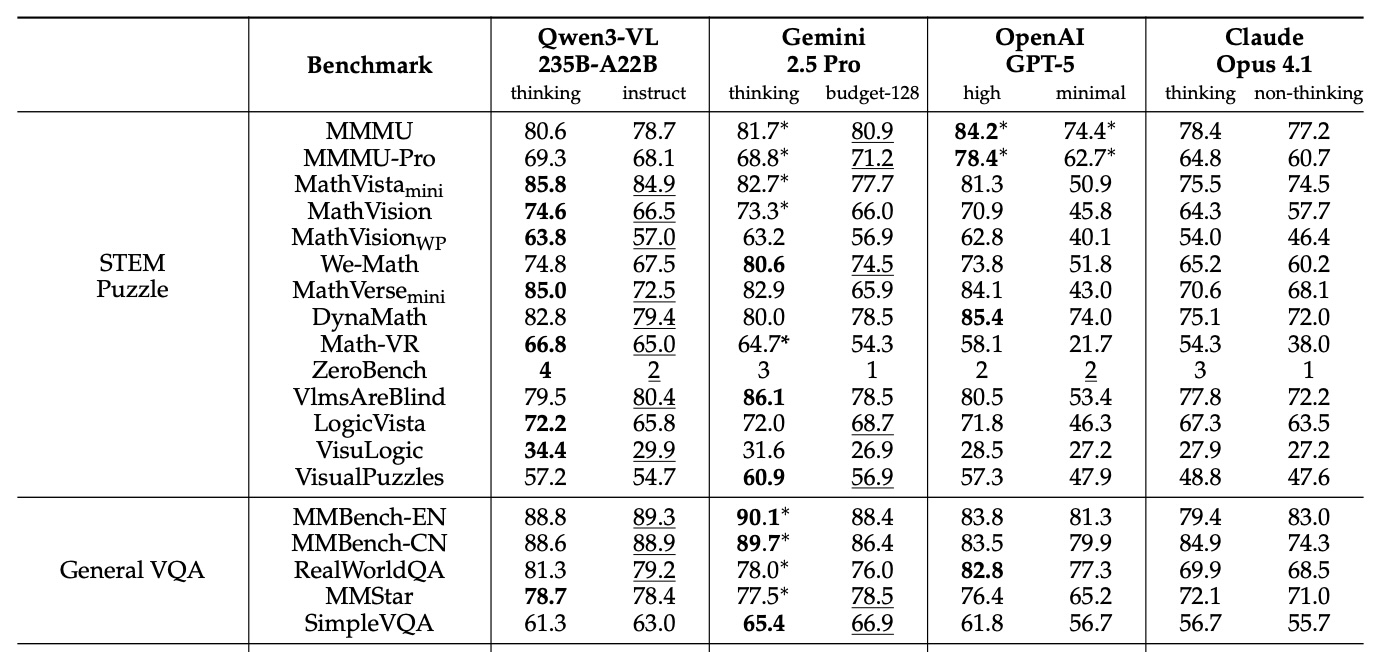

In published benchmarks, the Qwen3-VL-235B-A22B model often beats Gemini 2.5 Pro, OpenAI GPT-5, and Claude Opus 4.1 - even when competitors use reasoning features or high thinking budgets. The model dominates visual math tasks, scoring 85.8 percent on MathVista compared to GPT-5's 81.3 percent. On MathVision, it leads with 74.6 percent, ahead of Gemini 2.5 Pro (73.3 percent) and GPT-5 (65.8 percent).

Ad

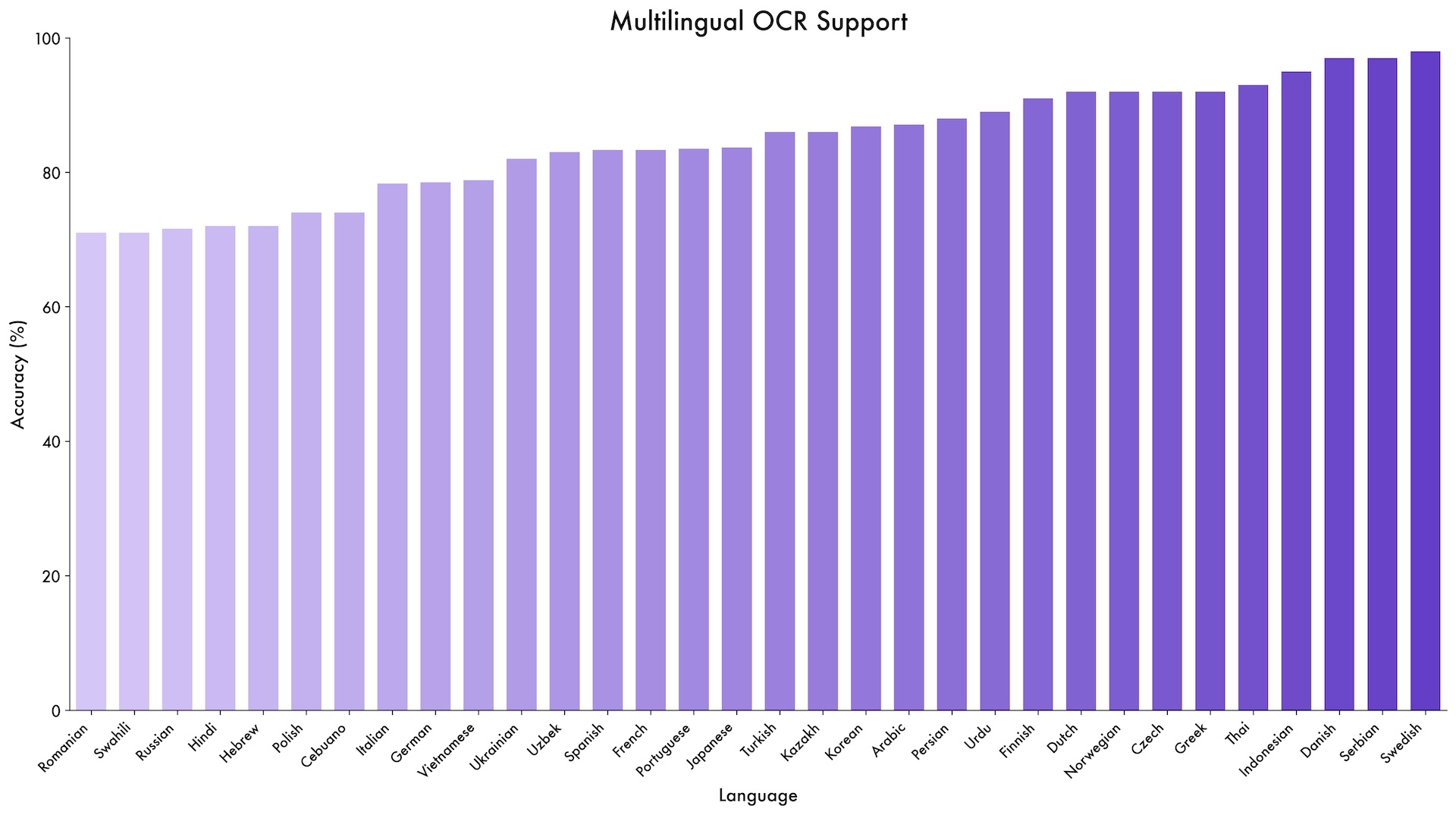

The model also shows range in specialized benchmarks. It scored 96.5 percent on the DocVQA document comprehension test and 875 points on OCRBench, supporting 39 languages - nearly four times as many as its predecessor.

Alibaba claims the system demonstrates new capabilities in GUI agent tasks. It achieved 61.8 percent accuracy on ScreenSpot Pro, which tests navigation in graphical user interfaces. On AndroidWorld, where the system must independently operate Android apps, Qwen3-VL-32B hit 63.7 percent.

The model handles complex, multi-page PDF documents as well. It scored 56.2 percent on MMLongBench-Doc for long document analysis. On the CharXiv benchmark for scientific charts, it reached 90.5 percent on description tasks and 66.2 percent on complex reasoning questions.

It is not a clean sweep, however. In the complex MMMU-Pro test, Qwen3-VL scored 69.3 percent, trailing GPT-5's 78.4 percent. Commercial competitors also generally lead in video QA benchmarks. The data suggests Qwen3-VL is a specialist in visual math and documents, but still lags in general reasoning.

Key technical advances for multimodal AI

The technical report outlines three main architectural upgrades. First, "interleaved MRoPE" replaces the previous position embedding method. Instead of grouping mathematical representations by dimension (time, horizontal, vertical), the new approach distributes them evenly across all available mathematical areas. This change aims to boost performance on long videos.

Recommendation

Second, DeepStack technology allows the model to access intermediate results from the vision encoder, not just the final output. This gives the system access to visual information at different levels of detail.

Third, a text-based timestamp system replaces the complex T-RoPE method found in Qwen2.5-VL. Instead of assigning a mathematical time position to every video frame, the system now inserts simple text markers like "<3.8 seconds>" directly into the input. This simplifies the process and improves the model's grasp of time-based video tasks.

Training at scale with one trillion tokens

Alibaba trained the model in four phases on up to 10,000 GPUs. After learning to link images and text, the system underwent full multimodal training on about one trillion tokens. Data sources included web scrapes, 3 million PDFs from Common Crawl, and over 60 million STEM tasks.

In later phases, the team gradually expanded the context window from 8,000 to 32,000 and finally to 262,000 tokens. The "Thinking" variants received specific chain-of-thought training, allowing them to explicitly map out reasoning steps for better results on complex problems.

Ad

Open weights under Apache 2.0

All Qwen3-VL models released since September are available under the Apache 2.0 license with open weights on Hugging Face. The lineup includes dense variants ranging from 2B to 32B parameters, as well as mixture-of-experts models: the 30B-A3B and the massive 235B-A22B.

While features like extracting frames from long videos aren't new - Google's Gemini 1.5 Pro handled this in early 2024 - Qwen3-VL offers competitive performance in an open package. With the previous Qwen2.5-VL already common in research, the new model is likely to drive further open-source development.