Notes from the End of the Programming Era

The simulacrum is never that which conceals the truth—it is the truth which conceals that there is none. The simulacrum is true.

— Jean Baudrillard

I've been coding since I was 6. I'm 47 now. That's practically my whole life.

For months I had a Commodore 64 that I used to play games. Then one day, my father brought me a computer magazine, K64. It looked boring at first, the kind of thing you'd flip past looking for game screenshots. Until one afternoon, I decided to try that thing called “programming”. This was my first line of code:

POKE 53281, 1

Then the screen background became white.

(Well, actually my first line of code was most likely PRINT "HOLA MUNDO", so the one above might have been my second. Also, if you typed 15 instead of 1, the background became light grey, which is like white, except it has seen some things.)

Now, there is a particular feeling that humans get when they discover they can alter the fabric of their reality, even if that reality is a C64 connected to a television set. It is the same feeling, I suspect, that the first person to discover fire had, except they probably didn't immediately try to change the fire's color. It was mind blowing.

And, what was this sorcery?

IF I > 10 THEN POKE 646, 2

Are you saying I could define my own rules? I decide what happens when?

I felt like a god on steroids. Which, to be fair, is a redundant thing to say. Gods presumably don't need steroids. But I was six, and the background was white because I told it to be white, and the precise theological implications of the moment were not my primary concern.

Since then, although far too young to understand the scale of my own question, I wondered: how far could this go?

Could one possibly define all the rules of the world in a program? Not just the wall collisions of Pac-Man, or the centripetal force of Pole Position. I meant all of it. The whole thing. Every blade of grass bending under wind. Every ray of light refracting through a glass of water. The table holding the glass being affected by gravity. The gravity being a consequence of mass curving spacetime, which... ok, you get the idea.

So, what would it take?

As programming became an almost full-time hobby in the years that followed, I realised the answer was: a lot of code. An almost inconceivable amount.

But the important thing, the thing that would keep me up some nights, was that it was theoretically possible. It would need a few geniuses with enough time and patience to write every rule, every physical law, and then a computer powerful enough to run all of them simultaneously, in real-time.

Then, a decade and a half later, The Matrix screened in theaters. It hit me. Of course, how had I not thought of it? Build the machines, and they become the geniuses. We just need to write the code for a machine that is capable of writing the world for us (and that does not enslave us in the process…).

So when, another couple of decades later, we saw the rise of LLMs able to write complex algorithms instantly, I thought: alright, we are getting close. That 'inconceivable amount of code' isn't a barrier anymore; it's a commodity. Pausing to think through implementation details has become, for many of us, the kind of meticulous craft that people speak of in the same tone they use for calligraphy or blacksmithing: with great respect and absolutely no intention of doing it themselves.

So this is it, I guessed. Now it is just a matter of memory and computing. Then we’ll have machines writing the infinite codebase. Right?

But I was wrong again. Not about the destination, but about the road.

Because the road is starting to look like it doesn’t involve writing code at all.

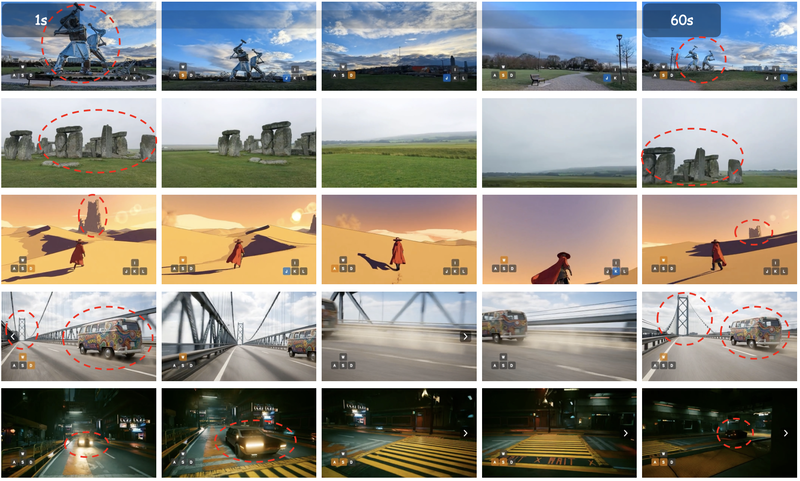

Within the past 6 months, DeepMind announced Genie 3, and Robbyant open-sourced LingBot-World. These are “world models” that generate interactive environments you can navigate in real time, and that stay consistent for several minutes. No 3D models involved. No game engine. No code. A world, rendered frame by frame, that you can walk through using keyboard controls.

When you press W to walk forward, the trees get closer and the parallax between foreground and background shifts correctly, without a program calling a hand‑written “move camera forward” routine. Just an action‑conditioned model mapping (history + input) → next frame. The model just knows, from having consumed enough video of people moving through spaces, what should happen.

When you turn left, the world rotates around you in a way that is geometrically plausible, not because anyone computed the geometry, but because the model has seen enough turns to know what turning looks like.

(Somewhere, John Carmack is either furious or fascinated. Possibly both. The man spent 1993 inventing an entirely new way to render 3D spaces so that we could shoot demons in a corridor, and thirty years later a neural network achieves something similar by watching enough YouTube.)

In these models, there is no scene graph with named objects and their positions, and there is no code managing the state of the world. Yet you can walk forward in a generated world, look at Stonehenge, turn around, walk away for sixty seconds, turn back, and Stonehenge is still there. Structurally intact. In the correct position. The model maintains continuity by conditioning each new frame on the recent stream of frames and actions: memory as behavior, not a hand-written world-state database. Think of it as something like an LLM context window: a rolling history the model attends to while generating the next frame.
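The shape of that loop can be sketched in a few lines of Python. This is a toy under loud assumptions: `toy_next_frame` stands in for a trained network, a "frame" is just an integer camera position rather than pixels, and `CONTEXT_LEN` is an arbitrary window size. It shows only the control flow: keep a rolling window of (frame, action) pairs and ask the model for the next frame.

```python
from collections import deque

CONTEXT_LEN = 4  # hypothetical window size; real models attend over far more


def toy_next_frame(history, action):
    """Stand-in for a learned model: (history + action) -> next frame.

    A frame here is just an integer camera position, so the "prediction"
    is trivial. A real world model would output pixels.
    """
    last_frame = history[-1][0] if history else 0
    step = {"W": 1, "S": -1}.get(action, 0)  # unknown keys leave the view unchanged
    return last_frame + step


def rollout(actions, context_len=CONTEXT_LEN):
    """Generate frames one at a time from a rolling history of (frame, action)."""
    history = deque(maxlen=context_len)  # oldest entries fall off automatically
    frames = []
    for action in actions:
        frame = toy_next_frame(history, action)
        history.append((frame, action))
        frames.append(frame)
    return frames


frames = rollout(["W", "W", "S", "W", "W", "W"])
print(frames)
```

Note that nothing outside `history` records where the camera is. Whatever continuity exists lives in the window the model is conditioned on.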

So, I want to be very clear about what is happening here. The worlds you’re walking through in Genie or LingBot are not running on bespoke, hand-written code for that world instance. It’s not a traditional Unreal-style project where a scene graph and a game loop drive everything through explicit engine logic. What you’re experiencing is a model generating the next state, frame by frame, conditioned on your actions and recent history.

And this is interesting, because in the “AI-written code” age you’d expect Cursor or Claude Code to matter. Here they don’t, because there’s no code to write.

Now, here is where I need you to take a deep breath, because I’m going to suggest something that sounds absurd.

Let's think about something less fascinating than fantastic worlds you can walk through like a videogame. Let's think about something nobody has ever called fascinating in the entire history of human civilisation.

Let's think about a spreadsheet.

You see a cell, A1, with the number 10 in it. In cell A2, you type =A1+1. You press Enter. You expect to see 11.

In a traditional spreadsheet, there is a formula parser, an evaluation engine, a dependency graph, all the usual machinery. It computes.
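As a point of reference, here is a deliberately tiny Python sketch of that machinery. It is nowhere near a real spreadsheet: the only formula it understands is a cell reference plus an integer, and the names `parse` and `evaluate` are invented for this illustration.

```python
import re

# Hypothetical mini-grammar: "=<CELL>+<INT>", e.g. "=A1+1". Nothing else is supported.
FORMULA = re.compile(r"^=([A-Z]+[0-9]+)\+([0-9]+)$")


def parse(raw):
    """Parser: turn raw cell text into ('num', value) or ('add', ref, value)."""
    match = FORMULA.match(raw)
    if match:
        return ("add", match.group(1), int(match.group(2)))
    return ("num", int(raw))


def evaluate(sheet, cell, visiting=None):
    """Evaluation engine: resolve a cell by resolving the cell it depends on first."""
    visiting = visiting or set()
    if cell in visiting:
        raise ValueError(f"circular reference at {cell}")
    node = parse(sheet[cell])
    if node[0] == "num":
        return node[1]
    _, ref, increment = node
    return evaluate(sheet, ref, visiting | {cell}) + increment


sheet = {"A1": "10", "A2": "=A1+1", "A3": "=A2+5"}
print(evaluate(sheet, "A2"))  # 11
```

Every step here is explicit: the text is parsed, the dependency is followed, and the addition is actually performed.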

But imagine a model that has seen enough “spreadsheet usage” to predict what happens next. At unthinkable scale. If all you ever do is normal human spreadsheet behavior, it will often look indistinguishable:

you type

=A1+1

and an

11

appears

Was the answer correct? Yes. Did it run a formula parser and an evaluation engine the way Excel does? No. Just a model producing the next state that looks exactly like arithmetic from the outside.

Once you see this, you cannot unsee it. Every system you interact with, whether it’s a visual desktop where dragging a file moves it to a folder, or an API server returning a data payload, is a sequence of states transitioning according to patterns. They are learnable behaviors. Which means they don’t need an explicit, hand-written implementation to look computed. They just need to be predicted well enough to satisfy the contract.

I've written before about how the interfaces between humans and software are collapsing, how APIs and UIs might converge into a single conversational layer. But what I'm describing here is something else entirely. That was about removing the front door. This is about discovering there could be no building behind it.

So, could such a model simulate a spreadsheet well enough to replace the actual Excel software? Or any software? Or, what is software at this point?

Think about how we actually verify software. When we write tests, we mostly don’t inspect the mechanism. We treat the system as a black box: feed it an input, assert an output, maybe assert a side effect. If the tests pass, we don’t prove the implementation is “right,” but we accept that the contract is being honored over the surface area we bothered to check. We even use property testing and mutation testing to shake the box, throwing randomized chaos at it, trying to find where the rules leak.

Now imagine two boxes. One is Excel: a parser, a calc engine, a dependency graph, all the classic machinery. The other is a model that has learned the same input → output → state transition patterns from examples. If both satisfy the same contract, same results, same invariants, same persistence, same edge cases, same performance within tolerances, what exactly is the meaningful difference?
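A toy version of that comparison in Python: two boxes checked against each other with randomized inputs, the way a black-box test would. The "learned" box is a plain dictionary of memorized examples, which is a crude stand-in for a model and not how a real one works. All names are invented for the sketch.

```python
import random


def computed_box(a1, formula):
    """Box one: actually parses '=A1+<n>' and does the arithmetic."""
    increment = int(formula.split("+", 1)[1])
    return a1 + increment


class LearnedBox:
    """Box two: no parser, no arithmetic. It only recalls (input -> output)
    pairs it was shown. A dictionary is a crude stand-in for a trained model."""

    def __init__(self, examples):
        self.memory = dict(examples)

    def __call__(self, a1, formula):
        return self.memory.get((a1, formula))  # None when it has never seen this


def contract_violations(box, reference, trials, rng):
    """Black-box check: feed random inputs, compare outputs, count mismatches."""
    failures = 0
    for _ in range(trials):
        a1 = rng.randint(0, 99)
        formula = f"=A1+{rng.randint(0, 9)}"
        if box(a1, formula) != reference(a1, formula):
            failures += 1
    return failures


# "Training data": every input in the range a normal user is assumed to touch.
examples = {(a1, f"=A1+{n}"): a1 + n for a1 in range(100) for n in range(10)}
learned_box = LearnedBox(examples)

rng = random.Random(0)
in_range_failures = contract_violations(learned_box, computed_box, 1000, rng)
out_of_range_ok = learned_box(12345, "=A1+1") == computed_box(12345, "=A1+1")
print(in_range_failures, out_of_range_ok)
```

Inside the range the check covers, the two boxes are indistinguishable. Outside it, the memorizing box falls over. A real model would presumably generalize better than a dictionary, but the harness can only vouch for the surface area it actually exercises.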

Which means, in principle, that software itself is simulatable. A learned model that maps (history + action) → next observation can imitate any piece of software, as long as the behavior is learnable and the memory is sufficient.

Which brings me to the funniest proof of concept I’ve ever seen. Remember Jonas Degrave? December 2022, right after ChatGPT went public?

I want you to act as a Linux terminal. I will type commands and you will reply with what the terminal should show. I want you to only reply with the terminal output inside one unique code block, and nothing else. Do not write explanations.

And it complied. It printed a prompt. It answered pwd. It answered ls. It behaved like a terminal session because it had seen enough documentation and logs to know what terminal sessions look like.

There was no Linux kernel running. No real filesystem. It was a performance. And yes: it was brittle. It contradicted itself. It forgot things. It was a dream that occasionally woke up and panicked.

But now combine that idea with what world models are pushing toward: longer horizons, better consistency, explicit action-conditioning, and in some cases external scaffolding that keeps the state coherent.

Then, extend the thought:

A simulated terminal that remembers everything you did, not because it’s literally storing inodes somewhere, but because it has learned that persistent systems are supposed to be persistent, and it keeps itself honest enough that the illusion holds.

(Yes, in practice you’d probably add a thin layer of external memory to keep it stable, but the point remains: you’re not implementing a filesystem so much as enforcing the contract of one.)
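One way such a thin layer might look, sketched in Python with every name hypothetical: `fake_model` stands in for an LLM call that renders the terminal output, while a small set outside the model remembers which files exist, so that `ls` after `touch` stays consistent.

```python
class ScaffoldedTerminal:
    """Toy terminal: a stand-in 'model' renders output, external memory keeps state."""

    def __init__(self, model):
        self.model = model
        self.files = set()        # the thin external memory: just file names
        self.cwd = "/home/user"   # assumed working directory for the illusion

    def run(self, command):
        parts = command.split()
        if not parts:
            return ""
        # Commands that change state update the external memory, not the model.
        if parts[0] == "touch" and len(parts) == 2:
            self.files.add(parts[1])
        elif parts[0] == "rm" and len(parts) == 2:
            self.files.discard(parts[1])
        # The model sees the command plus the remembered state and renders a reply.
        return self.model(command, sorted(self.files), self.cwd)


def fake_model(command, files, cwd):
    """Stand-in for an LLM call; a real one would generate this text."""
    name = command.split()[0]
    if name == "ls":
        return "  ".join(files)
    if name == "pwd":
        return cwd
    return ""


terminal = ScaffoldedTerminal(fake_model)
terminal.run("touch notes.txt")
print(terminal.run("ls"))
```

There is no filesystem here, only a record of what the session has promised so far. The model's job is to keep sounding like a terminal, and the scaffold's job is to stop it from contradicting itself.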

Now, I’m aware this may be a trip to Narnia and back. But what keeps me up at night, in a way that rhymes with what kept me up at night in my youth, is that it is theoretically possible. I'm not sure it will happen the way I imagine it, but something that rhymes with it is approaching faster than we expect.

There is no code at the bottom. Perhaps there was never going to be code at the bottom. Perhaps the answer, it turns out, is that nobody writes the Matrix. You just show it enough of the real world, and it figures out the rest on its own.

These days I don't feel like a god anymore, to be honest. I don't even write code much anymore. AI does it for me. It used to be exciting, but now it’s weirdly normal.

Now, although far too old to understand the scale of my own question, I wonder: how far could this go?

Nobody wrote the Matrix.


Comments

By lubujackson 2026-03-03 4:11 Wow. This is the first take on AI that I find really shocking, and it is totally believable.

But it's worth noting that the difference between a system you interact with and a visualization of such a system, injected into your eyeball, is the lack of secondary effects (or first effects?). I suppose those can ALSO be faked and maintained, but at some point the illusion is more work than the system that would normally create it.

It seems likely that the fake system would be much more work than the code needed to generate it for most modern uses, but when we are spinning up bespoke code that will be run once, for a single user? The fake output might be more efficient.

I don't think this means this is the future. But it certainly means it could become a preferred approach for certain use cases, when there is no need for reuse.

I love finding ideas like this, which feel like true "AI-native" thinking.

By chairmansteve 2026-03-03 23:41 His spreadsheet example is very telling. The difference in energy requirements between an actual spreadsheet program and an AI spreadsheet simulator would be multiple orders of magnitude (at least 6, probably more). So the spreadsheet simulator makes no sense. I bet you have the same problem with a world simulator. The energy requirement is so huge you will have to just write a program. With the help of an LLM of course.

So I'm not buying his thesis.

By riffonio 2026-03-04 1:05 Agree if we look at the next few years. However, hardware is also making significant progress. Have you seen Taalas? https://taalas.com/products/

By nanobuilds 2026-03-03 2:12 This is interesting, and world models predicting the next frame is huge. I'm also wondering how this would be applicable to neurological processes, thoughts, etc.

If thoughts become quantifiable and measurable enough would similar models be able to predict the next one? Wondering what the impact of that would be.