A retrospective on Tess.Design, our attempt to make an ethical, artist-friendly AI marketplace. We launched Tess in May 2024 and shut it down in January 2026.

In 2024, we launched Tess.Design, a marketplace of fine-tuned AI image models where artists got paid a 50% royalty every time someone used their style. Less than two years later, we shut it down. This post is a candid account of what we built, what the data showed, and what any entrepreneur should know before trying to build an AI licensing business on top of creative talent.

The Problem We Were Solving

By 2023, AI image generation had become mainstream and deeply controversial. Image generation models like DALL-E and Midjourney were trained on billions of scraped images, often without artist consent or compensation. Surveys consistently showed that consumers believed artists deserved payment when AI generated content in their style. Yet no business model existed to make that happen.

Media companies were in a stalemate. Outlets like Rolling Stone and Fortune wanted AI-generated imagery for blogs, thumbnails, websites, and graphics. But their legal teams were nervous about copyright exposure, given the numerous ongoing lawsuits. So the publishers either banned AI tools entirely or turned a blind eye to their use, hoping for the best.

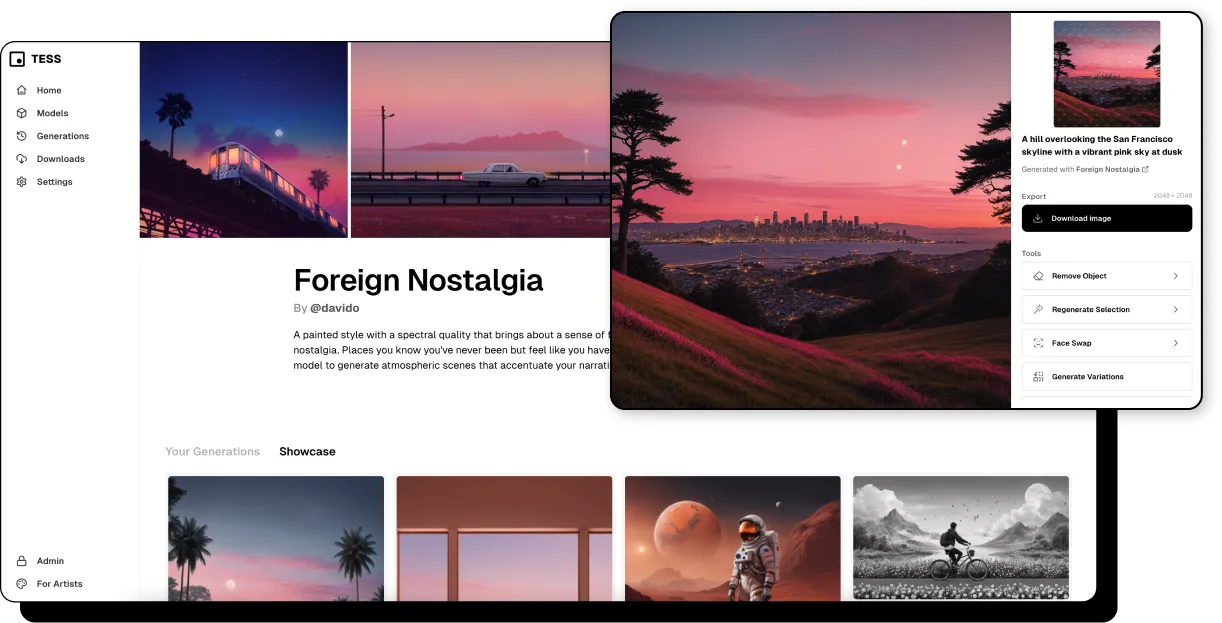

Tess was our answer to this problem: an AI image generation platform where every image was traceable to a single consenting artist, who earned a royalty on each image produced. On this basis, we argued that Tess was the first “properly licensed” image generator that produced the same quality images as Midjourney and other leading models.

How the Business Model Worked

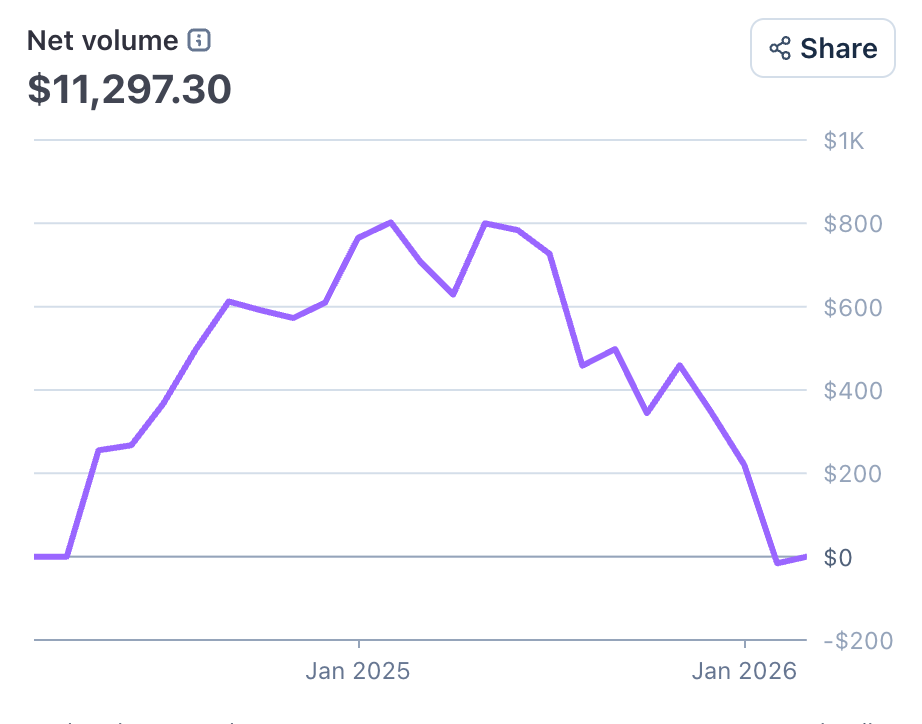

Tess artists submitted a set of their work to fine-tune a Stable Diffusion base model. The resulting model, linked to that artist's aesthetic, was listed on a public marketplace at tess.design/models. Subscribers paid for access; the artist earned 50% of the revenue when subscribers used their model.

The legal architecture mattered as much as the product. Working with Fenwick, we constructed a contributor agreement and an Enterprise Service Agreement built on a novel copyright argument. We posited that because all outputs were stylistically transformed into the contributing artist's aesthetic, the artist held copyright over the derivative works and could license them downstream to customers. This created a clean chain of copyright ownership, something no other AI image generator at the time offered.

To seed the marketplace, we offered 25 founding artists an advance royalty of $300–$4,000 based on their portfolio quality and audience reach. In addition to income, we pitched artists on these value propositions:

- Passive income from royalties on every generation

- A dashboard showing exactly how their model was being used

- A free Tess subscription to use their own model for brainstorming and scaling repetitive work (roughly 1 in 4 artists took advantage of this)

What 325 Cold Emails to Artists Taught Us

In building Tess, we learned a lot from the growth path of Spotify, which took two years to develop its partnerships with record labels. Unlike the music industry, however, illustration and digital art are fragmented. We reached out to 11 illustration agencies about getting their artists on Tess. None said yes. So we went artist by artist, sourcing contacts from Instagram, LinkedIn, agency websites, and the bylines of editorial publications we admired.

We reached out to 325 artists over about 6 weeks. The leads were high-end editorial artists who make illustrations for magazines like The New Yorker, take custom commissions, and maintain distinctive styles for studio work.

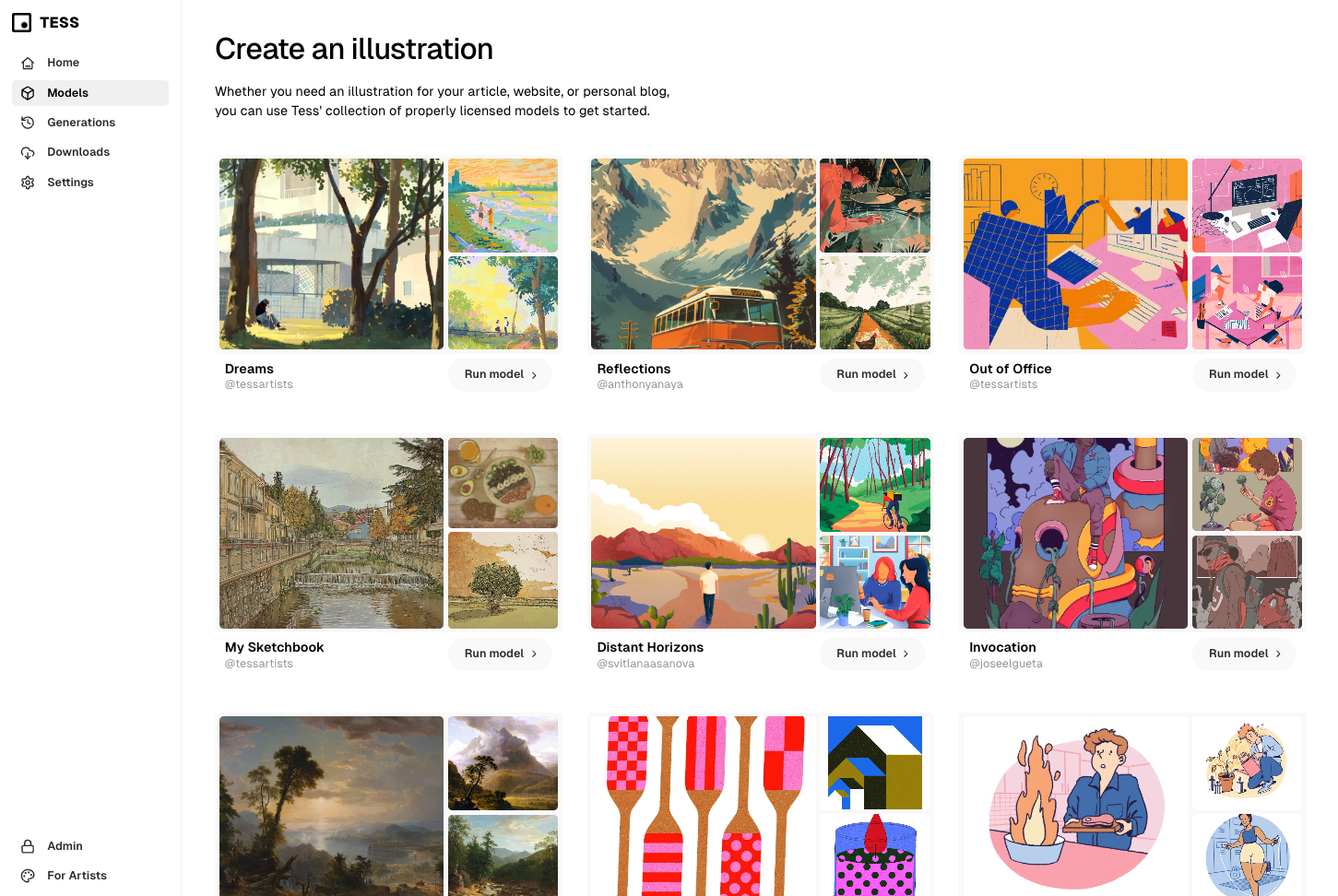

The response rate was striking:

- Almost 50% of the artists we reached out to responded to our cold emails.

- 6.5% of the artists we reached out to agreed to join Tess and had models trained on their work.

- 22.4% of artists were hard nos.

- 20.8% of the artists were maybes: they took calls and asked questions but never committed.

- 50.2% of artists did not respond to us
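One way to sanity-check the breakdown above is to convert the reported percentages back into approximate headcounts out of the 325 artists contacted. The minimal Python sketch below does this. The resulting counts are rounded estimates derived from the percentages, not figures reported in the post. The helper name `estimated_counts` is hypothetical.

```python
# Response breakdown for the 325-artist outreach, using the reported percentages.
# The counts produced here are rounded estimates, not figures stated in the post.
TOTAL_CONTACTED = 325

BREAKDOWN_PCT = {
    "joined": 6.5,
    "hard_no": 22.4,
    "maybe": 20.8,
    "no_response": 50.2,
}


def estimated_counts(total, pct_by_outcome):
    """Convert each outcome's percentage into an approximate (rounded) headcount."""
    return {outcome: round(total * pct / 100) for outcome, pct in pct_by_outcome.items()}


counts = estimated_counts(TOTAL_CONTACTED, BREAKDOWN_PCT)
# Anyone who joined, declined, or stayed a "maybe" replied in some form.
responded = counts["joined"] + counts["hard_no"] + counts["maybe"]

print(counts)
print(f"responded: {responded} ({responded / TOTAL_CONTACTED:.1%})")
```

With this rounding, roughly 21 artists joined, and about 162 of 325 (about 49.8%) replied in some form. That is consistent with the "almost 50%" response rate. The four reported percentages sum to 99.9%, so small rounding differences are expected.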

For artists who said no, their objections fell into four categories:

1) Ideological opposition to AI: Many artists saw AI as categorically exploitative, regardless of the revenue model.

“There is no such thing as ethical AI, full stop.”

2) Brand dilution: High-end artists worried that enabling anyone to generate in their style would cheapen it.

“I don’t want it used by a cigarette brand.”

3) On principle: Some believed art should be made by hand, regardless of the compensation structure.

“As an artist, I hold certain values regarding the creative process, and I personally feel that AI-generated art goes against those principles. While I acknowledge the technological advancements in this field, I prefer to maintain a hands-on approach to my work.”

4) Reputation risk: Many feared being associated with generative AI, which the industry generally saw as terrible. The artist community was hostile to AI in 2024, and joining Tess meant risking cancellation. One artist put it plainly: "I've seen how other artists get fried when they say they're interested in AI." Social punishment was a real deterrent.

The artists who said yes were motivated by passive income, curiosity about AI, and a pragmatic desire to offload repetitive commission work—wedding placards, gift tags, wine labels—so they could focus on more meaningful projects.

“I’ve always been passionate about staying at the forefront of technology and embracing the innovations it offers,” one participating artist wrote. “I see AI as increasingly essential in every aspect of our lives, and joining Tess seemed like the ideal way to integrate AI into my profession.”

The Numbers

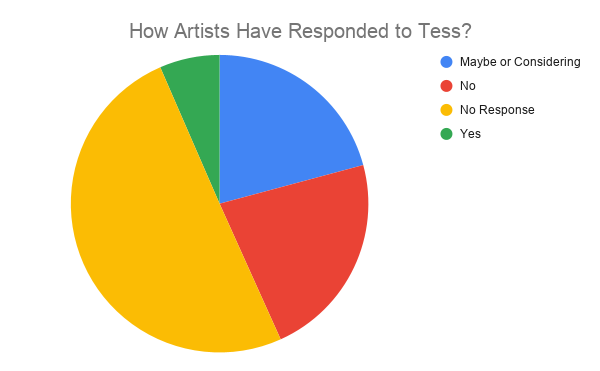

Tess ran for 20 months and generated $12,172.33 in gross revenue. We paid out $18,000 in advance royalties to artists and spent roughly $100/month on infrastructure (initially subsidized by Azure credits). Tess was therefore a net loss of approximately $7,000 in direct costs, not counting the engineering, design, marketing, and product time invested.
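The direct-cost figures above can be checked with a few lines of arithmetic. The minimal Python sketch below uses only numbers stated in the post and rests on two assumptions. First, infrastructure is treated as a flat $100 for all 20 months. That is an upper bound, since Azure credits covered part of it. Second, all gross revenue is treated as royalty-bearing usage, and royalties are tallied in aggregate rather than per artist.

```python
# Direct-cost arithmetic for Tess using figures stated in the post.
# Assumption: infrastructure cost a flat $100/month for all 20 months.
# The post says Azure credits subsidized it early on, so this is an upper bound.
GROSS_REVENUE = 12_172.33
ADVANCES_PAID = 18_000.00
MONTHS_RUN = 20
INFRA_PER_MONTH = 100.00
ARTIST_SHARE = 0.50  # 50% royalty on usage revenue

infra_upper_bound = MONTHS_RUN * INFRA_PER_MONTH
net_before_infra = GROSS_REVENUE - ADVANCES_PAID  # if credits covered all infrastructure
net_upper_bound_costs = net_before_infra - infra_upper_bound  # if credits covered none

# Assumption: all gross revenue is royalty-bearing usage, tallied in aggregate
# (not per artist). Advances are recouped before any additional payout.
royalties_earned = GROSS_REVENUE * ARTIST_SHARE
unrecouped_advances = ADVANCES_PAID - royalties_earned

print(f"net before infrastructure: {net_before_infra:,.2f}")
print(f"net with full infrastructure cost: {net_upper_bound_costs:,.2f}")
print(f"royalties earned vs advances: {royalties_earned:,.2f} of {ADVANCES_PAID:,.2f}")
print(f"unrecouped advances: {unrecouped_advances:,.2f}")
```

Under these assumptions, the loss falls between about $5,800 (infrastructure fully covered by credits) and about $7,800 (no credits at all), bracketing the post's "approximately $7,000". Aggregate royalties of roughly $6,100 at the 50% rate also fall well short of the $18,000 in advances. That is consistent with no artist earning beyond their advance.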

No artists earned enough from usage to receive additional royalties beyond their advance. The per-generation economics never reached a meaningful scale.

We came close to an Enterprise contract. A major US media outlet evaluated Tess as a compliant alternative to banning AI generation outright. Ultimately, however, their legal team blocked the CTO from moving forward with Tess, because the unresolved court cases around AI copyright made any AI licensing product too risky to touch.

Finally, we lost some of our team's trust as well, which always happens when you invest in a product that doesn't work out. One engineer who left Kapwing in the fall of 2025 said that the short-lived Tess investment contributed to burnout.

Why We Shut It Down

Three factors converged, leading us to shut down Tess:

- The legal argument was not proven out. Despite our legal argument about copyright protection, the ongoing litigation kept the question open. Organizations like Forbes and the SF Standard that had shown interest in our model couldn’t afford to bet on an unresolved legal argument. Without regulatory clarity, Tess couldn’t compete with models like NanoBanana, DALL-E, and Midjourney.

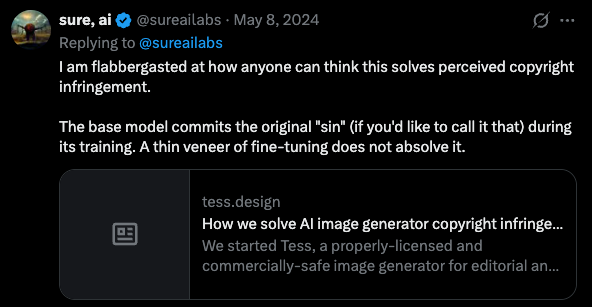

- The timing wasn’t right. We depended on artists helping us to promote the platform, and they didn’t. When we launched, Creative Bloq covered the story, but the Twitter reaction was largely negative. The cultural moment in 2024 was one of deep distrust toward AI among the creative community.

- We needed to focus on Kapwing. Unfortunately, given our current resources, we can no longer run Tess sustainably, especially as our startup credits have now run out. We moved the most useful Tess functionality into Kapwing’s AI Assistant, which enables artists to set up private Custom Kais to emulate their own style with reference images. With focused resources, Tess might have grown. But with split attention, it could not.

What This Means for Entrepreneurs Building AI Licensing Models

The underlying model—paying human creators a royalty to license their style, voice, likeness, or training data to fine-tune AI—is not dead. It's early. Here's what I'd tell founders exploring this space:

- Creator adoption is a two-sided marketplace problem, and supply is harder than it looks. Unlike music or stock photography, illustration has no dominant intermediaries. You have to recruit one artist at a time. Factor in that a meaningful fraction of your target creators will refuse on principle, regardless of the compensation.

- Brand dilution is a real product problem. Creators with valuable styles need meaningful controls over how those styles are deployed, and they appreciate visibility into how they are used. Build custom moderation guardrails into your product to differentiate.

- Timing matters. We launched Tess into a cultural moment of maximum hostility toward AI among artists. That's changing. As the legal framework solidifies and creator attitudes shift, the same business model will face less friction.

- Focus on one thing at a time to move faster. We chose to explore a pivot, not realizing the investment that it required. And the result was that we slowed down on Kapwing. Although we’ve recovered with the launch of Kapwing AI, I regret that we did not put all of our eggs in one basket.

Closing Thoughts

I’m proud of Tess and what we built. We genuinely attempted to move the industry toward a more equitable AI licensing model where human creators get a cut of the value that they generate, and where brands and publishers have an easier time defining the output they want. If Tess had succeeded, it would have created a more beautiful world with more accessible art for all.

When I got married in 2025, I used Tess to generate all of the artwork: the invitation, the table placards, and the textures for the table chart. We also put up art made by our artist friends like Baffour Kyerematen, Gloria Ford, and Abby Cali. Tess will always remind me of my wedding day and the beautiful coexistence of AI art and human-made art.

Thank you to each of the 142 customers who took a bet on Tess and to the artists who trusted us with their work. We’re sorry we couldn't grow Tess large enough to sustain it. I hope that an entrepreneur takes another shot at this in the future.

If you’re building in this space, reach out. I’m happy to share more of what we learned. We'd also be open to selling the Tess.Design domain and concept so that the idea can live on.

Comments

By petterroea 2026-03-10 6:31

> The timing wasn’t right. We depended on artists helping us to promote the platform, and they didn’t.

There's a certain arrogance to believing the timing "simply wasn't right". It looks really bad if you try it with any recent controversy:

* "The timing wasn't right to charge people for heated car seats"

* "The timing wasn't right to make Photoshop a subscription service"

* "The timing wasn't right to increase fees"

It's a way of talking yourself away from the fact that what you are making may, inherently, be disliked. The cited survey even seems to have been read as favourably as possible:

> Surveys consistently showed that consumers believed artists deserved payment when AI generated content in their style.

This doesn't mean people want artists' styles to be generated by AI. It could mean they think it's horrible, but if it happens they should at least be compensated for it. In fact, the quoted survey even says 43% believe companies should ban copying artists' styles. I could make the exact opposite argument with the same data:

"Many consumers believe companies should ban copying styles, and this may be a more common opinion than measured as most people have no experience with modern AI tools and therefore no chance to have made an opinion yet. What is known is that the majority believe that if artists were to be copied, they should at least be compensated"

edit: formatting, typo

Making Photoshop a subscription service was an extremely successful business decision, so I'm not sure what the comparison is supposed to mean here.

I say this as someone who switched to Krita and canceled my CC subscription.

"extremely successful business decision" and "inherently disliked" can both be true. Increasing fees quite often works out for the business too, but consumers don't generally like it.

> consumers don't generally like it

I'd prefer looking at what (potential) consumers actually do rather than what they say. "Saying" is a really weak signal.

By NikolaNovak 2026-03-10 12:55

Yes, and OP's point stands.

I am one of those people: 1. Absolutely despise Lightroom being a subscription and 2. Haven't switched yet.

There are moats and capabilities and friction. Not every vote with your wallet is a ringing endorsement. I have 15 years of Lightroom databases with over 100k photos, so switching is hard. At the same time, those date from when I had a photography side gig; now that I don't, paying a monthly cost for no monthly gain really peeves me.

So it absolutely is a successful business decision and it absolutely is widely despised by customer base. Both are true :-)

By SiempreViernes 2026-03-10 9:38

Ok mr sceptic, where are your numbers showing consumers buy more of a thing after it becomes more expensive?

Was merely commenting on the observed preference vs stated preference issue (aka "the Say/Do Gap"), not the underlying point about raising prices.

By skeeter2020 2026-03-10 14:08

Apple has done pretty well historically walking the line between mass consumer and Veblen good. Without them I don't believe we'd see the same variety of high-end devices; they somehow convinced a lot of people that the price of a phone is a signal of its value.

By noosphr 2026-03-10 8:47

Enshittification kills companies slowly, then all at once.

I agree, to an extent. It reminds me of the Simpsons meme[1]: it's the children who are wrong!

But sometimes timing is indeed wrong, not because of anything you did, but just because no one wanted it _yet_. Google Glass from a few years ago comes to mind. Now Meta has a similar idea and it does seem successful, much to society's dismay.

But sometimes it is worth asking, "does the idea actually suck and that's why no one likes it? Or is it actually a good idea that is muddied with other issues that no one likes?"

The article doesn't make it clear that they were that introspective.

yeah I agree -- There are countless examples with almost every invention of someone else who got there first but couldn't market it successfully at least partially because of timing.

By skeeter2020 2026-03-10 14:04

For most examples you need to squint pretty hard to make them "the same" though. In a world of "x, but for y" these are essentially new inventions; it's only our human brains in hindsight that look for the continuity. It's also an interesting question to ask if you can really separate the idea from the execution when defining an "invention".

By pwillia7 2026-03-10 14:40

fair point

Speaking in generalities, we underestimate how many things fail due to circumstances like "the market wasn't ready for it." (In contrast to the more dramatic and common "all great success stories are due to leaders singularly imbued with unique and ineffable Greatness and Genius.")

Yeah, ten or so years ago, Google glass flopped spectacularly. Now for whatever reason, Meta Raybans are actually somewhat successful, much to the world's dismay.

Bad timing can be due to a number of factors; some can be good, some can be bad.

By codechicago277 2026-03-10 13:22

Google Glass looked dorky, Meta Ray-Bans look cool.

> What is known is that the majority believe that if artists were to be copied, they should at least be compensated.

I get the emotional side of this argument - artists going hungry while someone else cashes in on their ideas. But compensation is a dangerous premise, because derivative art is an established type of artistic freedom. Artists routinely mimic styles, or work within the bounds of styles established by masters, but they've never been expected to compensate those styles' pioneers. Imagine it as a precedent:

"Your stuff borrows from Warhol? Guess what buddy, you owe the Warhol estate x% of your sale."

Perhaps you're arguing things change when commercial interests are involved? But again, this has never been the case for advertising companies (with their hired artistic guns) or any kind of graphic design leaning on established artistic styles for effect and making a killing in the process.

In the case of AI, even if it has a commercial master, it seems much closer to the borrowing of an ordinary artist. It's a trained entity, with deep understanding of styles, capable of making new works. On top of that, it works under the instruction of a user with their own ideas, whose guidance is crucial in deciding the work's final state. The user is the artist here - like one of the visionaries who delegate the nitty gritty of production to helpers. In this case the helper is leased from the AI company, which is more like an agency supplying those helpers.

All in all it's hard to see how any compensation model wouldn't end up constricting the artistic freedom most of these artists depend on.

You can’t derive works at scale manually. We’re talking about a machine here.

By rickydroll 2026-03-10 14:48

I suggest you look into the history of art, specifically how artists have operated studios or "factories" where apprentices produced replicas of the master's work. Modern artists have numbered prints, where they reproduce their work at scale as a revenue source. Sol Lewitt produced instructions for his art, and people reproduce them as public murals all over the place. see: https://massmoca.org/event/sol-lewitt-a-wall-drawing-retrosp...

By squidbeak 2026-03-10 14:56

The 'machine' existed long before the AI companies in university art studies, galleries and republication, and the scale came from the graduates or ordinary acolytes borrowing wholesale the ideas and techniques they admired. Scale shouldn't alter the principle. Once there's a right to compensation established for derivation, you have to explain why it doesn't apply to the millions of artists making a living from exactly that.

It’s not arrogant to be firm in your beliefs. You’re not arrogant for believing the timing is never right. You may even be 100% right, but you don’t have to belittle or put down the other side. In this case, they already lost, what more do you want?

By petterroea 2026-03-10 7:14

How is it not arrogant to be firm in your belief, even if signals say otherwise? If I believe it is OK not to shower, and everyone around me complains about it, is it not arrogant of me to ignore the signals because "they just don't understand yet"?

I think a much more useful question is whether some arrogance is necessary to succeed. I personally think it is. But we are discussing a post mortem here, and the author is (in my opinion) clearly beating around the bush and using "the time wasn't right" to hide what may be uncomfortable truths.

Is a post mortem valuable if it doesn't address these face first? I am not the one with all the answers here, but what I am used to in mature tech teams is that the uncomfortable parts are usually the most important in any post mortem.

There are plenty of stories about companies that failed because the timing was wrong, and then saw another company succeed in their place later on. That doesn't mean failure simply means "the timing was wrong"; you are putting a lot of weight on society adjusting to your belief. Consider that venture capital often invests in hundreds of founders like this, betting that at least one of them wasn't wrong. That's not statistically in your favor.

It is OK (in fact it is valuable) to fail and conclude that your signals may have been wrong. There's a reason some venture capital funds prefer investing in people who have failed before.

By codemog 2026-03-10 8:32

Personally, I don’t know how you can say the timing was never right and will never be right at any point in the future. That frankly seems impossible, unless it was something like a B2B SaaS that gouges out your eyeballs, but I guess we’ll agree to disagree.

By wolvesechoes 2026-03-10 9:20

> It’s not arrogant to be firm in your beliefs

I mean, if you keep ignoring stuff that undermines your beliefs that's the definition of arrogance.

By antonvs 2026-03-10 9:11

If your beliefs are in conflict with reality, then holding them firmly may indeed be arrogant.

> Surveys consistently showed that consumers believed artists deserved payment when AI generated content in their style.

It's interesting that "consumers" are generally for the expansion of IP laws. At the moment, I'm fairly certain that "style" is not something protected by Copyright. I personally do not want this, and I'm sure there are likely many like me. Poorly thought out IP laws lead to chilling-effects, DRM, stupid and unnecessary litigation, and ultimately a loss of digital freedoms.

> What 325 Cold Emails to Artists Taught Us

I'm surprised 1% didn't respond with "EAT HOT FLAMING DEATH SPAMMER" for sending them unsolicited commercial email. ;)

Trying to protect a particular style is just unworkable for obvious reasons. The only solution I can think of is requiring AI companies to license all of the content they have in their training set so artists get paid for the training rather than trying to work out which source material links to which outputs which is impossible.

When I buy a book, I don't buy a license to read it, I don't sign an EULA that says I won't scan it, digitize it, or write a program to analyze the word frequencies it contains. Do you want to buy a license to read a book? Because this is how you get there.

By squokko 2026-03-10 4:18

The law has always been able to recognize a distinction between Hunter S. Thompson reading Ernest Hemingway and learning from his style and a billion GPUs reading a billion books to be able to produce it on demand. It takes time for the law to catch up to the technology but it will.

You don't sign an EULA saying you can't do those things because scanning then distributing is already prohibited by copyright. The way to start a license war is to keep the status quo of these companies being able to ingest and essentially reproduce human work for free. One of my big worries about AI is that it will accelerate companies locking everything down and hoarding their own data.

By pastel8739 2026-03-10 6:32

I suspect it already has a dampening effect on individuals sharing. It leaves a bad taste in the mouth to know that anything you share intending to help fellow humans will immediately be ripped and profited from by companies that want to take your job and profit from it.

The old rules were built on old capabilities and an old reality which no longer exists.

This is a deceptive line of dismissal. Sound principles need to be figured out before imposing any kind of restriction on art; "things have changed" doesn't cut it.

By Gigachad 2026-03-10 21:33

Figuring things out is exactly what needs to happen. I think it is valid to dismiss arguments of “this is how copyright has always worked” when those rules were written before AI completely changed the game.

In Spain books include a copyright notice explicitly prohibiting reproduction and digitalization and alluding to article 270 of the Spanish criminal code.

By franciscop 2026-03-10 7:03

The book can say anything it wants; whether it's true and/or applicable in court later on is a very different matter. Spain's SGAE is a very powerful lobby but still needs to follow the law.

Edit: haven't followed the law in a while, but you could definitely copy, digitalize and scan documents for yourself and your friends (copia privada).

By aerhardt 2026-03-10 7:35

In Spain EULAs cannot infringe upon the law either.

By add-sub-mul-div 2026-03-10 4:22

Perhaps it's that the transaction for you, an individual not explicitly profiting from the work, should be treated differently than a corporation using a work solely to profit from it.

By throwawaysoxjje 2026-03-10 5:29

Of course you don’t, because it’s not the EULA that enforces the copyright. Copyright law is what enforces the EULA. It’s right there in the fact it’s a Licensing Agreement.

The problem isn’t the reading. The problem is the output based on somebody’s other work.

There is a reason why we call it styles, because it’s a recognizable pattern someone came up with maybe after decades of work.

The "funny" thing is that we absolutely allow people to copy style... but somehow software isn't allowed to do that?

You don't even need to have a legally acquired source material to produce work in a certain style.

The new reality allows for original creators to actually track the chain, so we're in this situation.

By croes 2026-03-10 11:43

If people had the same mass output as software, we wouldn’t allow that either.

If one or two people take an apple from your tree it’s not a big deal, if a machine takes 10,000 it is.

By mitthrowaway2 2026-03-10 5:52

When I buy a patented product I don't sign an EULA that says I can't manufacture and sell a copy, but I still can't manufacture and sell a copy.

By squidbeak 2026-03-10 15:15

It's even worse than that. You then have to pay an additional fee to use its ideas as inspiration for your own book.

By esafak 2026-03-10 5:19

It is not an individual buying the book but a corporation, with the purpose of being able to create imitations of it, and all other books.

By saaaaaam 2026-03-10 6:33

Copyright quite literally protects the act of copying or reproducing a work protected by copyright. And you are technically entering into something akin to an end user licensing agreement when you buy a book, the only difference being that the EULA is incorporated into law on an international basis through reciprocal copyright treaties.

So if you scan a book you are making a copy. In some copyright jurisdictions this is allowed for individuals under a private copying exception (a copyright opt out, if you like), but the important thing is private use. In some jurisdictions there is also a fair use exception, which allows you to exploit the rights protected by copyright under certain circumstances, but fair use is quite specific and one big issue with fair use is that the rights you are exploiting cannot result in something that competes with the original work.

Other acts restricted by copyright include distribution, adaptation, performance, communication and rental.

So if you copy a book, digitize it, and write a program to analyze the word frequencies it contains you may, in some jurisdictions but not all, be allowed to do this.

If you’re doing it locally on your own machine you are simply copying it. If you do it in the cloud you are copying it and communicating the copy. If you copy it, analyze it and train an AI model on it that could be considered fair use in certain jurisdictions. Whether the outputs are adaptations of the training data is a matter of debate in the copyright community.

But importantly if you commercialise that model and the resulting outputs compete with the copyright protected material you used to train, your fair use argument may fail.

So when you buy a book you are actually party to what is effectively a licence granted by the copyright holder, albeit to the publisher. But as the end user of the book you are still restricted in what you can do with that copyright protected work, through a universal end user licence encoded in law.

The cumulative license fees required to properly compensate all artists are so absurd that they will probably genuinely burn down the entirety of the global economy if paid. The only solution I can think of is to burn down just the AI, to be revisited later and rebuilt as a tool that won't require an absurd amount of training data and that also leaves a lot more to its human operator beyond merely accepting literal categorical descriptions that are fundamentally tangential to the artistic values of outputs.

And I think the same could happen to LLMs. If it took all the fossil fuel on Earth just to barely be able to drive a car to a car wash, there's more wrong with the car than with the oil price.

> is so absurd that it will probably genuinely burn down the entire global economy if paid.

Where did you get that idea? Global economy is ~200T/year PPP. 0.1% of that split across every artist you want the training data from would be insanely difficult for the vast majority of them to turn down. Which makes sense as art isn’t that big a percentage of the global economy compared to say housing, food, medical care, infrastructure, military spending etc.

Obviously the incentive to take without compensation is far more appealing, but that doesn’t mean it was impossible to make a reasonable offer.

For all the people represented in the training data to receive royalties would be an incredible wealth transfer to the Extremely Online. My forum posts, StackOverflow answers etc are also contributing to the model outputs. The training data, by volume, mostly belongs to blog authors, redditors, Wikipedia editors, to us!

By nneonneo 2026-03-10 6:27

The people in that counting to infinity subreddit would get compensated a lot if this were fully automated - their posts were so overrepresented in the training set that many of their usernames became complete tokens (e.g. SolidGoldMagikarp).

I object to calling people chatting online artists.

However, ultimately nobody is going to pay them more than the value of their posts to the AI company which puts a severe cap on what that’s actually worth. People who post a great deal of online content might be worth compensating a few thousand dollars, but it would be hard for them to then turn that down.

I think the lower bound for someone signing away rights to their whole art portfolio is more towards $1m than a few thousand. A few k is just a month's salary that they can "make" themselves. Offers that small would be almost off-putting.

By Retric 2026-03-10 17:06

That would be true if they gained the exclusive ability to reproduce works etc.

AI companies want a license to train not ownership of a portfolio.

By abustamam 2026-03-10 5:46

Hey finally my reddit and hn habit can be lucrative!

There are definitely >1m artists worldwide, some popular some less so, and $1M * 1m =1T, not 0.1% * 200T =200B.

Hard cap of 200B divided by 1M equals 200k, and that would surely be more reasonable, but we aren't hearing artists responding favorably to hypotheticals in that range, so I'm skeptical that "ain't nobody gonna turn that down".

This isn’t ownership it’s a license.

I think the vast majority would agree to let AI companies train on their art for 10k let alone 200k. Don’t forget the average global salary is way below what you see in the US.

Put another way how many people would turn down 6+ months salary. Of course the vanishing tiny percentage people care about would want more, but that’s a separate question and not particularly valuable to AI companies.

> Put another way how many people would turn down 6+ months salary.

Didn't that exact social experiment take place in the US last year? I thought the result of that was disastrous if media reports are to be believed.

OTOH I remember the creator of Wordle closed the "low few mil" deal instantly, so I do believe it's unlikely that people turn down a few _hundred_ months' worth of salary. But those artists are not from regions with 50-100x less median income and/or wider income distribution relative to the US; I think they're concentrated in relatively high-income, low-disparity regions. So I don't think there's some backwater where lifetime income is no more than 6 months' worth in the US and that also has an abundant supply of artists.

And IMO those artists are basically engaged in geo-scale dumping of media content. It's the same phenomenon as how moving consumer electronics manufacturing to the US instantly multiplies costs by small integers instead of just adding a premium of a few percent. If that phenomenon were quenched and those effects were somehow integrated into the economy, it would change the global balance of power to some statistically significant degree; we'd be seeing flying rocket amphibian McBoatfaces everywhere. That might be interesting, but I'm not sure it's an interesting kind of interesting thing to see.

By Retric 2026-03-10 18:53 Wordle involved actually selling the rights to something, not just allowing AI to be trained on it while he kept the website.

That’s really not a reasonable comparison to what is being sought.

As to global artists, I was suggesting that the majority of artists globally make ~$20k or less per year from their art. To get to millions of artists you need a generous definition: Hollywood is full of actors, but how many of them made $20k+ last year as actors? If you disagree, fine, let’s double it: 6 months' salary is still only $20k, and I suspect that would be a seriously tempting offer when you retain all rights to past and future works.

> The cumulative license fees required to properly compensate all artists is so absurd that it will probably genuinely burn down the entirety of global economy if paid.

That's kind of an interesting concept: "since the scale of my transgression was so big, I should get away with it scot-free."

By nickff 2026-03-10 6:44 That’s how eminent domain and regulatory takings work in most countries.

By franciscop 2026-03-10 6:59 "If it took all the fossil fuel on Earth" What do you mean? TRAINING an LLM takes roughly the same amount of energy as raising a person, so it's not even really expensive in energy terms.

By JAlexoid 2026-03-10 6:30 > I'm fairly certain that "style" is not something protected by Copyright

To a degree it is protected, but not by copyright. Design patents are a thing, and companies have sued each other over them (Apple vs. Samsung during the "smartphone wars" comes to mind).

Just out of curiosity, do you believe artists deserve to be compensated when their art is used to generate stuff in their style?

I'm staunchly against expansion of IP laws. But I personally think that when a corporate machine gobbles up an artist's works so that people like me who can't draw can generate silly memes for a few bucks a month, the artist should be compensated. The company is profiting off of other people's work! That's not right.

That said, the mechanism for calculating compensation currently appears to be an unsolved problem.

> The company is profiting off of other people's work! That's not right.

What's wrong with it?

We live in an interconnected world. Every company or individual who profits off anything does so, in very large part, thanks to work left behind by others whom they don't directly compensate.

Stated differently, if we look at the other side of the coin, it's one thing to create value, and another thing to capture value. If you are a business (and artists seeking profit are businesses), you create value and then try to capture it. Creating value and trying to capture it (in the form of profit) is the entire name of the game. But no business captures 100% of the value it creates. If you make a product/artwork/service/whatever and release it to the public, lots of people may use it, view it, be inspired by it, learn from it, and ultimately profit off it in their own way without you necessarily being able to capture some part of it. And what's wrong with that?

Do we really want the entire world to be endlessly full of cookie-licking rent seekers who demand profit every time anyone does anything? Because they failed to capture the value they created, and thus demand a piece of the pie from those who are better at capturing value?

I like the way Thomas Jefferson put it:

> If nature has made any one thing less susceptible than all others of exclusive property, it is the action of the thinking power called an idea, which an individual may exclusively possess as long as he keeps it to himself; but the moment it is divulged, it forces itself into the possession of every one, and the receiver cannot dispossess himself of it. Its peculiar character, too, is that no one possesses the less, because every other possesses the whole of it. He who receives an idea from me, receives instruction himself without lessening mine; as he who lights his taper at mine, receives light without darkening me. That ideas should freely spread from one to another over the globe, for the moral and mutual instruction of man, and improvement of his condition, seems to have been peculiarly and benevolently designed by nature, when she made them, like fire, expansible over all space, without lessening their density in any point, and like the air in which we breathe, move, and have our physical being, incapable of confinement or exclusive appropriation. Inventions then cannot, in nature, be a subject of property. Society may give an exclusive right to the profits arising from them, as an encouragement to men to pursue ideas which may produce utility, but this may or may not be done, according to the will and convenience of the society, without claim or complaint from anybody. Accordingly, it is a fact, as far as I am informed, that England was, until we copied her, the only country on earth which ever, by a general law, gave a legal right to the exclusive use of an idea.

By sciencejerk 2026-03-10 6:38 > Do we really want the entire world to be endlessly full of cookie-licking rent seekers who demand profit every time anyone does anything? Because they failed to capture the value they created, and thus demand a piece of the pie from those who are better at capturing value?

The starving artists I know would be extremely happy to get even one cookie to lick. I know an artist prodigy who paints on canvas, has work in a sizable gallery and at least one institutional patron, and is constantly hosting paid workshop events. He designed and built his own custom pine art house with 40 ft ceilings, covered in beautiful stained wood and arches, with large metal cuttings and engravings of wild horses on the railings. This artistic prodigy is still starving and works part-time as a handyman/construction worker. He is strongly opposed to AI, by the way.

Most artists are "starving artists"; extremely few artists can support themselves through their creations alone. Many artists make no money at all, and many seem to work or create alone as individuals, meaning that they almost always lack the funds or community resources to protect their creative work.

By csallen 2026-03-12 9:40 To the extent that you care about deriving money from your art, you aren't just an artist, you're a business. And that's fine. I love businesses and the people who start them.

But, sadly, art isn't an easy business. There is a tremendous supply of art, and not enough demand for it. In other words, it's very competitive.

So, just like every other competitive industry on earth, if you're going to be in the art business, then simply making a good product/service isn't enough. You have to think about marketing and sales and differentiation and distribution and strategy and the whole big picture.

There are plenty of starving artists who make incredible art that nobody pays for, just like there are plenty of starving startup founders who build well-coded apps that nobody pays for.

By pastel8739 2026-03-10 6:27 > Do we really want the entire world to be endlessly full of cookie-licking rent seekers who demand profit every time anyone does anything?

No, far better that we have four rent-seekers who gobble up everything that anyone is naive enough to share with the world, then turn around to demand profit in order to keep up with the new pace of the world that they’ve created.

But original artists being rent-seekers is OK, right?

PS: I categorically disagree that AI developers are rent-seekers, unless they require rent for the products their models generate.

By JoshTriplett 2026-03-10 8:06 I'd love to see copyright abolished. As long as it continues to exist, however, AI should not get an exemption; that would directly advantage a few large companies over everyone else, giving them a special privilege to violate licenses that nobody else gets.

By csallen 2026-03-12 9:36 What's the exemption? To me it seems like we're trying to do the opposite, make the laws even more stringent for AI than we do for people.

Imagine a genius person went out, trained himself by studying all the great art, and in doing so gained the ability to paint or draw in just about any style. That wouldn't be a copyright violation. In fact, plenty of people do this, or some version of it. But we say it should be illegal for AI... why, exactly? Because artists don't like it? Because it's "too good"?

If we're going to keep copyright around, then imo what should be illegal here is using the AI as a tool to create copies of people's work. Like, if I go out and use AI to generate a book of poetry and it's got a bunch of Beatles lyrics in it, fine, sue me.

By abustamam 2026-03-10 10:31 Yeah, that is true. I'd be on board with abolishing copyright then.

By onion2k 2026-03-10 7:28 It's interesting that "consumers" are generally for the expansion of IP laws. At the moment, I'm fairly certain that "style" is not something protected by copyright. I personally do not want this, and I'm sure there are likely many like me. Poorly thought out IP laws lead to chilling effects, DRM, stupid and unnecessary litigation, and ultimately a loss of digital freedoms.

Kapwing is specifically designed for artists to share IP with other people in an IP-friendly and financially profitable way. A 'consumer' on Kapwing is not the same as an ordinary person browsing for AI generated art, and the fact that people who make money from selling their IP on there are in favour of expanding IP law shouldn't be a surprise.

All this really tells us is that Kapwing's artist community believe protecting their individual art style is more valuable to them than any money they'd earn from licensing it on a per-image basis to Kapwing's AI tool. I'd be willing to bet that if Kapwing changed the offer to a flat-fee-of-$50,000-a-year-plus-per-image-fee they'd find 99% of artists on there changed their minds. As with most things, people feel strongly about their rights all the way up until the price is right.

By maplethorpe 2026-03-10 5:11 It's interesting you interpret the consumer's response as a desire for the expansion of IP laws. As an artist whose work exists in many of these training sets, I'm of a different opinion: IP laws can stay the same, but they should have purchased a license to use my art before including it in their training data.

Since they didn't, they should go to jail. The same way I would have gone to jail if I built Sora in my basement and sold it to the public.

As an artist, your license didn't ban learning from your work. Unless your content was acquired without a license at all, you absolutely gave them permission to use it in training sets.

That is the gap in the legal landscape.

By maplethorpe 2026-03-10 7:57 No, I didn't. This is use in a software product without my permission. That's never been allowed.

Just because you obfuscate what's happening by calling it "learning" and pretending your model is actually just looking at pictures the same as a human, doesn't make it true.

By JAlexoid 2026-03-10 11:32 Unfortunately you did grant that permission. Once you granted the permission for someone to hold a copy, they have the permission to process it.

I can assure you that you didn't grant a license with an exclusive list of operations that can be performed on your work of art. At best you may have had something like a "no commercial use" clause and general broad terms.

By visarga 2026-03-10 5:16 I thought it was at most a monetary fine; do people go to jail for copyright infringement? But you seem to want to own all the air around your work, and the ground beneath it too. Nothing can exist around it, so a creative person would do better to avert their eyes rather than load up on useless ideas. Why should I install your "furniture" in my brain when I am not allowed to sit on it? In these cases I think authors provide a net negative to society by creating more works that further forbid others from creating in the same space.

Here, for example, any comment is open to read and respond to. On ArXiv any paper can be downloaded, read and cited. Wikipedia contains text from many thousands of editors, building on each other. We like collaboration more than asserting our exclusivity rights. That is why these places provide better quality than work for direct profit or, God forbid, for ad revenue, which is where the slop starts flowing.

By protocolture 2026-03-10 5:22 > IP laws can stay the same, but they should have purchased a license to use my art before including it in their training data.

But including your art in the training data is fair use (or otherwise exempt) by most standards, as no reproduction occurs. You are advocating for a change to IP law to make it more restrictive.

By JoshTriplett 2026-03-10 8:04 > But including your art in the training data is fair use

The four factors of fair use in the US:

> the purpose and character of your use

Commercial, for-profit. Not scholarship, not research, not commentary, not parody, etc.

> the nature of the copyrighted work

Absolutely everything. Artistic, creative, not purely factual.

> the amount and substantiality of the portion taken, and

All of it, from everyone.

> the effect of the use upon the potential market.

Directly competing with those whose data was copied.

By rcxdude 2026-03-10 9:21 Factors 3 and 4 are what that argument is based on, I believe: 3) on the basis that the output is not _reproduced_, and 4) on similar grounds, that output which is just not at all the same as the input data isn't affecting the market for the original image (I think this is the more debatable one, but in general the existing cases have struggled at the early stages because the plaintiffs have not been able to actually point to output that is a copy of their part of the input, and this does actually matter).

By protocolture 2026-03-12 3:33 > Directly competing with those whose data was copied.

An LLM doesn't compete with Art the same way that Photoshop doesn't compete with Art.

>All of it, from everyone.

With the result that anything produced by the LLM does not reproduce any single source in its entirety (and if, when compelled, they are able to do that, it is a bug, not a feature).

Fair use is too specific, tbh. Rather than ruling it fair use (which seems to be where things are going), it should just be ruled "use". There's nothing wrong with building a mathematical model using available data.

By JoshTriplett 2026-03-12 4:34 > An LLM doesn't compete with Art the same way that Photoshop doesn't compete with Art.

Yes, it does. Many people are using AI-generated works in places where they originally would have either paid an artist, programmer, or other creative professional, or done without. Many companies are claiming to reduce staff because of AI (whether that's true or an excuse). There is plenty of evidence that AI is directly competing with various individuals, businesses, and industries.

> With the result that anything produced by the LLM does not reproduce any single source in its entirety

You do not have to reproduce sources in their entirety to produce derivative works.

By oreally 2026-03-10 8:59 > the amount and substantiality of the portion taken, and

> All of it, from everyone.

Yeah, I'd like to see how drawing two circles violates the copyright of drawing one circle!

Fair use by most standards? Which standards are those? I don't think a standard about training an AI on billions of images exists.

By the same 'transformative' standards that allow satire, reaction and commentary videos to exist. And those take 100% from the source and add context, whereas good generated AI images that aren't wholesale copying take like less than 10% from the original source.

In addition, the idea that you need to pay rent on *your observation* of someone else's work is absurd. No one pays Newton's descendants for making lifts or hosting bungee jump sport activities.

By maplethorpe 2026-03-10 12:19 > good generated AI images that aren't wholesale copying take like less than 10% from the original source.

So would the model work if it only trained on the top 10% of pixels in every image? Or do they in fact need the entire image before they begin processing it, and therefore use the entire image?

> In addition, the idea that you need to pay rent on your observation of someone else's work is absurd.

I agree that's absurd. But training a model is no more "observing images" than an F1 car is "walking" down a race track. Just because a race car uses kinetic energy, gravity, and friction to propel itself, the same way a human does, doesn't mean it's doing the same thing as a human. That comparison you're making is the real absurdity.

By oreally 2026-03-10 13:41 > So would the model work if it only trained on the top 10% of pixels in every image? Or do they in fact need the entire image before they begin processing it, and therefore use the entire image?

The model works by training on the features that humans can make sense of in the image they're presented with, provided the image and the observations of its features are clear enough. The generation then makes use of those observations. I'm just using 10% as an arbitrary number to describe proportions. If a generated image drew 100% of its observations from the same source image, the model would be overfitting, and many would deem it to have produced a copy.

> Just because a race car uses kinetic energy, gravity, and friction to propel itself, the same way a human does, doesn't mean it's doing the same thing as a human.

WTF does this even mean? A race car uses concepts from Newton, just as a human uses gravity to train its muscles to move, knowingly or unknowingly. But you don't see them (car makers/humans) paying rent to Newton after he discovered gravity. Come on!

Is it transformative if I take all the pages in Hanya Yanagihara's A Little Life and use a thesaurus to change every second word?

Or a more realistic scenario: what if I translate it to Spanish without license from the author? That's not allowed, and yet I have "transformed" the work in the same way that an LLM does.

By protocolture 2026-03-10 22:37 If I buy a book entitled "How to make a table" and then make a table, the author does not own the table I made.

If I buy a book and use it to prop up a table, the author likewise does not own the table, or any works I undertake on that table.

If I buy a book and rip out the pages to make a collage, the US is the only legal jurisdiction where I run even slight risk of civil penalties.

An LLM is downstream of a book. Using a book to make an LLM does not confer any rights or privileges over the LLM on the original author, just as using a hammer or nails doesn't entitle the hammer or nail manufacturers to any royalties on what I make, even if I build a hammer-making machine with them. There's no right to the works of people who build on your work without reproducing your work, at least outside of strict copyleft.

It's like demanding a cut from people who learned how to use Photoshop by watching your Photoshop tutorial YouTube videos.

This is why the most successful cases against LLMs have been on the "Did they purchase the book" side of the fence, and not on the "What did they do with it" side, apart from the one case where the legal company tried to use the LLM to reproduce, 1:1, the content they had a limited license to. That's obviously a no-go, and they should have known better.

These are my opinions ofc.

> Is it transformative if I take all the pages in Hanya Yanagihara's A Little Life and use a thesaurus to change every second word?

If you meant it literally, I'd think such a version would be a sort of parody. It'd be up to lawyers in cross-examination to prove the work was intended for that purpose, though.

> Or a more realistic scenario: what if I translate it to Spanish without license from the author? That's not allowed, and yet I have "transformed" the work in the same way that an LLM does.

A lawyer would probably answer this better than me, but the 'content' is the same and would violate copyright. There are also other factors, like whether it was translated/distributed for free.

Besides that, I regard LLMs as holding mathematical observations, in contrast to a translated work. So long as the user ensures the output isn't close to what's already available, IMO it fits the transformative criteria.

You cannot claim that a formulaic thesaurusing of a text is parody, not unless the process is related to the message of the original text itself. Even then, that's a dubious claim. Especially if it was done automatically.

I can just as well say that a translated work contains "linguistic observations". In fact a translator has to do a lot of transformative work in order to translate a text.

An LLM just takes a set of texts, looks at n-gram distributions, and generates similar text. It is quite literally a fuzzy way of copying. There aren't any mathematical observations in the output. Any math (statistics) is done in the copying process.
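The mechanism this comment describes, looking at n-gram distributions and generating similar text, is a classic n-gram (here bigram) Markov text generator. The Python sketch below illustrates that description only. Production LLMs are transformer neural networks whose learned weights encode next-token probabilities rather than an explicit n-gram table, and whether that difference matters is the crux of this subthread. The toy corpus and function names are hypothetical.

```python
import random
from collections import defaultdict


def train_bigrams(text: str) -> dict[str, list[str]]:
    """Record every word that follows each word; repeats encode frequency."""
    words = text.split()
    table: dict[str, list[str]] = defaultdict(list)
    for prev, nxt in zip(words, words[1:]):
        table[prev].append(nxt)
    return dict(table)


def generate(table: dict[str, list[str]], start: str, length: int,
             rng: random.Random) -> list[str]:
    """Sample up to `length` words, each drawn from the observed followers."""
    out = [start]
    for _ in range(length - 1):
        followers = table.get(out[-1])
        if not followers:  # dead end: this word never had a successor
            break
        out.append(rng.choice(followers))
    return out


corpus = "the cat sat on the mat and the dog sat on the rug"
table = train_bigrams(corpus)
sample = generate(table, "the", 8, random.Random(0))
print(" ".join(sample))
```

Every adjacent word pair in the output is a pair that occurs in the corpus; with a corpus this small, the output is mostly stitched-together fragments of the source.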

By oreally 2026-03-11 10:14 > You cannot claim that a formulaic thesaurusing of a text is parody, not unless the process is related to the message of the original text itself. Even then, that's a dubious claim. Especially if it was done automatically.

Oh, even if it's not a parody, it would look transformed enough that a first-time reader would get a completely different interpretation of the story compared to the original source. And that's all that matters.

> There aren't any mathematical observations in the output. Any math (statistics) is done in the copying process.

Wrong. Weights, which these models are composed of, are literally the numbers in an extensive mathematical equation.

> It is quite literally a fuzzy way of copying.

And no one knows (or at least there is no consensus on) what a 'fuzzy way of copying' is. It is either copying or it is not. You could say that training an LLM is abstracting and integrating various texts into its weights, thereby transforming the source material once by abstracting it and a second time by integrating it into those weights.

By protocolture 2026-03-11 22:43 > It is quite literally a fuzzy way of copying.

Even if it involved copying, that isn't immediately an issue. It's the distribution of a copy that's an issue. And if you look at the data side by side, you can see that while copying might be part of the process of creating an LLM, the LLM is not a copy of its source material.

By protocolture 2026-03-10 22:29 Google scrapes the entire internet to generate a searchable index of the internet. But the resulting search engine is only infringing where it reproduces entire copies of scraped news articles and images, and in both cases it has been put back in its place through legal means.

Like LLMs, it retains the produced index but not the original data.

The big concern is whether producing an LLM is competing with artists directly, but as artists don't make LLMs, this seems to be consistently ruled as non-competing.

By abustamam 2026-03-11 6:11 I don't quite follow. People don't go on Google and search for medieval history and pretend they wrote the Wikipedia article on it because they found it on Google.

People _do_ use LLMs to make art in someone else's style (knowingly or unknowingly) and claim it as their own creation.

Also, I wouldn't say the creators of LLMs are competing with artists. The users of LLMs are. Artists don't make LLMs, they make art, and people who use Midjourney and such make art.

But I'd argue that creators of LLMs are still liable for the harm people cause using their tools. Perhaps not legally, but certainly ethically.

By heavyset_go 2026-03-10 5:45 No precedent has been set when it comes to training and fair use.

By throwawaysoxjje 2026-03-10 5:35 Which case decided that?

By bluefirebrand 2026-03-10 5:43 > But including your art in the training data is fair use

It shouldn't be!

By protocolture 2026-03-10 22:40 Why? I don't understand this take at all.

By SpicyLemonZest 2026-03-10 4:26 I don't think you can infer consumer positions on IP law from positions on who ought to get paid or how much they should be paid. Many of those same consumers, and indeed many of the artists, feel that fan art of your favorite characters should be legal and unrestricted so long as nobody's making too much money off of it.

By spudlyo 2026-03-10 4:43 You're right. It's wrong to think that all of those people are busy writing to Congress demanding new laws be enacted. The problem is, the vast majority of people (while possessing a vague sense of right and wrong) do not understand how IP law works, and what the tradeoffs vis-a-vis the public good are. I'm sure many among the supposed consumers in this survey think something akin to "there ought to be a law", a sentiment sometimes echoed by readers of this very forum.

By gedy 2026-03-10 4:20 Yes, this is where I fear big corps will leverage hatred of AI into adding even more nonsense copyright rules, like protecting "style", which has never been under copyright, in the US at least. Not defending AI scraping and training! But this will be abused even if no AI is involved.

By j16sdiz 2026-03-10 6:40 > It's interesting that "consumers" are generally for the expansion of IP laws.

It's not. This totally depends on how you ask it.

Q: Do you think artists deserved payment?

A: YES.

Q: Will you pay for art?

A: MAYBE.

Q: Do you think people should go to jail for not paying for art?

A: NO.

By fennecfoxy 2026-03-10 10:00 Yeah, as a furry (exposed to the commission scene a lot), the number of commissions available for "Disney"- or "Pixar"-style art, or whatever style, even before the whole AI era, really tells you that they're hypocrites.

As others have pointed out in this discussion, there's a big difference between some humans producing drawings in a given style and a machine producing millions of illustrations per day in that style.

I have rarely been as disheartened as I am by the transformation of Studio Ghibli's beautiful art style, painstakingly developed over decades, into a heap of slop-trash that actively erases the human connections so artfully depicted in Hayao Miyazaki's work.

All that sorrow and it's not even my style.

So, no: a human who's willing to draw an illustration in a particular style, perhaps one they love and admire, is not necessarily a hypocrite for objecting to genAI's ability to produce billions of images in that style.

By fennecfoxy 2026-03-12 15:50 I don't think mass production cheapens the handcrafted experience. Just because a factory can pump out cheap shoes doesn't mean it's no longer enjoyable to buy, own and experience a handmade pair of leather shoes; it's only that the reason for buying them becomes different (necessity vs. luxury).

The Ghibli thing is a great example; who's still actually doing that? It was a passing fad.

But let's not pretend that that passing fad has changed the fact that Ghibli's films are absolute masterpieces that will continue being enjoyed by generations to come. Because they were and still are.

By AnthonyMouse 2026-03-10 6:41 > It's interesting that "consumers" are generally for the expansion of IP laws.

Don't forget how polling works. Change the wording of the question and you get a different answer.

Try asking them if they think Comcast or Sony should be able to sue individuals for posting memes that don't even contain any copyrighted material.

I evaluated Tess.design about a year ago for an app I was building. At first I was excited because I wanted a service that compensated artists. However, the number of artists was very limited, and the blog post said “more will be added soon”, but it had already been a year and it seemed like none had been added: not a good sign.

Then I tested out the image generation itself, and I was unable to come up with prompts that achieved the kind of images I wanted. My only prior experience at the time was with the OpenAI API. With OpenAI I usually got what I wanted on the first or second try, but with Tess, I couldn’t get a usable result even after 20 tries.

So in addition to the limited number of artists, I think the quality of outputs vs. competing models was a huge factor. I needed to generate thousands of images, so I couldn’t afford to do dozens of attempts for each one.

Hopefully one day there will be a service that can match the quality of OpenAI Image API and Flux but with compensation for artists.

Yeah this just shows that ergonomics matters. I use Nano Banana and Grok Imagine to generate silly images for my friends and siblings (instead of reaction gifs I do reaction slop). The workflow is quite easy. Just plop in a prompt and usually the first image is good enough to share. Not that my standards are high anyway.

Would I pay extra to ensure that the artists that these models were trained on were compensated fairly? Absolutely! Would I pay extra for that but with degraded ergonomics? Given that this is just a silly hobby, probably not, if I'm being honest.

I think if that problem can be solved, and it's marketed to the correct group, a player in this space could certainly do well.

Most people can't even imagine the complexity it would require to actually build a system that correctly tracks down the sources for image generation. Not to mention that each image is generated from literally every single training image, each contributing a very small percentage.

When someone inputs "create in style of studio ghibli", it's not hard to say that Studio Ghibli should get a cut. It's very different when you don't specify the source for the origin style.

And if you tried to identify the source material owner, the percentage of the output image that their work contributed to would be extremely - if not infinitely - small. You'd get minuscule payouts.
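To make the "minuscule payouts" point concrete, here is a hedged Python sketch comparing two hypothetical attribution schemes: the whole royalty going to the single artist named in a style prompt (roughly how the article describes Tess paying a 50% royalty to one consenting artist per model), versus the same royalty split evenly across every training image. The 4-cent price per generation and the one-trillion-image training set are illustrative assumptions, and the even split is a simplification; real per-image contributions would vary.

```python
from fractions import Fraction

# Hypothetical numbers for illustration only.
PRICE_CENTS = 4                   # assumed price of one generation
ROYALTY_SHARE = Fraction(1, 2)    # 50% royalty, as the article describes for Tess
TRAINING_IMAGES = 10**12          # assumed training-set size


def named_style_payout(price_cents: int, share: Fraction) -> Fraction:
    """Whole royalty goes to the one artist named in the style prompt."""
    return price_cents * share


def even_split_payout(price_cents: int, share: Fraction, n_images: int) -> Fraction:
    """Royalty divided evenly across every training image (a simplification)."""
    return price_cents * share / n_images


# Fractions keep the tiny per-image amount exact instead of rounding it.
single = named_style_payout(PRICE_CENTS, ROYALTY_SHARE)
diffuse = even_split_payout(PRICE_CENTS, ROYALTY_SHARE, TRAINING_IMAGES)
print(f"named artist:        {float(single)} cents per generation")
print(f"each training image: {float(diffuse):.1e} cents per generation")
```

Under the even split, even a trillion generations would pay each training image only 2 cents in total.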

Most people can't even imagine the complexity required to build an LLM. But here we are.

We throw plenty of smart people at plenty of hard ideas. If a company really wanted to, they would find a way to make this feasible.

Funny thing is that building an LLM isn't as complex as you might think.

But the problem of attribution is easily understandable to any human with a modicum of intelligence.

Imagine that you have a trillion input images, each with an associated source. During training they go through lots of processes, and every single image contributes, to a varying degree, to a subset of 8 billion parameters. That alone would produce a dataset of 1T × 8B entries just to say how much a particular image contributed to the output...

To mimic intelligence, the output is also randomized: the association is not static, and every single pixel in the output has its own lineage.

So, as you can probably imagine, calculating the actual source weights for the output would require at least 8e+21 calculations per output pixel... in double-precision floating point.

We know how to do it. It's just ridiculously expensive.

(The above example is for demonstrative purposes only)
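The commenter's estimate can be reproduced as plain arithmetic. This minimal Python sketch uses the comment's own illustrative figures (a trillion images, 8 billion parameters, 8-byte double-precision values) plus a hypothetical 1024×1024 output resolution. Like the original, it is for demonstration only and says nothing about how any real attribution system would work.

```python
# Illustrative figures from the comment above; not measurements of any real model.
TRAINING_IMAGES = 10**12       # "a trillion input images"
PARAMETERS = 8 * 10**9         # "8 billion parameters"
BYTES_PER_FLOAT64 = 8          # "double precision floating point"
OUTPUT_PIXELS = 1024 * 1024    # hypothetical output resolution (assumption)
ZETTABYTE = 10**21             # bytes (SI prefix)

# One contribution weight per (training image, parameter) pair.
table_entries = TRAINING_IMAGES * PARAMETERS
table_bytes = table_entries * BYTES_PER_FLOAT64

# The comment's lower bound: one pass over the table per output pixel.
ops_per_output_image = table_entries * OUTPUT_PIXELS

print(f"table entries:        {table_entries:.1e}")
print(f"table size:           {table_bytes // ZETTABYTE} ZB")
print(f"ops per output image: {ops_per_output_image:.1e}")
```

At 8 bytes per entry, the contribution table alone comes to 64 zettabytes, before any per-pixel computation is done.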

By abustamam 2026-03-10 12:54 Insults aside, you chose a very expensive way to solve the attribution problem. But my rebuttal is simple: we are literally commenting in a thread about an AI image generator that paid people. It didn't work, but if a company I've never heard of can try an experiment like this, I'm sure our billion-dollar AI overlords could if they wanted to.