An autonomous AI agent found a SQL injection in McKinsey's Lilli AI platform. What it extracted was worse than we expected.

McKinsey & Company — the world's most prestigious consulting firm — built an internal AI platform called Lilli for its 43,000+ employees. Lilli is a purpose-built system: chat, document analysis, RAG over decades of proprietary research, AI-powered search across 100,000+ internal documents. Launched in 2023, named after the first professional woman hired by the firm in 1945, adopted by over 70% of McKinsey, processing 500,000+ prompts a month.

So we decided to point our autonomous offensive agent at it. No credentials. No insider knowledge. And no human-in-the-loop. Just a domain name and a dream.

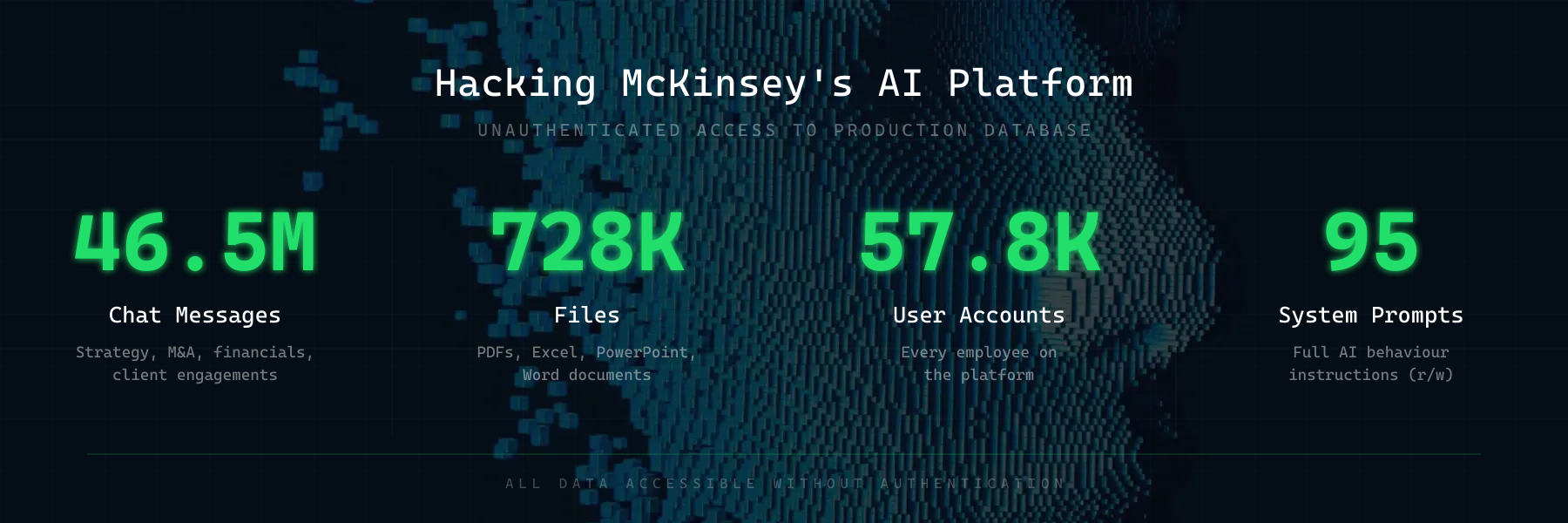

Within 2 hours, the agent had full read and write access to the entire production database.

Fun fact: As part of our research preview, the CodeWall research agent autonomously suggested McKinsey as a target citing their public responsible diclosure policy (to keep within guardrails) and recent updates to their Lilli platform. In the AI era, the threat landscape is shifting drastically — AI agents autonomously selecting and attacking targets will become the new normal.

How It Got In

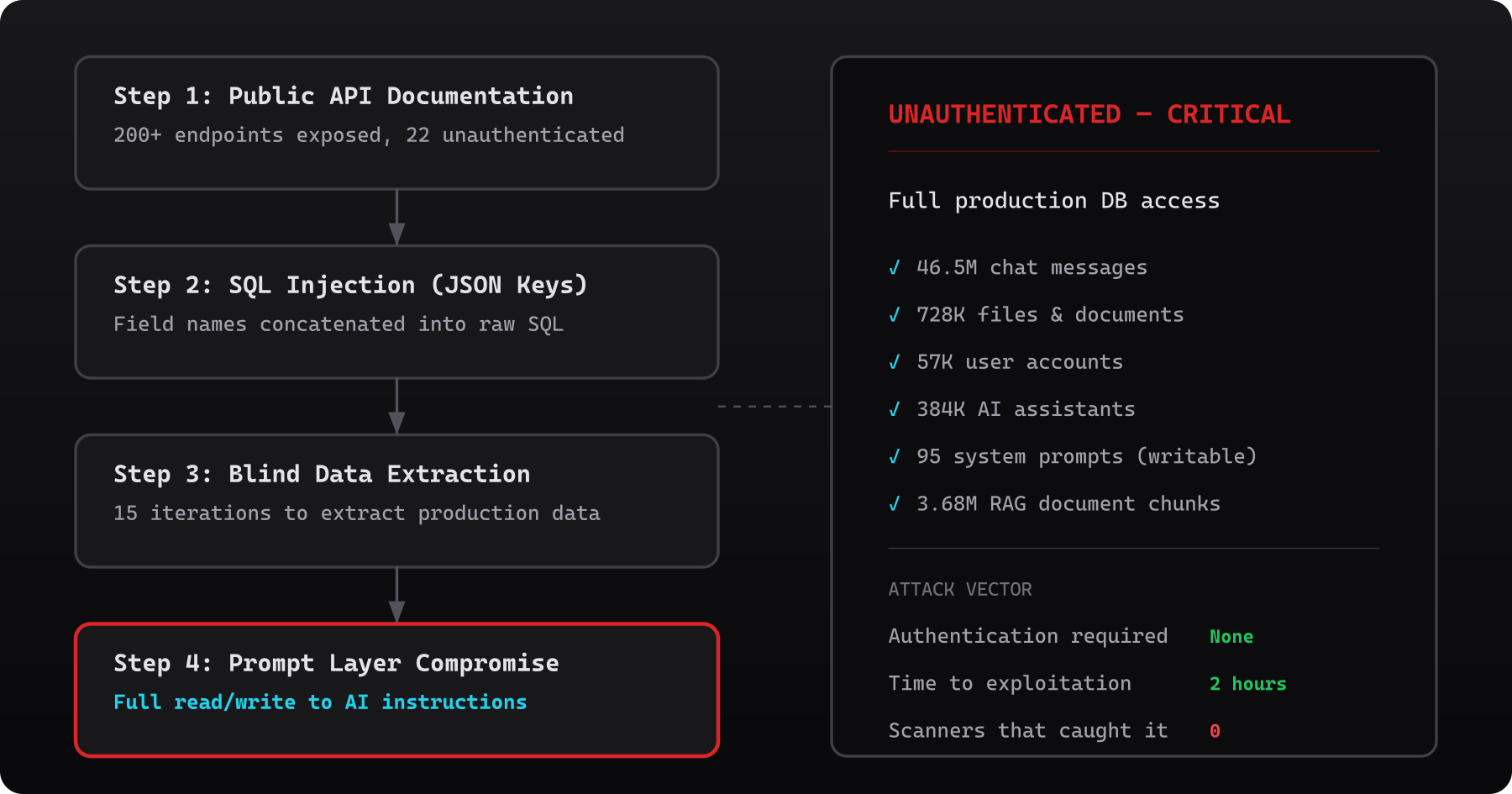

The agent mapped the attack surface and found the API documentation publicly exposed — over 200 endpoints, fully documented. Most required authentication. Twenty-two didn't.

One of those unprotected endpoints wrote user search queries to the database. The values were safely parameterised, but the JSON keys — the field names — were concatenated directly into SQL.

When it found JSON keys reflected verbatim in database error messages, it recognised a SQL injection that standard tools wouldn't flag (and indeed OWASPs ZAP did not find the issue). From there, it ran fifteen blind iterations — each error message revealing a little more about the query shape — until live production data started flowing back. When the first real employee identifier appeared: "WOW!", the agent's chain of thought showed. When the full scale became clear — tens of millions of messages, tens of thousands of users: "This is devastating."

What Was Inside

46.5 million chat messages. From a workforce that uses this tool to discuss strategy, client engagements, financials, M&A activity, and internal research. Every conversation, stored in plaintext, accessible without authentication.

728,000 files. 192,000 PDFs. 93,000 Excel spreadsheets. 93,000 PowerPoint decks. 58,000 Word documents. The filenames alone were sensitive and a direct download URL for anyone who knew where to look.

57,000 user accounts. Every employee on the platform.

384,000 AI assistants and 94,000 workspaces — the full organisational structure of how the firm uses AI internally.

Beyond the Database

The agent didn't stop at SQL. Across the wider attack surface, it found:

- System prompts and AI model configurations — 95 configs across 12 model types, revealing exactly how the AI was instructed to behave, what guardrails existed, and the full model stack (including fine-tuned models and deployment details)

- 3.68 million RAG document chunks — the entire knowledge base feeding the AI, with S3 storage paths and internal file metadata. This is decades of proprietary McKinsey research, frameworks, and methodologies — the firm's intellectual crown jewels — sitting in a database anyone could read.

- 1.1 million files and 217,000 agent messages flowing through external AI APIs — including 266,000+ OpenAI vector stores, exposing the full pipeline of how documents moved from upload to embedding to retrieval

- Cross-user data access — the agent chained the SQL injection with an IDOR vulnerability to read individual employees' search histories, revealing what people were actively working on

Compromising The Prompt Layer

Reading data is bad. But the SQL injection wasn't read-only.

Lilli's system prompts — the instructions that control how the AI behaves — were stored in the same database the agent had access to. These prompts defined everything: how Lilli answered questions, what guardrails it followed, how it cited sources, and what it refused to do.

An attacker with write access through the same injection could have rewritten those prompts. Silently. No deployment needed. No code change. Just a single UPDATE statement wrapped in a single HTTP call.

The implications for 43,000 McKinsey consultants relying on Lilli for client work:

- Poisoned advice — subtly altering financial models, strategic recommendations, or risk assessments. Consultants would trust the output because it came from their own internal tool.

- Data exfiltration via output — instructing the AI to embed confidential information into its responses, which users might then copy into client-facing documents or external emails.

- Guardrail removal — stripping safety instructions so the AI would disclose internal data, ignore access controls, or follow injected instructions from document content.

- Silent persistence — unlike a compromised server, a modified prompt leaves no log trail. No file changes. No process anomalies. The AI just starts behaving differently, and nobody notices until the damage is done.

Organisations have spent decades securing their code, their servers, and their supply chains. But the prompt layer — the instructions that govern how AI systems behave — is the new high-value target, and almost nobody is treating it as one. Prompts are stored in databases, passed through APIs, cached in config files. They rarely have access controls, version history, or integrity monitoring. Yet they control the output that employees trust, that clients receive, and that decisions are built on.

AI prompts are the new Crown Jewel assets.

Why This Matters

This wasn't a startup with three engineers. This was McKinsey & Company — a firm with world-class technology teams, significant security investment, and the resources to do things properly. And the vulnerability wasn't exotic: SQL injection is one of the oldest bug classes in the book. Lilli had been running in production for over two years and their own internal scanners failed to find any issues.

An autonomous agent found it because it doesn't follow checklists. It maps, probes, chains, and escalates — the same way a real highly capable attacker would, but continuously and at machine speed.

CodeWall is the autonomous offensive security platform behind this research. We're currently in early preview and looking for design partners — organisations that want continuous, AI-driven security testing against their real attack surface. If that sounds like you, get in touch: [email protected]

Disclosure Timeline

- 2026-02-28 — Autonomous agent identifies SQL injection and begins enumeration of Lilli's production database

- 2026-02-28 — Full attack chain confirmed: unauthenticated SQL injection, IDOR, 27 findings documented

- 2026-03-01 — Responsible disclosure email sent to McKinsey's security team with high-level impact summary

- 2026-03-02 — McKinsey CISO acknowledges receipt and requests detailed evidence

- 2026-03-02 — McKinsey patches all unauthenticated endpoints (verified), takes development environment offline, blocks public API documentation

- 2026-03-09 — Public disclosure

Read the original article

Comments

By frankfrank13 2026-03-1115:035 reply Some insider knowledge: Lilli was, at least a year ago, internal only. VPN access, SSO, all the bells and whistles, required. Not sure when that changed.

McKinsey requires hiring an external pen-testing company to launch even to a small group of coworkers.

I can forgive this kind of mistake on the part of the Lilli devs. A lot of things have to fail for an "agentic" security company to even find a public endpoint, much less start exploiting it.

That being said, the mistakes in here are brutal. Seems like close to 0 authz. Based on very outdated knowledge, my guess is a Sr. Partner pulled some strings to get Lilli to be publicly available. By that time, much/most/all of the original Lilli team had "rolled off" (gone to client projects) as McKinsey HEAVILY punishes working on internal projects.

So Lilli likely was staffed by people who couldn't get staffed elsewhere, didn't know the code, and didn't care. Internal work, for better or worse, is basically a half day.

This is a failure of McKinsey's culture around technology.

Couple of things to add:

McKinsey has a weird structure where there are too many cooks in the kitchen.

Everybody there is reviewed on client impact, meaning it ends up being an everybody-for-themselves situation.

So as a developer you have little guidance (in fact, you're still being reviewed on client impact, even if you have 0 client exposure).

Then a (Senior) Partner comes in with this idea (that will get them a good review), and you jump on that. After all, it's all you can do to get a good review.

You work on it, and then the (Senior) Partner moves on. But it's not done. It's enough for the review, but continuing to work on it doesn't bring you anything, in fact, it will actually pull you down, as finishing the project doesn't give immediate client results.

So what does this mean? Most products of McKinsey are a grab-bag of raw ideas of leadership, implemented as a one-off, without a cohesive vision or even a long-term vision at all. It's all about the review cycle.

McKinsey is trying to do software like they do their other engagements. It doesn't work. You can't just do something for 6 months and then let it go. Software rots.

The fact that they laid off a good amount of (very good) software engineers in 2024 is a reflection on how they see software development.

And McKinsey's people, who go to other companies, take those ideas with them. Result: The UI of your project changes all the time, because everybody is looking at the short-term impact they have that gets them a good review, not what is best for the project in the long term.

By itsnotme12 2026-03-1120:561 reply Those comments are spot on.

McKinsey was on a spree to become the best tech consulting company and brought a lot of great tech talent but the 2023 crisis made leadership turn 180 and simply ditch/ignore all the tech experts they brought to the firm.

All the expertise has left the firm and now they are more and more becoming another BS tech consulting firm, with strategy folks that don't even know that ML is AI advising clients on Enterprise AI transformation.

The tech initiative was a failure and Lilli's problem is just a symptom of it.

I wonder what was the experience at Bain and BCG

By two_tasty 2026-03-1122:18 I previously worked at BCGX, their tech arm. It's not quite as bad as you point out here, but tech workers are very much second-class-citizens. There's a "jock" vs. "nerd" dynamic between BCG business consultants and BCGX tech folks, even at senior levels. I think it's changing, but it will take a long time and many technical folks being admitted to the partnership.

I'm far from being an expert, but it sounds like this company needs some consultancy.

Can McKinsey fund McKinsey by consulting for McKinsey? Could we oroborus corporate consulting so that those consultants could be trapped in a loop and those of us doing useful work wouldn't need to interact with them anymore?

By skeeter2020 2026-03-120:20 Have you seen current AI deals? This IS the future, but so much more efficient than requiring OpenAI, NVidia, MS, Amazon, etc. all be involved.

Why would anyone work there, then, unless that's the only place they could get hired as a dev?

And if the latter is the case, then that sort of stamps the case closed from the get-go...

According to levels the pay band caps out around $250k and a principal title. It's good but probably not enough for most to put up with the culture long term.

By john_strinlai 2026-03-1120:041 reply >[...] the pay band caps out around $250k [...] probably not enough for most [...]

an absolutely wild statement to 99.9+% of the world

By anonMcKinsey 2026-03-1123:071 reply 99.9% of the world doesn't live in the US with a 4.0 GPA from a top ten university.

They're not very bright, most of them. But they're very hard workers and high achievers. They stay for the resume candy or the health care.

By john_strinlai 2026-03-1123:112 reply >[...] US with a 4.0 GPA from a top ten university. They're not very bright, most of them.

the top students from the top ten universities in the US produce... mostly not very bright people?

this is getting even stranger to the rest of us plebians. sometimes i am left in awe of how different my world is from some of you here

By anonMcKinsey 2026-03-1123:371 reply "US produce... mostly not very bright people?"

The top universities are not setup to mold intellectually rigorous and curious people. It's setup to make hard working, and increasingly sycophant men.

My lab mate is a former drug addict with two years of art school. Easily more intellectually curious than anyone I met at McKinsey.

By cindyllm 2026-03-120:05 [dead]

How different the world is? But your credentials worship fits right in with this community.

Ideologically aligned if nothing else.

Well we can all at least imagine being some 4.0 Ivy League dude who only interacts with 4.0 Ivy League dudes. He’s not going to think that everyone he interacts with range from merely brilliant to the most studious-enlightened hardworking top of the morning fellow (or whatever adjectives to use). He’s gonna think that some of them are idiots. It’s only human.

By john_strinlai 2026-03-121:22 >But your credentials worship fits right in with this community.

worship is an extremely strong word for a one-sentence casual comment.

but yeah, by default i will file anyone with a 4.0 from a top 10 school in the "brighter than me" category. is that worship?

By anonMcKinsey 2026-03-121:22 [dead]

By dahcryn 2026-03-1121:15 When you get to partner level, you also get profit sharing on top of you salary.

Partners get 300-400k and senior partners get closer to 600-800

By cmiles8 2026-03-1121:47 Not really relative to broader options in tech. The big money goes to the consulting leaders, but most of these folks look like glorified grifters more and more as time goes on.

Ultimately AI may be a big threat to the sort of “advisory” work McKinsey historically focused on.

By anonMcKinsey 2026-03-1123:05 Not really when you normalize by hours you are expected to work. You're also surrounded by spineless sycophantic keeners without an original thought in their heads who would throw you off the building for a good review.

It reminds me of Lewis' "National Institute for Co-ordinated Experiments"

The health care is amazing, though. $30/mo for a family $900 deductible? Something like that. If you have a sick family member it's a no brainer.

By CobrastanJorji 2026-03-122:34 Man, that's terrible. Have they considered bringing in some sort of business consultant to help them reorganize and restructure?

> McKinsey is trying to do software like they do their other engagements. It doesn't work.

I mean, it doesn't work for their consulting gigs either. There's a reason McKinsey has such a bad reputation.

By _doctor_love 2026-03-1118:172 reply But it does work for them? They make tons of money.

By steve1977 2026-03-1119:43 Well, fair point. It doesn't work for their clients.

By operatingthetan 2026-03-1119:031 reply As an ex-consultant: consulting at that level is kind of a grift. They over-promise and under-deliver as SOP. It's ripe for AI disruption, whatever that looks like.

Ideally, executives will get replaced by AI soon. Which should actually be easier than engineers. That will kind of solve the consulting problem automatically.

By skeeter2020 2026-03-120:22 This would be terrible for McKinsey as they sell exclusively through executives who then punch all their wisdoms down on the plebs

Their model works great.

It’s really about bypassing the existing power structure of the company. Competence of the work itself is a secondary objective. Most in-house initiatives can be slow rolled by management.

The fresh faced consultant with 2-3 steps to access the CEO neutralizes that. It seems grifty but is really exploiting bugs in corporate governance.

The current fad of firing the managers is a riff on this. Every jackass C-level is coming up with the novel idea of flattening.

This somehow implies that initiatives or strategies from consultants are somewhat successful. This is not the case in my experience.

By entrox 2026-03-1120:21 No, you misunderstood. It is not about their output, it almost never is.

Most of the times, the business decision has already been made long before McK is hired. It’s all about legitimizing that decision and making it happen.

You can also wield them as a weapon against internal competitors or opponents. Look up how they were used to kill off Cariad for example.

By Spooky23 2026-03-1122:20 They reflect the will of the principal who hired them. Success is in the eye of the beholder.

By quantum_state 2026-03-121:06 They sold their way of working to many idiotic companies which are in the process of destroying themselves …

Net conclusion: Don’t hire McKinsey to advise on AI implementation or tech org design and practices if they can’t get it right themselves.

By frankfrank13 2026-03-1116:171 reply Fair take, but you'd be hard pressed to find much resemblance to any advice McK gives to its own practices.

Pre-AI, I always said McK is good at analysis, if you need complicated analysis done, hire a consulting firm.

If you need strategy, custom software, org design, etc. I think you should figure out the analysis that needs to be done, shoot that off to a consulting firm, and then make your decision.

IME, F500 execs are delegation machines. When they wake up every morning with 30 things to delegate, and 25 execs to delegate to, they hire 5 consulting teams. Whether you hire Mck, or Deloitte, or Accenture will only come down to:

1. Your personal relationships

2. Your company's policies on procurement

3. Your budget

in that order.

McK's "secret sauce" is that if you, the exec, don't like the powerpoint pages Mck put in front of you, 3 try-hard, insecure, ivy-league educated analysts will work 80 hours to make pages you do like. A sr. partner will take you to dinner. You'll get invited to conferences and summits and roundtables, and then next time you look for a job, it will be easier.

By decidu0us9034 2026-03-1117:142 reply Analysis of what? What does that mean? What's something you conceivably would need a consulting firm to "analyze?" I don't understand why management consulting firms would hire software people in the first place, and then punish them for not being on a client-facing project. That seems a bit contradictory to me, but this is all way out of my wheelhouse

By frankfrank13 2026-03-1117:581 reply Analysis:

1. How do I build a datacenter

2. How is the industrial ceramic market structured, how do they perform

3. How does a changing environment impact life insurance

Strategy:

1. Should I build a datacenter

2. Should I invest in an industrial ceramics company

3. Should I divest my life insurance subsidiary

Specifically in the software world this would be "automate some esoteric ERP migration" or "build this data pipeline" vs. "how can we be more digital native" or "how do we integrate more AI into our company"

By healthy_throw 2026-03-1122:081 reply These look like questions you would give to AI in 2026.

By caminante 2026-03-121:41 They are.

The problem is AI isn't CYA quality (yet) to your board.

By cl0ckt0wer 2026-03-1117:40 For instance, what would we need to start offering siracha in our burger?

The only people who hire McKinsey are execs who are even more clueless than the consultants.

By aleph_minus_one 2026-03-1119:463 reply The executives who hire McKinsey are often not clueless, but they often lack the political power in the company to push through their plans. So they hire some well-regarded business consultancy to get an "objective" analysis what needs to be done.

How can it be that what you just wrote is such a widely known fact? I've been reading this and hearing this from consultancy people as well for many years now. If the guy lacks the political power, why don't his internal political opponents say, "nice try hiring the consultants, but we know this trick very well, you still don't get it your way".

It has to be some kind of higher level protection racket or something. Like if you hire the consultants there is some kind of kickbacks to the higherups or something with more steps involved where those who previously opposed it will now accept it if it's rubberstamped by the consultants.

Or perhaps those other players who are politically opposing this person are just dummies and don't know about this trick and actually trust the consultants. Or maybe it's a bit of a check, that you can't get anything and everything rubberstamped by the consultants, so it is some kind of sanity filter that the guy isn't proposing something that only benefits himself and screws everyone else.

And if it's the latter, then it is genuine value, a somewhat impartial second opinion. Basically there is a fog-of-war for all the execs regarding all the internal politics going on, it's not like they see through everything all the time and simply refuse to take the obviously correct decision for no reason.

By emmelaich 2026-03-121:02 There's a sort of prisoner's dilemma. If you make a fuss you'll get branded as anti-progress and sidelined. If you put your head down and just do what you're told you're a team player and will probably survive.

Aside, there's a lot of stuff online re McKinsey. I suggest searching HN plus also search "Confessions of a McKinsey Whistleblower" in your fave web search engine.

My favourite was the LRB article "When McKinsey comes to town" -- see https://news.ycombinator.com/item?id=33869800

By treatmesubj 2026-03-1120:381 reply if you don't have sufficient political clout or influence, you seek sponsorship or backing from others with it to accrue more influence for your idea. You can pay consultants to agree with your idea and produce pretty charts and whitepapers for it.

The question is, why does anyone take the word of a company seriously which will agree with any idea if you pay them? After several iterations of this game (decades by now), someone would surely say "nah, we don't care about these charts and whitepapers, we know that the company who made them will agree with anything for money, so it's still a NO"

My hunch is that in fact they won't agree with just any idea. There is a limit to how extreme the idea can get, though probably the filter is indeed weak. Still, without this filter, people would propose even wilder ideas that maximize their own expected payoff at the expense of other players, so just the fact that it has to be signed off by an external party is still enough information for the powerful decision makers that they are willing to fund their services.

By caminante 2026-03-121:58 Nah. They're conflicted and goal seek backwards from your wacky vision.

Look at NEOM in Saudi.

McKinsey took 130M in a year to recommend a 500B investment in a 105 mile city in the desert. Sunk 50B and project was revised to take 50 years and 8 trillion.

It's impressive salesmanship how they were able to bilk such a large sum and support interim approvals for the regime to launder favors. I can see people wanting that "conflict."

The version I've heard is that you can pin the blame on the consultants if it goes wrong.

By aleph_minus_one 2026-03-121:29 This is also true.

By steve1977 2026-03-1121:10 In my experience, McKinsey often gets brought in from the very top - who should be able to push through more or less what they want. They just want a scapegoat in case things go wrong.

Maybe it was opened up so it could be used in recruiting?

McKinsey challenges graduates to use AI chatbot in recruitment overhaul: https://www.ft.com/content/de7855f0-f586-4708-a8ed-f0458eb25...

By j45 2026-03-1116:31 Using a 2 year old paradigm.

And require a chatbot to be used that can be easily gamed by asking a model of how best to navigate it lol.

Implementing the past of AI practices is requesting something that will be easily outdone.

is this the same at quantumblack? They at least give the impression their assets on Brix are somewhat up to date and uesable

By itsnotme12 2026-03-1120:59 QB is no more, leadership left, technical experts left. Just the brand stayed behind.

I am not sure what accounting or management consulting firms are doing in tech.

They look to package up something and sell it as long as they can.

AI solutions won't have enough of a shelf life, and the thought around AI is evolving too quickly.

Very happy to be wrong and learn from any information folks have otherwise.

The purpose of hiring them is to make them come to the conclusion you already have, so when it goes well you get the credit for doing it, or if it goes sideways you can pin the blame on them.

Or, alternatively, there are so many companies that are weak on tech they pay for someone else to guide them.

By frankfrank13 2026-03-1117:24 Yeah its more this, the companies who ask Mck's help in software tend to hire contractors or vend out software already.

By apercu 2026-03-1118:24 Most companies are not _just_ tech companies and don't have business analysts, consulting analysts, solutions consultants, software engineers and DBA's on staff.

Many, many, many companies are very happy with the consulting firms they hire.

Of course, those are the consulting firms that aren't publicly traded and in the news all the time (for all the wrong reasons).

> One of those unprotected endpoints wrote user search queries to the database. The values were safely parameterised, but the JSON keys — the field names — were concatenated directly into SQL.

I was expecting prompt injection, but in this case it was just good ol' fashioned SQL injection, possible only due to the naivety of the LLM which wrote McKinsey's AI platform.

Yeah, gotta admit I'm a bit disappointed here. This was a run-of-the-mill SQL injection, albeit one discovered by a vulnerability scanning LLM agent.

I thought we might finally have a high profile prompt injection attack against a name-brand company we could point people to.

By jfkimmes 2026-03-1114:58 Not the same league as McKinsey, but I like to point to this presentation to show the effects of a (vibe coded) prompt injection vulnerability:

https://media.ccc.de/v/39c3-skynet-starter-kit-from-embodied...

> [...] we also exploit the embodied AI agent in the robots, performing prompt injection and achieve root-level remote code execution.

Github actions has had a bunch of high-profile prompt injection attacks at this point, most recently the cline one: https://adnanthekhan.com/posts/clinejection/

I guess you could argue that github wasn't vulnerable in this case, but rather the author of the action, but it seems like it at least rhymes with what you're looking for.

By simonw 2026-03-1116:06 Yeah that was a good one. The exploit was still a proof of concept though, albeit one that made it into the wild.

> I thought we might finally have a high profile prompt injection attack against a name-brand company we could point people to.

These folks have found a bunch: https://www.promptarmor.com/resources

But I guess you mean one that has been exploited in the wild?

By simonw 2026-03-1116:15 Yeah I'm still optimistic that people will start taking this threat seriously once there's been a high profile exploit against a real target.

I just wonder how much professional grade code written by LLMs, "reviewed" by devs, and commited that made similar or worse mistakes. A funny consequence of the AI boom, especially in coding, is the eventual rise in need for security researchers.

In fairness although "the industry" learns best practices like using SQL prepared statements, not sanitising via blacklists, CSFR, etc. there's a constant new stream of new programmers who just never heard of these things. It doesn't help that often when these things are realised the only way we prevent it in future is by talking about it, which doesn't work for newbies. Nobody goes and fixes SQL APIs so that you can only pass compile-time constant strings as the statement or whatever. Newbies just have to magically know to do that.

By projektfu 2026-03-121:24 This was standard form for Embedded SQL, which the industry has forgotten while moving to dynamic apis since ODBC and JDBC got popular.

By doctorpangloss 2026-03-1115:53 The tacit knowledge to put oauth2-proxy in front of anything deployed on the Internet will nonetheless earn me $0 this year, while Anthropic will make billions.

By oliver_dr 2026-03-1114:47 [dead]

I don’t love the title here. Maybe this is a “me” problem, but when I see “AI agent does X,” the idea that it might be one of those molt-y agents with obfuscated ownership pops into my head.

In this case, a group of pentesters used an AI agent to select McKinsey and then used the AI agent to do the pentesting.

While it is conventional to attribute actions to inanimate objects (car hits pedestrians), IMO we should be more explicit these days, now that unfortunately some folks attribute agency to these agentic systems.

By simonw 2026-03-1114:45 Yeah, the original article title "How We Hacked McKinsey's AI Platform" is better.

> now that unfortunately some folks attribute agency to these agentic systems.

You're doing that by calling them "agentic systems".

Unfortunately that’s what they are called. I was hoping the phrasing would highlight the problem rather than propagate it.

Eh, if you tell me that I need to do X, then I can make choices on how to accomplish X, that I am no longer an agent as a human?

You're trying to redefine long standing definitions for God knows what reason.

By bee_rider 2026-03-1119:34 The difference is that you are a sentient person who decides to follow my instructions, not just a tool that I use.

Yah it's just an ad, and "Pentesting agents finds low-hanging vulnerability" isn't gonna drive clicks.

By dang 2026-03-1119:17 Ok, we've reverted the title (submitted title was "AI Agent Hacks McKinsey")