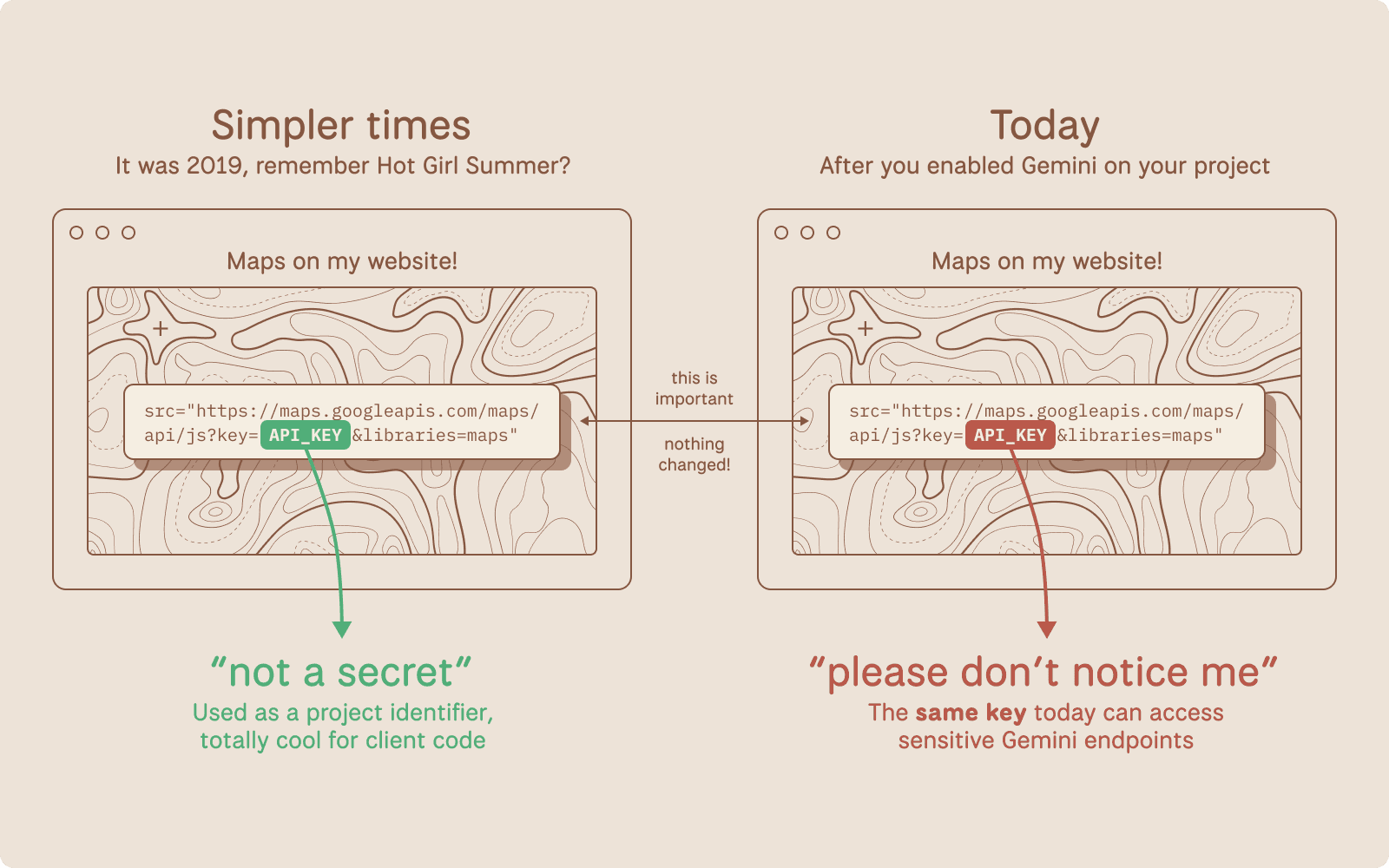

tl;dr Google spent over a decade telling developers that Google API keys (like those used in Maps, Firebase, etc.) are not secrets. But that's no longer true: Gemini accepts the same keys to access your private data. We scanned millions of websites and found nearly 3,000 Google API keys, originally deployed for public services like Google Maps, that now also authenticate to Gemini even though they were never intended for it. With a valid key, an attacker can access uploaded files and cached data, and charge LLM usage to your account. Even Google itself had old public API keys, which it considered non-sensitive, that we could use to access Google's internal Gemini.

Google Cloud uses a single API key format (AIza...) for two fundamentally different purposes: public identification and sensitive authentication.

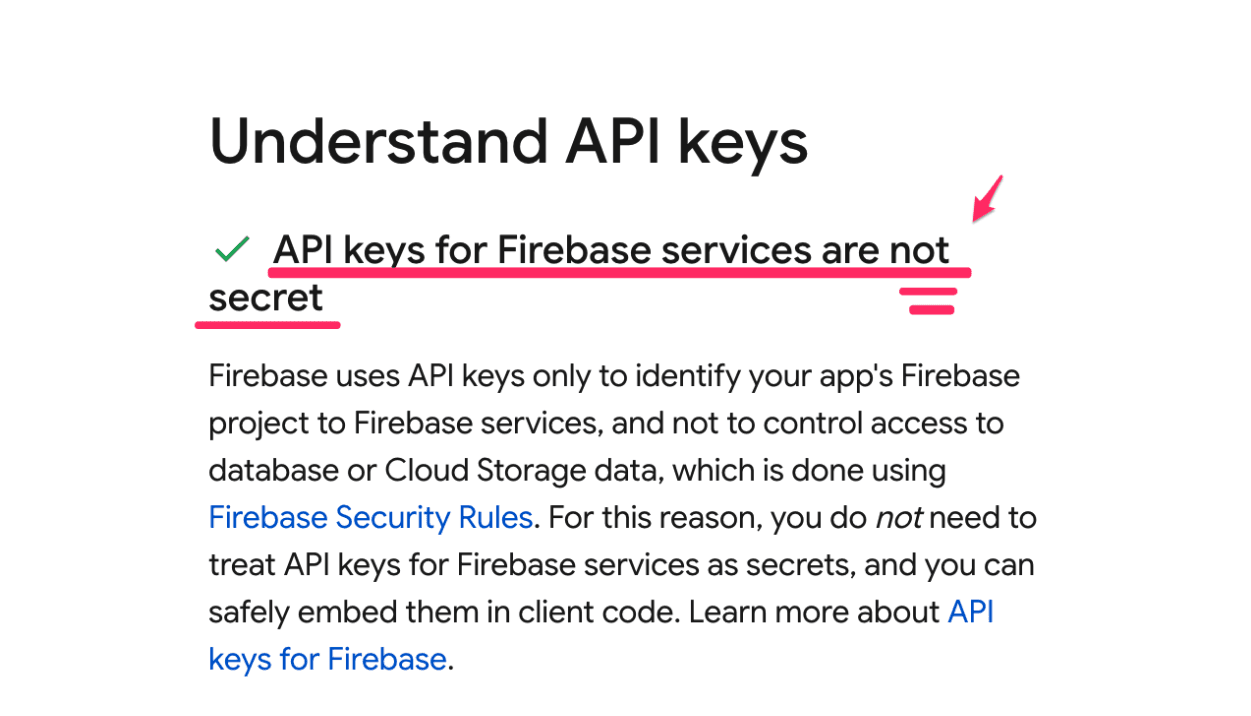

For years, Google has explicitly told developers that API keys are safe to embed in client-side code. Firebase's own security checklist states that API keys are not secrets.

Note: these are distinct from the Service Account JSON keys used to authenticate to GCP.

Source: https://firebase.google.com/support/guides/security-checklist#api-keys-not-secret

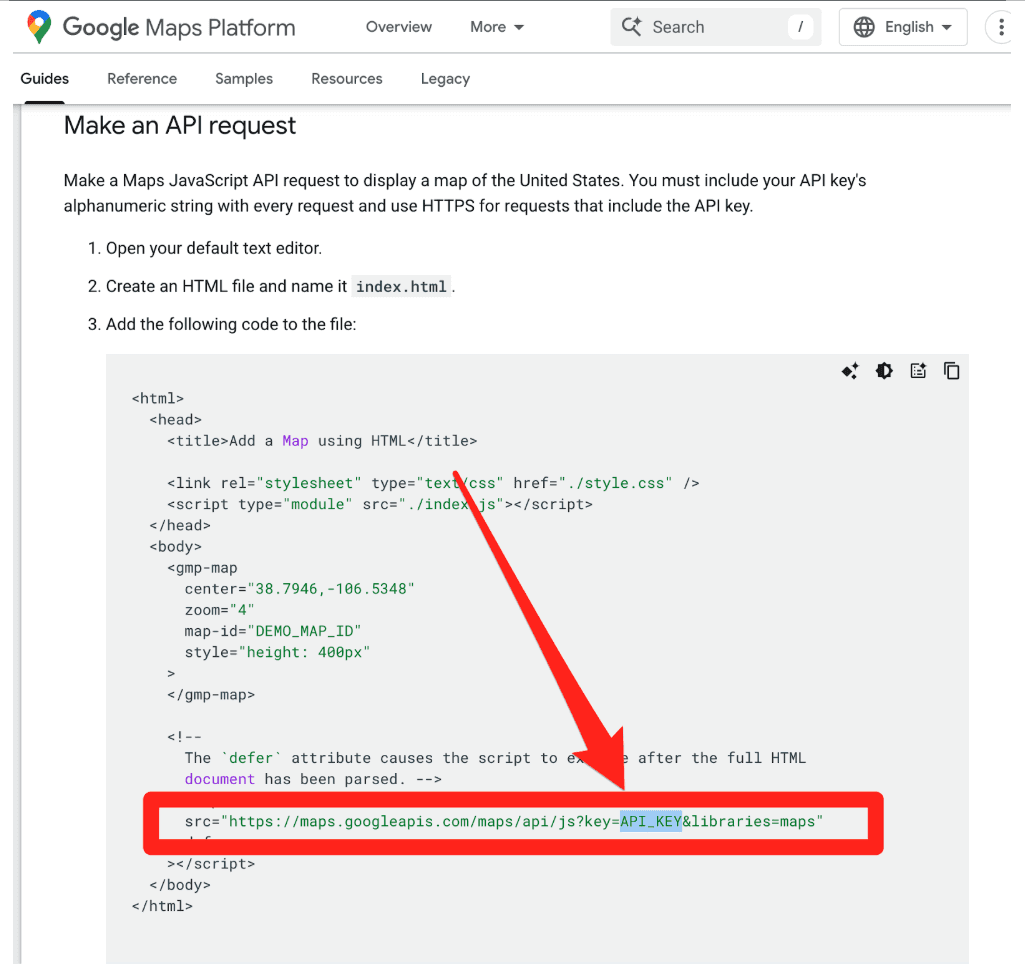

Google's Maps JavaScript documentation instructs developers to paste their key directly into HTML.

This makes sense. These keys were designed as project identifiers for billing, and can be further restricted with (bypassable) controls like HTTP referer allow-listing. They were not designed as authentication credentials.

Then Gemini arrived.

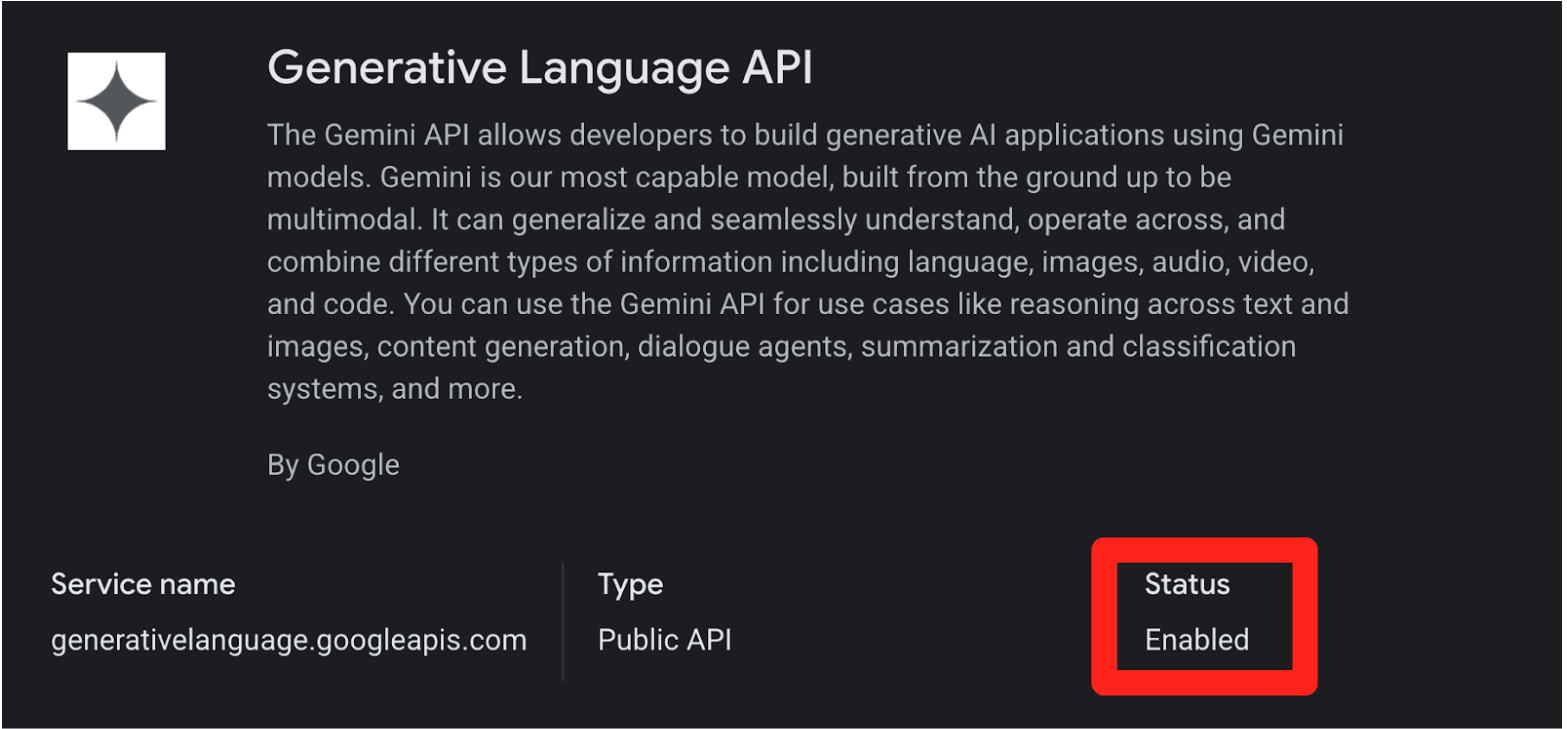

When you enable the Gemini API (Generative Language API) on a Google Cloud project, existing API keys in that project (including the ones sitting in public JavaScript on your website) can silently gain access to sensitive Gemini endpoints. No warning. No confirmation dialog. No email notification.

This creates two distinct problems:

Retroactive Privilege Expansion. You created a Maps key three years ago and embedded it in your website's source code, exactly as Google instructed. Last month, a developer on your team enabled the Gemini API for an internal prototype. Your public Maps key is now a Gemini credential. Anyone who scrapes it can access your uploaded files and cached content, and rack up your AI bill. Nobody told you.

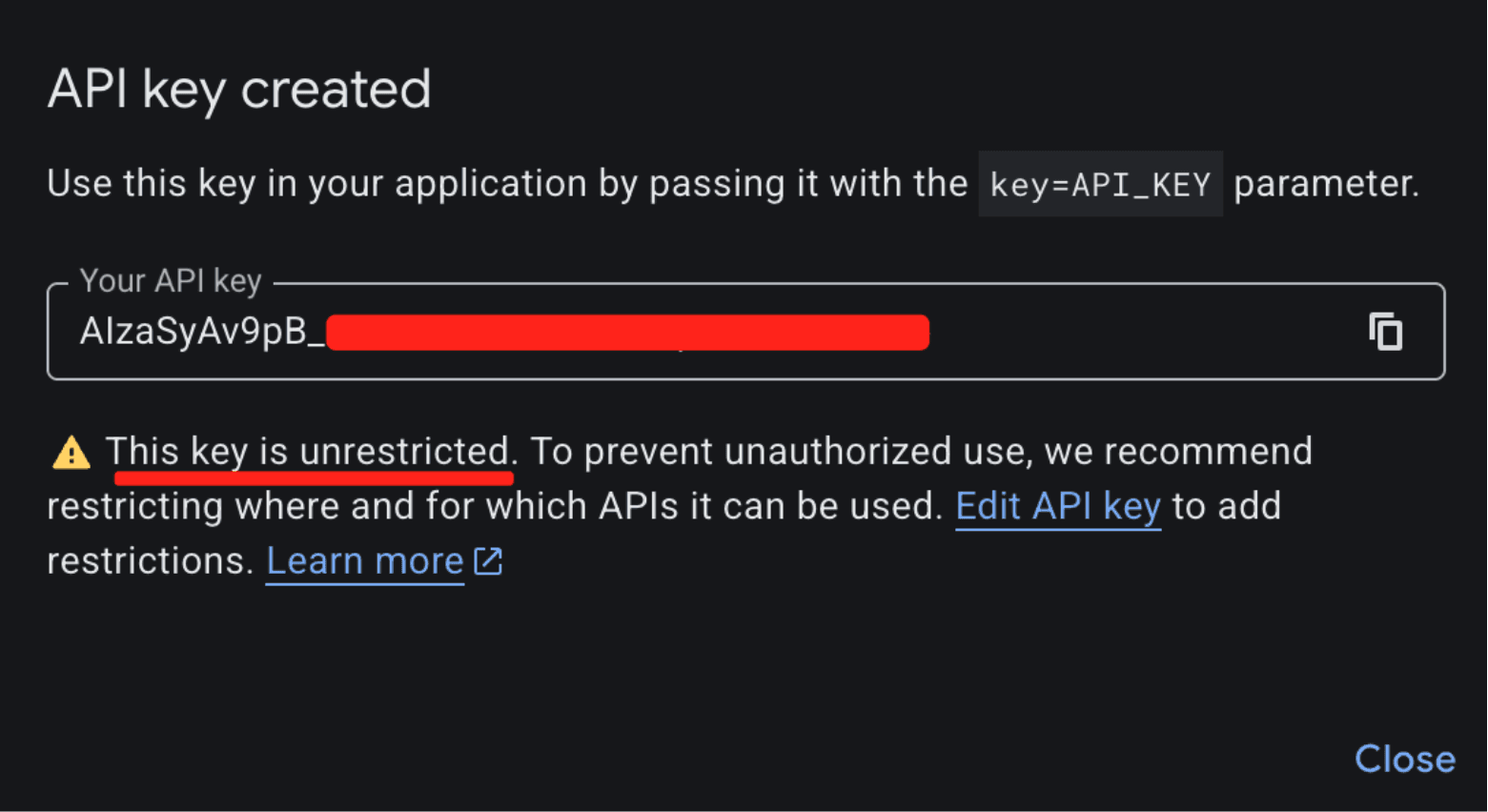

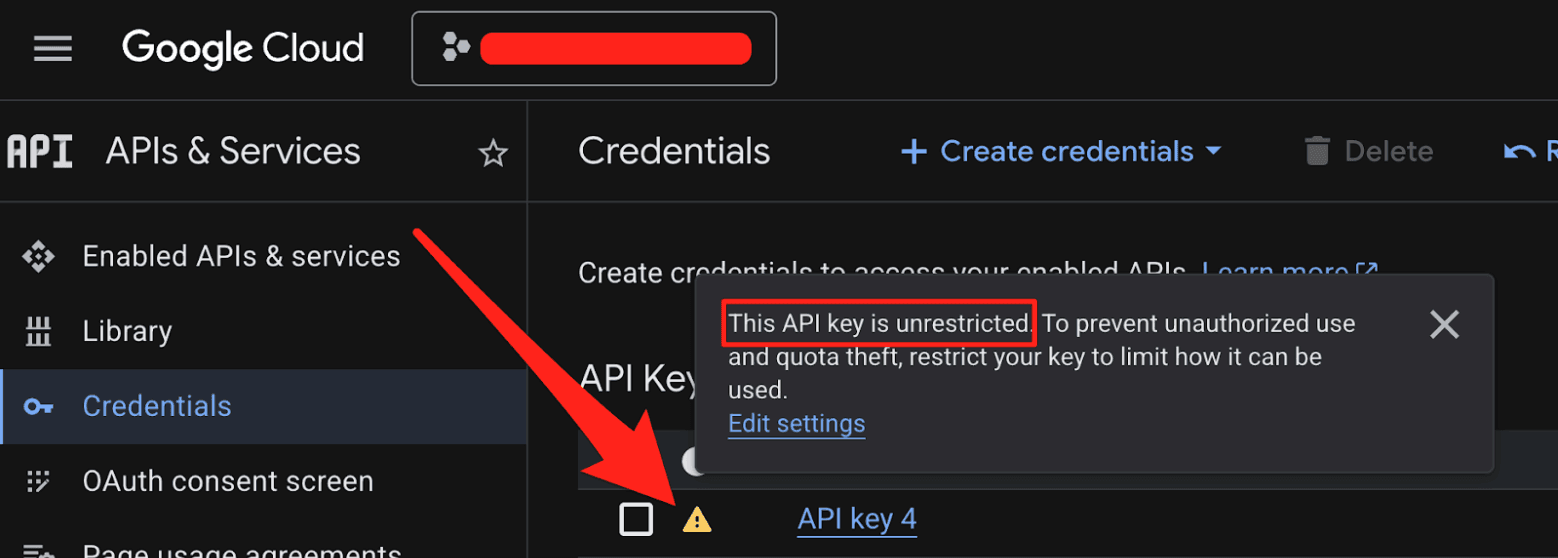

Insecure Defaults. When you create a new API key in Google Cloud, it defaults to "Unrestricted," meaning it's immediately valid for every enabled API in the project, including Gemini. The UI shows a warning about "unauthorized use," but the architectural default is wide open.

The result: thousands of API keys that were deployed as benign billing tokens are now live Gemini credentials sitting on the public internet.

What makes this a privilege escalation rather than a misconfiguration is the sequence of events.

A developer creates an API key and embeds it in a website for Maps. (At that point, the key is harmless.)

The Gemini API gets enabled on the same project. (Now that same key can access sensitive Gemini endpoints.)

The developer is never warned that the key's privileges changed underneath them. (The key went from public identifier to secret credential.)
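The three steps above can be modeled in a few lines to show why nothing about the key itself has to change. This is a minimal sketch, not Google's implementation: it assumes an "Unrestricted" key is accepted by every API enabled on its project and a restricted key only by the services it lists. The `ApiKey` and `Project` classes are hypothetical, and the service names are used illustratively.

```python
from dataclasses import dataclass, field
from typing import Optional

GEMINI = "generativelanguage.googleapis.com"
MAPS = "maps-backend.googleapis.com"  # illustrative service name for the Maps JavaScript API


@dataclass
class ApiKey:
    name: str
    # None models an "Unrestricted" key; a set models explicit API restrictions.
    allowed_services: Optional[set[str]] = None


@dataclass
class Project:
    enabled_services: set[str] = field(default_factory=set)

    def key_can_call(self, key: ApiKey, service: str) -> bool:
        """Hypothetical model: the API must be enabled on the project, and the
        key must either be unrestricted or explicitly list the service."""
        if service not in self.enabled_services:
            return False
        return key.allowed_services is None or service in key.allowed_services


# Step 1: a key is created (unrestricted by default) and embedded for Maps.
project = Project(enabled_services={MAPS})
maps_key = ApiKey("website-maps-key")
print(project.key_can_call(maps_key, GEMINI))  # False: Gemini is not enabled yet

# Step 2: someone enables the Gemini API on the same project; the key is untouched.
project.enabled_services.add(GEMINI)
print(project.key_can_call(maps_key, GEMINI))  # True: the public key now reaches Gemini

# Restricting the key to the service it was meant for closes the gap.
maps_key.allowed_services = {MAPS}
print(project.key_can_call(maps_key, GEMINI))  # False
```

The point the model makes is that the key's reach is a function of project state, so a change made by someone else (enabling an API) silently changes what an already-published key can do.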

While users can restrict Google API keys (by API service and application), the vulnerability lies in the Insecure Default posture (CWE-1188) and Incorrect Privilege Assignment (CWE-269):

Implicit Trust Upgrade: Google retroactively applied sensitive privileges to existing keys that were already rightfully deployed in public environments (e.g., JavaScript bundles).

Lack of Key Separation: Secure API design requires distinct keys for each environment (Publishable vs. Secret Keys). By relying on a single key format for both, the system invites compromise and confusion.

Failure of Safe Defaults: The default state of a generated key via the GCP API panel permits access to the sensitive Gemini API (assuming it’s enabled). A user creating a key for a map widget is unknowingly generating a credential capable of administrative actions.
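To make the "Publishable vs. Secret Keys" point concrete, here is a minimal sketch of a scheme where the key type is encoded in the key itself, so a sensitive endpoint rejects a publishable key regardless of what gets enabled later. The `pk_`/`sk_` prefixes echo a convention some payment APIs use; `issue_key`, `authorize`, and the endpoint names are hypothetical, and this is not how Google API keys work.

```python
import secrets

# Hypothetical key scheme; Google API keys do not work this way.
PUBLISHABLE, SECRET = "pk_", "sk_"

# Hypothetical endpoint classification.
PUBLIC_ENDPOINTS = {"maps.tiles", "maps.geocode"}
SENSITIVE_ENDPOINTS = {"gemini.files", "gemini.cachedContents", "gemini.generate"}


def issue_key(kind: str) -> str:
    """Mint a random key whose prefix permanently records its type."""
    if kind not in (PUBLISHABLE, SECRET):
        raise ValueError(f"unknown key kind: {kind!r}")
    return kind + secrets.token_urlsafe(24)


def authorize(key: str, endpoint: str) -> bool:
    """Publishable keys only reach public endpoints; secret keys reach both.

    A real system would still look the key up in a credential store; the prefix
    only sets the ceiling on what the key can ever be used for."""
    if endpoint in PUBLIC_ENDPOINTS:
        return key.startswith((PUBLISHABLE, SECRET))
    if endpoint in SENSITIVE_ENDPOINTS:
        return key.startswith(SECRET)
    return False  # unknown endpoints are denied by default
```

With this split, adding a new sensitive endpoint can never upgrade a key that was already handed out as publishable, which is exactly the property the single `AIza...` format lacks.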

What an Attacker Can Do

The attack is trivial. An attacker visits your website, views the page source, and copies your AIza... key from the Maps embed. Then they run:

```shell
curl "https://generativelanguage.googleapis.com/v1beta/files?key=$API_KEY"
```

Instead of a 403 Forbidden, they get a 200 OK. From here, the attacker can:
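Defenders who want to run the same check against their own keys from a script can use this Python equivalent of the curl probe, built on the standard library only. It assumes, as described here, that a 200 from the `v1beta/files` endpoint means the key is accepted by the Gemini API and a 403 means it is not; any other status is reported as inconclusive. The function names are hypothetical. Only run it against keys you own.

```python
import urllib.error
import urllib.parse
import urllib.request

BASE = "https://generativelanguage.googleapis.com/v1beta/files"


def build_probe_url(api_key: str) -> str:
    """Same request as the curl example: GET .../v1beta/files?key=<API_KEY>."""
    return BASE + "?" + urllib.parse.urlencode({"key": api_key})


def classify(status: int) -> str:
    """Interpret the HTTP status the way the post does."""
    if status == 200:
        return "gemini-enabled"
    if status == 403:
        return "blocked"
    return "inconclusive"  # e.g. an invalid key or a transient server error


def probe(api_key: str, timeout: float = 10.0) -> str:
    """Send the request and classify the response (requires network access).

    Network failures (urllib.error.URLError) are left to propagate."""
    try:
        with urllib.request.urlopen(build_probe_url(api_key), timeout=timeout) as resp:
            return classify(resp.status)
    except urllib.error.HTTPError as err:
        # urlopen raises on 4xx/5xx; the status code is still available on the exception.
        return classify(err.code)
```

Usage: `probe(os.environ["API_KEY"])` returns `"gemini-enabled"`, `"blocked"`, or `"inconclusive"`.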

Access private data. The /files/ and /cachedContents/ endpoints can contain uploaded datasets, documents, and cached context. Anything the project owner stored through the Gemini API is accessible.

Run up your bill. Gemini API usage isn't free. Depending on the model and context window, a threat actor maxing out API calls could generate thousands of dollars in charges per day on a single victim account.

Exhaust your quotas. This could shut down your legitimate Gemini services entirely.

The attacker never touches your infrastructure. They just scrape a key from a public webpage.

2,863 Live Keys on the Public Internet

To understand the scale of this issue, we scanned the November 2025 Common Crawl dataset, a massive (~700 TiB) archive of publicly scraped webpages containing HTML, JavaScript, and CSS from across the internet. We identified 2,863 live Google API keys vulnerable to this privilege-escalation vector.
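The matching half of such a scan boils down to pattern-matching page source for the `AIza...` prefix. Below is a minimal sketch of that half only, assuming the commonly used detection heuristic of `AIza` followed by 35 characters from `[0-9A-Za-z_-]` (39 characters in total); that pattern is what many secret scanners use, not a format specification from Google. A match is only a candidate: whether it is live and Gemini-capable still has to be verified, for example with a request like the curl command shown earlier.

```python
import re

# Common heuristic for Google API keys: "AIza" followed by 35 URL-safe characters.
# The lookarounds stop matches inside a longer token.
GOOGLE_KEY_RE = re.compile(r"(?<![0-9A-Za-z_-])AIza[0-9A-Za-z_-]{35}(?![0-9A-Za-z_-])")


def extract_candidate_keys(page_source: str) -> list[str]:
    """Return unique candidate keys in order of first appearance."""
    seen: dict[str, None] = {}  # dict preserves insertion order, acting as an ordered set
    for match in GOOGLE_KEY_RE.finditer(page_source):
        seen.setdefault(match.group(0), None)
    return list(seen)
```

Applied to a typical Maps embed such as `<script src="https://maps.googleapis.com/maps/api/js?key=AIza...&callback=initMap">`, it returns the key once, even if the page repeats it.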

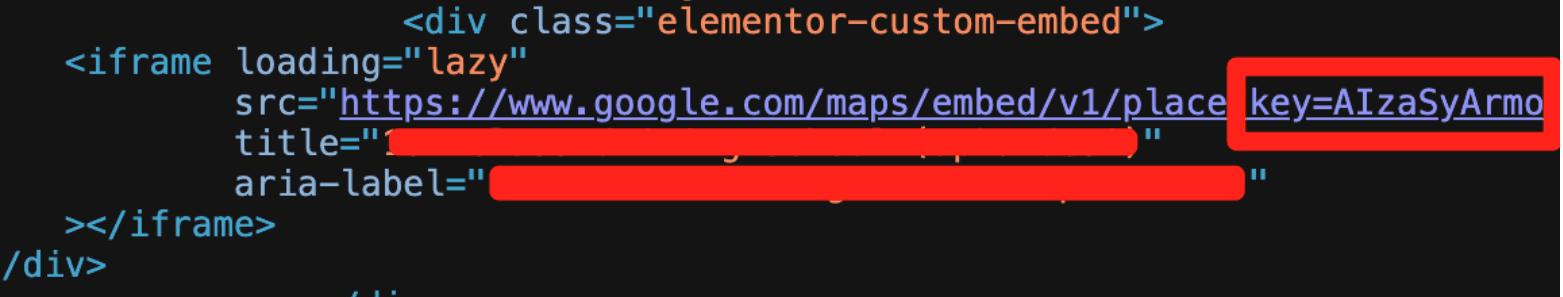

Example Google API key in front-end source code used for Google Maps, but also can access Gemini

These aren't just hobbyist side projects. The victims included major financial institutions, security companies, global recruiting firms, and, notably, Google itself. If the vendor's own engineering teams can't avoid this trap, expecting every developer to navigate it correctly is unrealistic.

Proof of Concept: Google's Own Keys

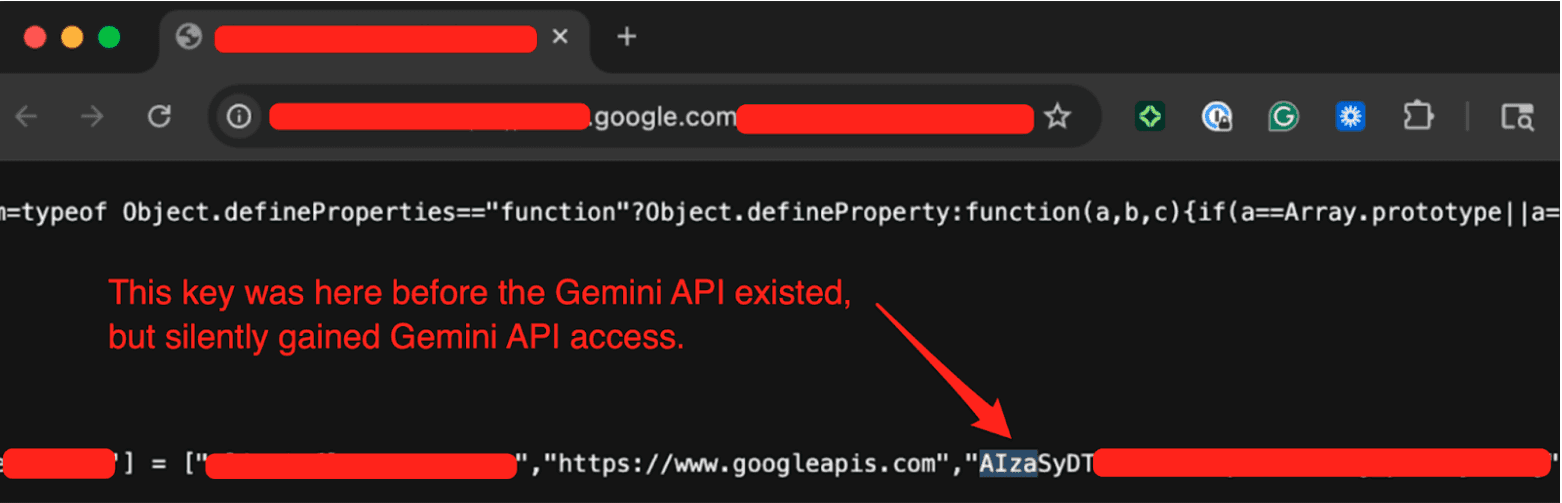

We provided Google with concrete examples from their own infrastructure to demonstrate the issue. One of the keys we tested was embedded in the page source of a Google product's public-facing website. By checking the Internet Archive, we confirmed this key had been publicly deployed since at least February 2023, well before the Gemini API existed. There was no client-side logic on the page attempting to access any Gen AI endpoints. It was used solely as a public project identifier, which is standard for Google services.

We tested the key by hitting the Gemini API's /models endpoint (which Google confirmed was in-scope) and got a 200 OK response listing available models. A key that was deployed years ago for a completely benign purpose had silently gained full access to a sensitive API without any developer intervention.

The Disclosure Timeline

We reported this to Google through their Vulnerability Disclosure Program on November 21, 2025.

Nov 21, 2025: We submitted the report to Google's VDP.

Nov 25, 2025: Google initially determined this behavior was intended. We pushed back.

Dec 1, 2025: After we provided examples from Google's own infrastructure (including keys on Google product websites), the issue gained traction internally.

Dec 2, 2025: Google reclassified the report from "Customer Issue" to "Bug," upgraded the severity, and confirmed the product team was evaluating a fix. They requested the full list of 2,863 exposed keys, which we provided.

Dec 12, 2025: Google shared their remediation plan. They confirmed an internal pipeline to discover leaked keys, began restricting exposed keys from accessing the Gemini API, and committed to addressing the root cause before our disclosure date.

Jan 13, 2026: Google classified the vulnerability as "Single-Service Privilege Escalation, READ" (Tier 1).

Feb 2, 2026: Google confirmed the team was still working on the root-cause fix.

Feb 19, 2026: 90-day disclosure window ends.

Credit Where It's Due

Transparently, the initial triage was frustrating: the report was dismissed as "Intended Behavior." But after we provided concrete evidence from Google's own infrastructure, the GCP VDP team took the issue seriously.

They expanded their leaked-credential detection pipeline to cover the keys we reported, thereby proactively protecting real Google customers from threat actors exploiting their Gemini API keys. They also committed to fixing the root cause, though we haven't seen a concrete outcome yet.

Building software at Google's scale is extraordinarily difficult, and the Gemini API inherited a key management architecture built for a different era. Google recognized the problem we reported and took meaningful steps. The open questions are whether Google will inform customers of the security risks associated with their existing keys and whether Gemini will eventually adopt a different authentication architecture.

Where Google Says They're Headed

Google publicly documented its roadmap. This is what it says:

Scoped defaults. New keys created through AI Studio will default to Gemini-only access, preventing unintended cross-service usage.

Leaked key blocking. They are defaulting to blocking API keys that are discovered as leaked and used with the Gemini API.

Proactive notification. They plan to communicate proactively when they identify leaked keys, prompting immediate action.

These are meaningful improvements, and some are clearly already underway. We'd love to see Google go further and retroactively audit existing impacted keys and notify project owners who may be unknowingly exposed, but honestly, that is a monumental task.

What You Should Do Right Now

If you use Google Cloud (or any of its services like Maps, Firebase, YouTube, etc.), the first thing to do is figure out whether you're exposed. Here's how.

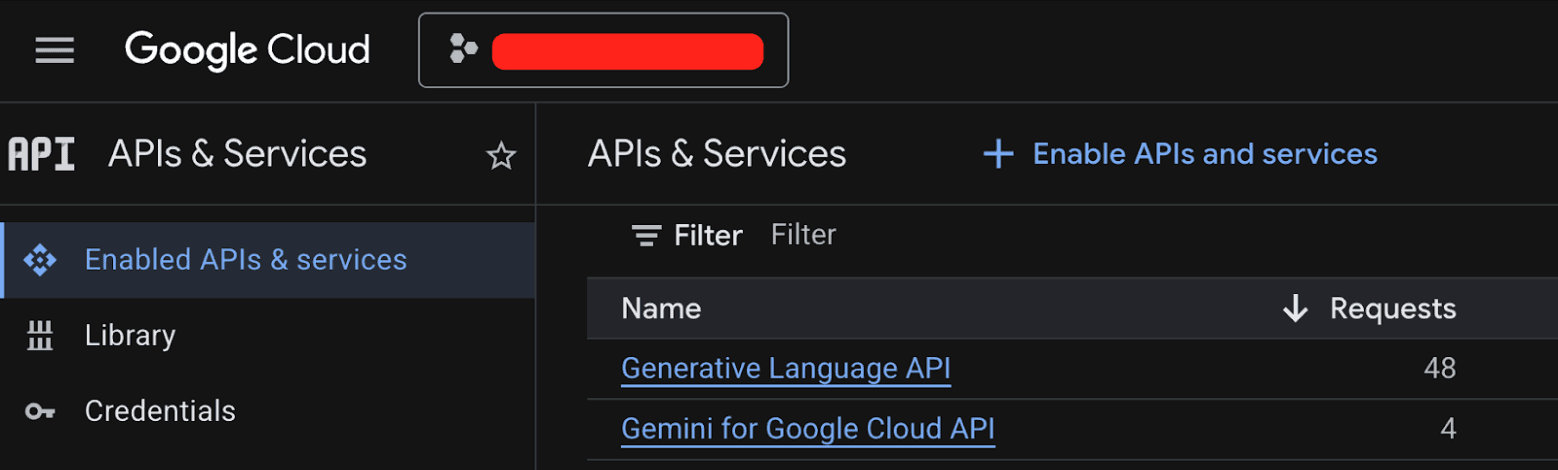

Step 1: Check every GCP project for the Generative Language API.

Go to the GCP console, navigate to APIs & Services > Enabled APIs & Services, and look for the "Generative Language API." Do this for every project in your organization. If it's not enabled, you're not affected by this specific issue.
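With many projects, the console check can be scripted. A minimal sketch, assuming the gcloud CLI is installed and authenticated, and assuming `gcloud services list --enabled --format=json` returns a list of objects whose `config.name` (or the last segment of `name`) is the service name, such as `generativelanguage.googleapis.com`. Check that shape against your own output before relying on it; `service_names` and `gemini_enabled` are hypothetical helpers.

```python
import json
import subprocess

GEMINI_SERVICE = "generativelanguage.googleapis.com"


def service_names(services_json: str) -> set[str]:
    """Extract service names from `gcloud services list --format=json` output (assumed shape)."""
    names = set()
    for svc in json.loads(services_json):
        # Prefer config.name; fall back to the tail of "projects/<n>/services/<service>".
        name = (svc.get("config") or {}).get("name") or svc.get("name", "").rsplit("/", 1)[-1]
        if name:
            names.add(name)
    return names


def gemini_enabled(project_id: str) -> bool:
    """Ask gcloud which APIs are enabled on one project and look for the Generative Language API."""
    out = subprocess.run(
        ["gcloud", "services", "list", "--enabled", f"--project={project_id}", "--format=json"],
        check=True,
        capture_output=True,
        text=True,
    ).stdout
    return GEMINI_SERVICE in service_names(out)
```

To cover every project, feed it the IDs from `gcloud projects list --format="value(projectId)"`.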

Step 2: If the Generative Language API is enabled, audit your API keys.

Navigate to APIs & Services > Credentials. Check each API key's configuration. You're looking for two types of keys:

Keys that have a warning icon, meaning they are set to unrestricted

Keys that explicitly list the Generative Language API in their allowed services

Either configuration allows the key to access Gemini.
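Step 2 can be scripted as well. A minimal sketch, assuming the JSON from `gcloud services api-keys list --project=PROJECT_ID --format=json` follows the API Keys API `Key` resource, where API restrictions appear as a `restrictions.apiTargets` list whose entries carry a `service` name; `gemini_capable_keys` is a hypothetical helper. It deliberately treats a key with website (referrer) restrictions but no API targets as unrestricted, since those controls are bypassable.

```python
import json

GEMINI_SERVICE = "generativelanguage.googleapis.com"


def gemini_capable_keys(keys_json: str) -> list[dict]:
    """Flag keys that can reach Gemini: no API restrictions at all, or Gemini explicitly listed.

    Assumes the output shape of `gcloud services api-keys list --format=json`."""
    flagged = []
    for key in json.loads(keys_json):
        targets = (key.get("restrictions") or {}).get("apiTargets") or []
        services = {t.get("service") for t in targets}
        if not targets:
            reason = "unrestricted"  # the console shows these with a warning icon
        elif GEMINI_SERVICE in services:
            reason = "lists Generative Language API"
        else:
            continue  # restricted to other APIs only
        flagged.append({
            "name": key.get("name"),
            "displayName": key.get("displayName"),
            "createTime": key.get("createTime"),
            "reason": reason,
        })
    return flagged
```

The listing does not include the key strings themselves; `gcloud services api-keys get-key-string` retrieves them for the next step.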

Step 3: Verify none of those keys are public.

This is the critical step. If a key with Gemini access is embedded in client-side JavaScript, checked into a public repository, or otherwise exposed on the internet, you have a problem. Start with your oldest keys first. Those are the most likely to have been deployed publicly under the old guidance that API keys are safe to share, and then retroactively gained Gemini privileges when someone on your team enabled the API.
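Once you have the key strings for the flagged keys, a quick way to start Step 3 is to search your built, publicly served assets for them, oldest keys first. A minimal sketch; `find_public_exposures`, its `(create_time, key_string)` input shape, and `public_dir` are assumptions. A clean result only covers the files you scanned, not repositories, mobile apps, or pages hosted elsewhere.

```python
from pathlib import Path


def find_public_exposures(keys: list[tuple[str, str]], public_dir: str) -> list[tuple[str, str, str]]:
    """Return (create_time, key, file) for each flagged key found under public_dir.

    `keys` holds (create_time, key_string) pairs. Consistently formatted UTC
    timestamps sort correctly as strings, so the oldest keys are reported first."""
    # Read every file once; errors="ignore" lets binary assets pass through harmlessly.
    contents = {
        path: path.read_text(encoding="utf-8", errors="ignore")
        for path in sorted(Path(public_dir).rglob("*"))
        if path.is_file()
    }
    hits = []
    for create_time, key in sorted(keys):
        for path, text in contents.items():
            if key in text:
                hits.append((create_time, key, str(path)))
    return hits
```

Any hit means the key is shipped to browsers and should be rotated.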

If you find an exposed key, rotate it.

Bonus: Scan with TruffleHog.

You can also use TruffleHog to scan your code, CI/CD pipelines, and web assets for leaked Google API keys. TruffleHog will verify whether discovered keys are live and have Gemini access, so you'll know exactly which keys are exposed and active, not just which ones match a regular expression.

```shell
trufflehog filesystem /path/to/your/code --only-verified
```

The pattern we uncovered here (public identifiers quietly gaining sensitive privileges) isn't unique to Google. As more organizations bolt AI capabilities onto existing platforms, the attack surface for legacy credentials expands in ways nobody anticipated.

Additional Resources

Webinar: Google API Keys Weren't Secrets. But then Gemini Changed the Rules.

Comments

In Google AI Studio, Google's documentation encourages deploying vibe-coded apps behind an open proxy that allows equivalent AI billing abuse, giving the impression that the API key is secure because it sits behind a proxy. Even an app with zero AI features exposes dollars-per-query video models unless the key is manually scoped. Vulnerable apps (all apps deployed from AI Studio) are easily found by searching Google, Twitter, or Hacker News. https://github.com/qudent/qudent.github.io/blob/master/_post...

I think the fact that it is not possible to put hard spending caps on API keys might be ruled illegal by some EU court soon enough, at least when they sell to consumers (given the explosion of vibecoding end-users making some apps). When I use OpenAI, Openrouter etc., I can put 10 $ on my API key, and when the key leaks, someone can use these 10 $ and that's it. With Google, there is no way to do that - there are extremely complicated "billing alerts" https://firebase.google.com/docs/projects/billing/advanced-b... , but these are time-delayed e-mails and there is no out of the box way to do the straightforward thing, which is to actually turn off the tap automatically once a budget is spent. The only native way to set a limit enforced immediately is by rate limiting - but I didn't see params which made it safe while usable in my case.

(A legal angle might be the Unfair Contract Terms Directive in the EU, though to my understanding plenty of individual countries have their own laws that may apply. A closely equivalent situation was the "bill shock" problem for mobile phone users, where people went on vacation and came home to an outrageously high roaming bill they didn't realize they had incurred. This is also limited in the EU today: by law, the service must be stopped after a certain charge is incurred.)

> When I use OpenAI, Openrouter etc., I can put 10 $ on my API key, and when the key leaks, someone can use these 10 $ and that's it.

On that note, I'll just mention that I had discovered over the last while that when you prepay $10 into your Anthropic account, either directly, or via the newer "Extra usage" in subscription plans, and then use Claude Code, they will repeatedly overbill you, putting you into a negative balance. I actually complained and they told me that they allow the "final query" to complete rather than cutting it off mid-process, which is of course silly, because Claude Code is typically used for long sessions, where the benefit of being cut off 52% into the task rather than 51% into it is essentially meaningless.

I ended up paying for these so far, but would hope that someone with more free time sues them on it.

I'm spitballing here, but I suspect that Google (like AWS) uses post-processing for billing: they run a job that scrapes the usage state and THEN bills you for it, whereas the major AI companies check billing on every incoming API request.

Yes, you are on the money. A cloud service provider needs to maintain reliability first and foremost, which means they won't have a runtime dependency on their billing system.

This means that billing happens asynchronously. You may use queues, you may do batching, etc. But you won't have a real-time view of the costs.

>they won't have a runtime dependency on their billing system

Well, that makes sense in principle, but they obviously do have some billing check that prevents me from making additional requests after that "final query". And they definitely have some check to prevent me from overutilizing my quota when I have an active monthly subscription. So whatever it is that they need to do, when I prepay $x, I'm not ok with them charging me more than that (or I would have prepaid more). It's up to them to figure this out and/or absorb the costs.

By coryrc 2026-02-26 19:17

> they obviously do have some billing check that prevents me from making additional requests after that "final query"

No they don't actually! They try to get close, but it's not guaranteed (for example, make that "final query" to two different regions concurrently).

Now, they could stand up a separate system with a guaranteed fixed cost, but few people want that and the cost would be higher, so it wouldn't make the money back.

You can do it on your end though: run every request sequentially through a service and track your own usage, stopping when reaching your limit.
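The client-side approach described in this comment can be sketched as a small guard that every request passes through. This variant reserves each request's worst-case cost before sending, refuses once the limit would be exceeded, and settles to the actual cost afterwards; the lock serializes the accounting so concurrent callers cannot jointly overshoot. `BudgetGuard`, `BudgetExceeded`, and the cent figures are hypothetical, and it assumes you can bound each request's cost up front. It only limits traffic that goes through your own code, so it does nothing against someone using a leaked key directly.

```python
import threading


class BudgetExceeded(Exception):
    pass


class BudgetGuard:
    """Track committed spend and refuse requests that could push it past the limit."""

    def __init__(self, limit_cents: int):
        self.limit_cents = limit_cents
        self.committed_cents = 0
        self._lock = threading.Lock()  # serializes accounting across threads

    def reserve(self, max_cost_cents: int) -> None:
        """Reserve the worst-case cost before sending; raise if it would exceed the limit."""
        with self._lock:
            if self.committed_cents + max_cost_cents > self.limit_cents:
                raise BudgetExceeded(
                    f"{self.committed_cents} + {max_cost_cents} > {self.limit_cents}"
                )
            self.committed_cents += max_cost_cents

    def settle(self, reserved_cents: int, actual_cents: int) -> None:
        """Replace a reservation with the real cost once the response is back."""
        with self._lock:
            self.committed_cents += actual_cents - reserved_cents


guard = BudgetGuard(limit_cents=1000)  # $10 budget
guard.reserve(300)                     # worst case for one call
# ... send the request here ...
guard.settle(300, 120)                 # the call actually cost $1.20
print(guard.committed_cents)           # 120
```

Reserving the worst case rather than checking after the fact is what makes the limit hard: the guard can only ever undershoot.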

By lelandbatey 2026-02-26 20:52

They do have a billing check, but that check is looking at "eventually consistent" billing data which could have arbitrary delays or be checked out-of-order compared to how it occurred IRL. This is a strategy that's typically fine when the margin of over-billing is small, maybe 1% or less. I take it from your description that the actual over-billing is more like dozens of dollars, potentially more than single-digit percentages on top of the subscription price. Here's hoping they tighten up metering <> billing.
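The failure mode described here, a cap enforced against billing data that lags behind actual usage, can be illustrated with a toy simulation. Everything in it is hypothetical (a fixed per-request cost, a meter that posts accumulated usage only every `lag` requests); it does not model any provider's actual pipeline, only why a check against a stale balance lets spend run past the cap.

```python
def simulate_overshoot(limit: int, cost_per_request: int, lag: int, attempts: int) -> int:
    """Return total spend when the cap is checked against a balance posted only every `lag` requests."""
    posted = 0   # spend visible to the admission check (the lagging billing view)
    pending = 0  # spend already incurred but not yet posted
    served = 0
    for _ in range(attempts):
        if posted >= limit:  # the admission decision uses the stale view
            break
        served += 1
        pending += cost_per_request
        if served % lag == 0:  # a batch job posts the accumulated usage
            posted += pending
            pending = 0
    return posted + pending


print(simulate_overshoot(limit=20, cost_per_request=1, lag=1, attempts=100))   # 20
print(simulate_overshoot(limit=20, cost_per_request=1, lag=30, attempts=100))  # 30
```

With immediate posting the cap holds exactly; with a 30-request lag the same $20 cap admits $30. Concurrent requests hitting the same stale view would widen the gap further.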

By messe 2026-02-26 21:32

Then the right thing to do from a consumer standpoint is to factor that overbilling into their upfront pricing, rather than surprising people with bills that they were led not to expect.

I don't know if it's still like this, but around a year ago I set a spending limit for an OpenAI API key, and it turned out it's not a true limit. I spent $80 on a $20-limited key in a matter of minutes because some bad code I wrote got stuck in a loop.

I still had to pay it or else I wouldn't have been able to use my account.

By lachiflippi 2026-02-27 12:48

OpenAI also does a really fun thing where prepaid credits just straight up expire after a year, which is straight up completely illegal in most (all?) of the EU.

By messe 2026-02-26 21:24

> or else I wouldn't have been able to use my account.

Would that have been so bad? The world might be a better place if people stopped pouring money into that cesspit.

By continuing to use their services, you're encouraging the anti-consumer tactics you're complaining about.

By jorl17 2026-02-27 2:23

It is still the case.

In fact, OpenAI's "billing", "usage tracking" and "billing/spending alerts" UX are all terrible. They look like completely independent features.

For example, you can set an alert on how much you've spent in a month, but not on how much you have left in your credit balance. So you never really know how much you can still spend unless you go check their slow and confusing UI. You can set it to auto-refill your credits and to limit that to some amount per month (I think?), but again the alerts for this are absolutely atrocious or entirely missing.

Another insane thing I've seen with OpenAI is that, for some reason, your account can be thousands in the red, and some prompts, with some models, or some feature set, still go through. I haven't been able to figure out what heuristic or rule they are using to determine when they let your request through and overbill you, or when they just deny it altogether. Maybe they let all text requests through? Or perhaps it just lets websearch requests through and denies anything else? Maybe it profiles your most common request and lets those go through? Maybe it has something to do with specific endpoints and APIs? Who knows.

We've moved entire projects off of them in part due to these issues. We got tired of constantly being in the red without a proper notification system (actually: with an insufficient, deceitful system), or of having seemingly random drops in requests only to find out suddenly that a combination of parameters got blocked. Please, just completely block me and make me pay. Or give me a better alerts system. We have the money. What we haven't got is the patience to deal with such an obtuse system.

let's hope it happens soon, I'm pretty sick of this reality where companies get to charge you whatever they want and it's designed to always be your fault

By AnthonyMouse 2026-02-26 15:02

You're configuring something that costs money (electricity, hardware, real estate) to provide. Either it's "pay as you go" or you have a flat rate and a cap.

If you have a cap and then your thing hits the front page and suddenly has 10000% more legitimate traffic than usual, and you want the legitimate traffic, they're going to get an error page instead of what you want. If there is no cap, you're going to get a large bill. People hate both of those things and will complain regardless of which one actually happens.

The main thing Google is screwing up here is not giving you the choice between them.

By StopDisinfo910 2026-02-26 17:30

The main thing Google is screwing up is that if my API key somehow leaks and I end up with extremely out of line billing at Microsoft, I will be on the phone with a customer representative as soon as we or they notice something weird happening and a solution will be found.

Google will probably have me go through five bots and if, by some kind of miracle, I manage to have a human on the phone, they will probably explain to me that I should have read the third paragraph of the fourth page of the self service doc and it's obviously my fault.

By disgruntledphd2 2026-02-27 14:50

It took me approximately 6 months to get a billing dispute resolved with Google. Somehow my maps key got leaked, and someone ran up 1.8k in charges on it.

Super, super painful. That being said, I'm still using Google for geocoding (mostly batch) because their service works better for my data.

Imagine the outrage here, when a company credit card expires and the cloud provider terminates all their instances, deletes all your storage and blob backups?

By nitwit005 2026-02-26 19:10

That does happen, it's just usually not when the card expires, but when the follow up billing emails get ignored for some period.

This is one of the reasons people have suggested using a different provider for backups.

it's not an either or, they can easily let me configure any kind of behavior that I want. No cap, a hard cap, a soft cap, a cap that I program with a python script, a cap where I throttle, a cap where I opt in to deleting certain machines to save money. It can all be done. People are complaining because obvious features are not provided. People would not be complaining if they had all the options that we needed to control how to scale resources in response to load, not just technical load but also financial load.

By AnthonyMouse 2026-02-26 16:22

You can already do any of those things in your own code when making the API requests. The issue here is, if you unintentionally try to make a billion expensive requests or allow someone else to do it against your account, do you want them to automatically turn off your stuff or do you want the bill that comes if they don't?

By DoctorOetker 2026-02-27 6:45

You seem to not comprehend the concept of informed choice.

Upstream in the comments someone said they expect the EU might soon rule this type of billing illegal. That doesn't mean it becomes illegal, it just means yet another reaffirmation or reminder that - yes - this is indeed illegal.

You said that no fixed response -whether that is allow unexpected billing to increase without limit upon a surge vs serving error pages- will be accepted by the clientele, because some want it one way and others want it the other way.

Why would you force a single shoe size onto a population? Give them the choice. Whenever freedom of choice is violated in the name of market freedom, it is nearly always a violation of law, it's just a matter of hoping one lives in a jurisdiction that upholds its laws

> The issue here is, if you unintentionally try to make a billion expensive requests or allow someone else to do it against your account, do you want them to automatically turn off your stuff or do you want the bill that comes if they don't?

That is precisely the choice people are asking for! And it doesn't have to be just those 2 options: let the user define their own trigger formulas for different levels of increase: a small one might result in a notification delayed until certain working hours on weekdays and log each visitors reported origin (referer header), a slightly larger one might result in a notification during awake hours regardless of weekday or workday, yet a further larger consumption increase may trigger an unconditional notification, yet a further one might trigger an unconditional notification that requires a timely confirmation by the user/organization, in the absence of which a soft measure could be taken like adding a small header to the page being served notifying visitors that while still functional a hug of death may be in progress, and asking the visitors to paste the URL of the page from where they clicked the link to your site (to make sure that a full URL can be consulted in case the host operators are unable to find the hyperlink that led to their site from merely the origin domain), yet another increase in traffic may be chosen to result in specifically rate limiting users from the originator domains that caused the peak, so that your regular visitors from the past can still make normal use of the page, and so on.

Do freedom, choice, informed choice, preparedness mean something to you?

We could have an open standard configuration textual machine readable file format for these choices and settings, so that people can share their settings, and the machine readable format could have <private> tags to wrap around phone numbers etc to notify, so that people can easily run a command line program or script that censors those exact values and replaces the first phone number like "<private><phone>(+32)474123456</phone></private>" with "<private><phone>generic phone number 1</phone></private>" and the second email address in the file like "<private><email>john.smith@nonprofit.org</email></private>" is replaced with "<private><email>generic.email@address.2</email></private>", so that people can easily export and share such files, possibly hosting it like robots.txt but say billing_policy.txt so people can inspect how others handle these situations so that popular consensus policies can form.

Hosting, compute etc. services that allow users to configure such files and have them be executed by the hosting service will be more attractive than those which don't.

By AnthonyMouse 2026-02-27 15:37

> You said that no fixed response -whether that is allow unexpected billing to increase without limit upon a surge vs serving error pages- will be accepted by the clientele, because some want it one way and others want it the other way.

No, it's because people dislike both of those things and don't want either one of them, and frequently fail to realize ahead of time that choosing between them is even necessary and then get upset by whichever one actually happens.

> Why would you force a single shoe size onto a population?

Here's my original post:

> The main thing Google is screwing up here is not giving you the choice between them.

> And it doesn't have to be just those 2 options

We're talking about an API used by programmers. You don't need them to give you any of that, all you need is for the API to tell you what your current usage is -- and even that is only necessary if something other than your own code is racking up usage. When you're the one making queries and the price of each one is known ahead of time or available via the API, you can already implement any of that logic yourself.

By mindslight 2026-02-26 16:42

You're oversimplifying the problem in the other direction. Fine-grained scriptability of hard limits would bump up against all of the thorny distributed systems problems. But I do agree that fixing the simple cases is straightforward: maximum spend rates per instant and per unit of time (e.g. per minute, hour, day, month). Providers would shoulder the small costs from the slightly-leaky assumptions they have to make to implement those limits, and users can then operate within that framework to optimize what they want on a best-effort basis (e.g. a script that responds within a minute to explicitly scale resources, or a human-in-the-loop notification cycle over the course of hours so that you have the possibility to say "actually this is popularity traffic that I really do want to pay for", etc.).
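The "maximum spend rates per unit of time" idea can be sketched as a set of sliding-window caps that must all have room before a charge is admitted. This is a minimal single-process sketch; `MultiWindowSpendLimiter`, the window sizes, and the limits are hypothetical, and amounts are best kept in integer cents. A provider would have to enforce something like it across a distributed system, which is where the slightly-leaky assumptions mentioned in the comment come in.

```python
import time
from collections import deque


class MultiWindowSpendLimiter:
    """Admit a charge only if every sliding window (e.g. per minute, per day) stays within its cap."""

    def __init__(self, caps: dict[float, int], clock=time.monotonic):
        # caps maps window length in seconds -> maximum spend within that window
        self.caps = caps
        self.clock = clock
        self.events = deque()  # (timestamp, amount) pairs, oldest first
        self.longest = max(caps)

    def _spent_since(self, cutoff: float) -> int:
        return sum(amount for ts, amount in self.events if ts > cutoff)

    def try_spend(self, amount: int) -> bool:
        now = self.clock()
        # Drop events older than the longest window; no cap can see them any more.
        while self.events and self.events[0][0] <= now - self.longest:
            self.events.popleft()
        for window, cap in self.caps.items():
            if self._spent_since(now - window) + amount > cap:
                return False
        self.events.append((now, amount))
        return True
```

For example, `MultiWindowSpendLimiter({60: 100, 86400: 250})` allows at most $1.00 per minute and $2.50 per day; a refused charge is the point where a script or a human-in-the-loop notification could take over.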

By Eddy_Viscosity2 2026-02-26 12:33

> I'm pretty sick of this reality where companies get to charge you whatever they want and it's designed to always be your fault

But have you considered it from the company's POV? Charging whatever you like, where it's always the customer's fault, is a pretty sweet deal. Up next in the innovation pipeline is charging customers extra fees for something or other. It'll be great!

By shimman 2026-02-26 14:52

Why should I care about the company's POV? The company always wants to rat fuck everyone to make money. The company should be legally compelled to care about the customer because that's the only way these things change.

By SoftTalker 2026-02-26 19:46

This is just the utility model. It's nothing particularly nefarious. Consider what your electric utility, your water utility, etc. do. If you use more, you pay more. If someone comes around and hooks up a garden hose to your outside faucet and steals your water, or plugs an extension cord into your outside outlet and steals your electricity, you still pay. Unless you can catch the thief and make him pay.

By cool_dude85 2026-02-26 20:29

Funny enough, the utility business broadly wants to move away from this model to more of a cap-based prepaid model. Where I live, to get on the standard payment system may require a quite hefty deposit up front, but the prepaid payment option does not. I get the impression that, if not for customer sentiment and inertia, this would be the default option.

By cwmoore 2026-02-26 14:16

Healthy, even.

By dathinab 2026-02-26 12:50

I think the term you are looking for is "negligence".

But not in the casual sense of the word, rather in the legal sense: "the company didn't follow the legally required baseline of acting with due diligence".

In general, companies are required to act with diligence; this is also, e.g., where punitive damages come in, to give companies an incentive to act with diligence, or else they might need to pay far above the actual damages done.

This is also why in some countries the executives involved in the negligent decisions, up to the CEO, can be held _personally_ liable for negligence. (Though mostly in cases of negligence where people were physically harmed or died, and mostly as an alternative approach to keeping companies diligent, i.e. instead of punitive damages.)

The main problem is that in many cases companies wriggle their way out of it with a mixture of "make-pretend" diligence, lawyer nonsense dragging things out, and early settlements.

By chrisjj 2026-02-26 12:55

Upvoted.

Not illegal, but it should make enforcing payment illegal.

By gmerc 2026-02-26 10:20

Not illegal enough to worry about. nothing a peace board donation can’t fix.

By RobotToaster 2026-02-26 10:20

Sure, after 6 years in court you may get a settlement, 95% of which will go towards paying your legal fees.

> 95% of which will go towards paying your legal fees

laughs in European

I laughed. No, in Europe when you win a case like this, the judge usually forces the losing party to pay the winner's legal expenses. Especially if the losing party is a big corporation.

By staticman2 2026-02-26 13:06

It is not. Legal fees are rarely awarded in the U.S.

I should have said if you recover it in your damages, which every competent attorney will push for.

By staticman2 2026-02-26 13:41

Legal fees are not something you are usually legally entitled to.

Your attorney can push for whatever illegal thing they can think of, it doesn't mean you will get it.

> Your attorney can push for whatever illegal thing they can think of, it doesn't mean you will get it.

It is not illegal to include legal fees in damages.

By staticman2 2026-02-27 13:19

By illegal I mean contrary to American law.

Legal fees are literally not damages. A court granting legal fees would be doing that in addition to damages.

In most cases the jury will never even be told what your attorney's fees are, and they are not permitted to award them:

https://en.wikipedia.org/wiki/American_rule_(attorney%27s_fe...

By patmorgan23 2026-02-26 15:33 Requesting and being granted legal fees are two different things.

The default "American rule" is that each party pays their own legal fees, unless there is a relevant fee shifting rule.

By staticman2 2026-02-27 13:16 > Under what statute is it illegal to request legal fees?

You can request anything you want. Granting it would be illegal.

An attorney asking the judge to break the law and award attorney fees is literally asking for something illegal in most circumstances. There are exceptions. (By illegal I mean contrary to law.)

It's funny that 4 people downvoted me instead of bothering to check Wikipedia.

https://en.wikipedia.org/wiki/American_rule_(attorney%27s_fe...

By Dylan16807 2026-02-26 21:01 > Downvoted for asking an honest question.

If you put in "surely" and people think it's quite wrong then they might downvote. It's not personal.

It’s possibly civil, but I don’t see how this type of negligence would be breaking a law. If it were illegal, a massive number of independent consultants would be serving prison sentences. I’m not sure how that makes anything better, though I guess a lot of people think rage is fun.

By marcosdumay 2026-02-26 14:50 Civil law is law, and breaking it is illegal. You seem to be misunderstanding it.

How dare you question a corporation's ability to make unlimited money?

By blinding-streak 2026-02-26 13:01 Will someone think of the shareholders? /s

> Leaked key blocking. They are defaulting to blocking API keys that are discovered as leaked and used with the Gemini API.

There are no "leaked" keys if google hasn't been calling them a secret.

They should ideally prevent all keys created before Gemini from accessing Gemini. It would be funny (though not surprising) if their leaked-key "discovery" had false positives and started blocking keys from Gemini.

Yeah, it's tremendously unclear how they can even recover from this. I think the most selective fix would be to, at minimum, remove the Generative Language API grant from every API key that was created before that API was released. But even that isn't a full fix, because there are definitely keys created after that API was released which accidentally got it. They might have to just blanket-remove the Generative Language API grant from every API key ever issued.

This is going to break so many applications. No wonder they don't want to admit this is a problem. This is, like, a whole-number-percentage-of-Gemini-traffic level of fuck-up.

Jesus, and the keys leak cached context and Gemini uploads. This might be the worst security vulnerability Google has ever pushed to prod.
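The two recovery options floated above (strip the Generative Language API grant only from keys created before it launched, or from every key) can be sketched as a filter over key records. This is a toy model under stated assumptions: the `ApiKeyRecord` shape, the key ids, and the launch date are hypothetical placeholders, and an empty allow-list stands in for an unrestricted key that works with any API enabled on its project.

```typescript
// Toy model only: ApiKeyRecord is a hypothetical shape, not Google's schema.
interface ApiKeyRecord {
  id: string;
  createdAt: Date;
  // Explicit API allow-list; an empty list models an unrestricted key.
  allowedApis: string[];
}

// Service name of the Generative Language (Gemini) API.
const GEMINI_API = "generativelanguage.googleapis.com";

type RevocationMode = "selective" | "blanket";

// A key can reach Gemini if it is unrestricted or explicitly lists Gemini
// (assuming Gemini is enabled on the key's project).
function canReachGemini(key: ApiKeyRecord): boolean {
  return key.allowedApis.length === 0 || key.allowedApis.includes(GEMINI_API);
}

// Ids of keys that would lose Gemini access under each rule.
// "selective": only keys created before Gemini launched.
// "blanket": every key that can currently reach Gemini.
function keysToStrip(
  keys: ApiKeyRecord[],
  geminiLaunch: Date,
  mode: RevocationMode,
): string[] {
  return keys
    .filter(canReachGemini)
    .filter((k) => mode === "blanket" || k.createdAt.getTime() < geminiLaunch.getTime())
    .map((k) => k.id);
}

// Usage with made-up keys; the launch date is a placeholder, not the real one.
const launch = new Date("2023-12-01T00:00:00Z");
const keys: ApiKeyRecord[] = [
  { id: "maps-2019", createdAt: new Date("2019-05-01T00:00:00Z"), allowedApis: [] },
  { id: "maps-2024", createdAt: new Date("2024-03-01T00:00:00Z"), allowedApis: [] },
  { id: "maps-only", createdAt: new Date("2018-01-01T00:00:00Z"), allowedApis: ["maps-backend.googleapis.com"] },
];
console.log(keysToStrip(keys, launch, "selective")); // [ 'maps-2019' ]
console.log(keysToStrip(keys, launch, "blanket")); // [ 'maps-2019', 'maps-2024' ]
```

Only the blanket rule catches `maps-2024`, an unrestricted key created after launch, which is exactly the gap the comment points out in the selective rule. Neither rule can distinguish intentional Gemini use from accidental exposure.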

By decimalenough 2026-02-26 5:36 The Gemini API is not enabled by default, it has to be explicitly enabled for each project.

The problem here is that people create an API key for use X, then enable Gemini on the same project to do something else, not realizing that the old key now allows access to Gemini as well.

Takeaway: GCP projects are free and provide strong security boundaries, so use them liberally and never reuse them for anything public-facing.
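The failure mode described here can be made concrete with a toy model: a key is checked against the APIs enabled on its project, and an unrestricted key is accepted by every one of them. The `Project`/`ApiKey` types and `keyAuthorizes` are hypothetical and illustrative only, not Google's implementation; the two service names are used as labels.

```typescript
// Toy model of API-key checks; illustrative only, not Google's implementation.
interface Project {
  enabledApis: Set<string>;
}

interface ApiKey {
  project: Project;
  // undefined models an unrestricted key; otherwise an explicit allow-list.
  apiRestrictions?: Set<string>;
}

function keyAuthorizes(key: ApiKey, api: string): boolean {
  if (!key.project.enabledApis.has(api)) return false; // API is off for the project
  if (key.apiRestrictions === undefined) return true; // unrestricted: any enabled API
  return key.apiRestrictions.has(api);
}

const MAPS = "maps-backend.googleapis.com";
const GEMINI = "generativelanguage.googleapis.com";

const project: Project = { enabledApis: new Set([MAPS]) };
const publicKey: ApiKey = { project }; // the key pasted into website JS
const scopedKey: ApiKey = { project, apiRestrictions: new Set([MAPS]) };

console.log(keyAuthorizes(publicKey, GEMINI)); // false: Gemini not enabled yet
project.enabledApis.add(GEMINI); // later, someone enables Gemini on the project
console.log(keyAuthorizes(publicKey, GEMINI)); // true: the old public key now works
console.log(keyAuthorizes(scopedKey, GEMINI)); // false: key is restricted to Maps
```

In this model, either restricting each key to the APIs it actually needs or keeping Gemini in a separate project keeps the public key away from Gemini.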

Imagine enabling Maps, deploying it on your website, then enabling the Google Drive API, and that key immediately providing the ability to store or read files. It didn't work like that for any other service, so why should it work that way for Gemini?

Also, for APIs with quotas you have to be careful not to use multiple GCP projects for a single logical application, since those quotas are tracked per application, not per account. It is definitely not Google's intent that you should have one GCP project per service within a single logical application.

Really? I make multiple GCP projects per app. One project for the (eg) Maps API, one for Drive, one for Mail, one for $THING. Internal corp-services might have one project with a few APIs enabled - but for the client-app that we sell, there are many projects with one or two APIs enabled only.

If you ever have to enable public OAuth on such a project, you'll need to provide a list of all the API projects in use with the application, and Google Trust and Safety will pressure you to merge them together into a single GCP project. I've been through it.

You can do what you're describing but it's not the model Google is expecting you to use, and you shouldn't have to do that.

It seems what happened here is that some extremely overzealous PM, probably fueled by Google's insane push to maximize Gemini's usage, decided that the Gemini API on GCP should be default enabled to make it easier for people to deploy, either being unaware or intentionally overlooking the obvious security implications of doing so. It's a huge mistake.

> decided that the Gemini API on GCP should be default enabled to make it easier for people to deploy

Like deciding ATM cabinets should be default open to make it easier for people to withdraw cash.

No, there must be more behind this than overzealousness.

By chii 2026-02-27 3:52 On the other hand, I would not attribute to malice what could be reasonably attributed to stupidity.

By busko 2026-02-27 2:52 Why would they encourage more resource use, increasing their cost?

Gemini should have had its own API key, separate from their traditionally public-facing API IDs (which they call keys), and API keys should default to being tightly scoped to their use case rather than being unrestricted.

Who cares if you have three API keys for three services.

Quite frankly, putting any API information in things like URL params or client-side code just doesn't sit right with me. It breaks the norm in a way that could become, and now is, a security concern.

By chrisjj 2026-02-26 13:03 > It didn't work like that for any other service, why should it work that way for Gemini.

Artificial Intelligence service design and lack of human intelligence are highly correlated. Who'd have guessed??

By refulgentis 2026-02-26 5:58 I’m usually a client-side dev and an ex-Googler, and I’m very curious how this happened.

I can somewhat follow this line of thinking: it’s pretty intentional and clear what you’re doing when you flip on APIs on the Google Cloud site.

But I can’t wrap my mind around what an API key is. All the Google Cloud stuff I’ve done the last couple of years involves a lot of security stuff and permissions (namely, using Gemini, of all things. The irony…).

Somewhat infamously, there’s a separate Gemini API specifically to get the easy API key based experience. I don’t understand how the concept of an easy API key leaked into Google Cloud, especially if it is coupled to Gemini access. Why not use that to make the easy dev experience? This must be some sort of overlooked fuckup. You’d either ship this and API keys for Gemini, or neither. Doing it and not using it for an easier dev experience is a head scratcher.

By StilesCrisis 2026-02-26 12:46 They started off behind, and have been scrambling to catch up. This means they didn't get the extra year of design-doc hell before shipping, so mistakes were made.

By liveoneggs 2026-02-26 22:09 They auto-create projects and API keys: gen-lang-client-12345

Apps Script creates projects as well, but Maps just generates API keys in the current project.

---

Get Started on Google Maps Platform You're all set to develop! Here's the API key you would need for your implementation. API key can be referenced in the Credentials section.

By tempest_ 2026-02-26 15:53 I was trying to test the gemini-cli using Code Assist Standard.

To this day I am unable to access the models they say I should be able to.

I still get 2.5 only, despite enabling previews in the Google Cloud config, etc. etc.

Access seems to randomly turn on and off, and it changes depending on the auth used (OAuth, API key, etc.).

The entire gemini-cli repo looks like it is full of slop, with 1000 devs trying to be the first to pump every issue into Claude and claim some sort of clout.

It is an absolute shit show and not a good look.

By franga2000 2026-02-26 7:43 Isn't there a limit to the number of projects you can make, after which you have to ask support to increase it?

There is, yes. The rumor mill suggests that the default limit is 30.

At $DAYJOB, we had a (not very special) special arrangement with GCP, and I never heard of anyone who was unable to create a project in our company's orgs [0].

Given how Google never, ever wants to have a human do customer support, I expect a robot will quickly auto-approve requests for "number of projects" quota increases. I know that's how it worked at work.

[0] ...with the exception of errors caused by GCP flakiness and other malfunction, of course.

By kl4m 2026-02-26 12:01 Many products using the Cloud APIs auto-create projects. I know of AI Studio and Google Script (including scripts embedded in Docs, Sheets, etc.).

So many organizations have the IAM "Project creator" role assigned to everyone at the org level. I think it's even a default.

By sadeshmukh 2026-02-26 16:28 Can vouch: I put in a request for 20 extra projects, which was approved within hours.

By tisdadd 2026-02-26 17:07 As long as you are over a certain spend. I started something for my own project and went to apply the recommended architecture, which does not work without a quota increase. Since it was a fresh account, the email said they would not look at the request until I had spent, or pre-spent, a certain amount of money. Frankly, during a trial period when evaluating at prior enterprises, that would have made me just say no to their cloud. One expects that the recommended architecture can be deployed in the trial run without hoops.

By liveoneggs 2026-02-26 22:06 I was exploring this today and just clicked on "Maps Platform" or "APIs & Services" to explore, and it immediately popped up a screen with "This is your API key for maps to start using!" without my input.

It sent me to a url: https://console.cloud.google.com/google/maps-apis/onboard;fl...

which auto-generated an API key for me to paste into things ASAP.

---

Get Started on Google Maps Platform You're all set to develop! Here's the API key you would need for your implementation. API key can be referenced in the Credentials section.

Every time someone proposes protobuf as an RPC format, I respond “Hell no! There’s no support for protocol versioning.”

Of course, I bring this up because they could just version their API keys, completely solving this problem and preventing future ones like it.

Versioning data formats is wrongthink over there, so I’m guessing they just… won’t.
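One reading of the key-versioning suggestion, as a minimal sketch: every key carries a version marker, and sensitive APIs refuse keys minted under the old "not a secret" contract. The `k2.` prefix, the `MIN_KEY_VERSION` table, and the policy itself are hypothetical; only the `AIza` prefix matches today's keys.

```typescript
// Hypothetical versioned-key scheme; Google ships nothing like this.
type KeyVersion = 1 | 2;

// v1: legacy "AIza..." keys, documented as safe to publish.
// v2: hypothetical secret keys with an explicit version marker.
function keyVersion(key: string): KeyVersion | null {
  if (key.startsWith("k2.")) return 2;
  if (key.startsWith("AIza")) return 1;
  return null; // unknown format
}

// Minimum key version each API would accept under this scheme.
const MIN_KEY_VERSION: Record<string, KeyVersion> = {
  "maps-backend.googleapis.com": 1,
  "generativelanguage.googleapis.com": 2,
};

function apiAcceptsKey(api: string, key: string): boolean {
  const version = keyVersion(key);
  const min = MIN_KEY_VERSION[api];
  // Unknown APIs and unknown key formats are rejected (default deny).
  return version !== null && min !== undefined && version >= min;
}

console.log(apiAcceptsKey("maps-backend.googleapis.com", "AIzaPublicExample")); // true
console.log(apiAcceptsKey("generativelanguage.googleapis.com", "AIzaPublicExample")); // false
console.log(apiAcceptsKey("generativelanguage.googleapis.com", "k2.secretExample")); // true
```

The point of the version marker is that a new sensitive API can declare a higher minimum, so keys published under the old contract never silently gain access to it.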

By hedora 2026-02-27 22:08 Yep: JSON Schema. Alternatively, with TypeScript you can write:

export type FooRpcV1 = { version: 1, ... }
export type FooRpcV2 = { version: 2, ... }

or the equivalent in zod syntax, and it'll do the right thing statically and at runtime (ask an LLM for help with the syntax).

With protobufs (specifically protoc), you get some type like:

export type FooRpc = { version: 1 | 2, v1fieldA?: string, v1fieldB?: number, v2fieldA?: string, v2fieldB?: string };

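which admits 2^5 = 32 message shapes (two version values times 2^4 present/absent combinations of the four optional fields), even if all fields of both versions were mandatory. The application logic then needs to validate it.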
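A hand-written stand-in for the discriminated union described above, showing both halves of the claim: the compiler narrows on `version`, and a runtime check rejects the mixed shapes that the flattened type allows. A zod schema would give an equivalent check; zod is left out here only to keep the sketch dependency-free. The field names are the comment's placeholders.

```typescript
// Each version is its own object type; `version` is the discriminant.
type FooRpcV1 = { version: 1; v1fieldA: string; v1fieldB: number };
type FooRpcV2 = { version: 2; v2fieldA: string; v2fieldB: string };
type FooRpc = FooRpcV1 | FooRpcV2;

// Runtime validation: accept exactly one version's shape, reject everything else.
function parseFooRpc(input: unknown): FooRpc | null {
  if (typeof input !== "object" || input === null) return null;
  const { version, v1fieldA, v1fieldB, v2fieldA, v2fieldB } =
    input as Record<string, unknown>;
  if (version === 1 && typeof v1fieldA === "string" && typeof v1fieldB === "number") {
    return { version: 1, v1fieldA, v1fieldB };
  }
  if (version === 2 && typeof v2fieldA === "string" && typeof v2fieldB === "string") {
    return { version: 2, v2fieldA, v2fieldB };
  }
  return null; // mixed, incomplete, or unknown-version messages
}

function summarize(msg: FooRpc): string {
  // Narrowing on `version` exposes only that version's fields in each branch.
  return msg.version === 1
    ? `v1 ${msg.v1fieldA} ${msg.v1fieldB}`
    : `v2 ${msg.v2fieldA} ${msg.v2fieldB}`;
}

const ok = parseFooRpc({ version: 2, v2fieldA: "a", v2fieldB: "b" });
console.log(ok ? summarize(ok) : "rejected"); // v2 a b
console.log(parseFooRpc({ version: 1, v2fieldA: "a" })); // null
```

Instead of 32 shapes to handle, the application sees exactly two valid ones or `null`.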

I started replying with a clever approach to layer scopes onto keys… but nope. Doesn’t work.

How did this get past any kind of security review at all? It’s like using usernames as passwords.

By Ekaros 2026-02-26 8:53 Thinking maliciously: allowing this increases billable usage. That increases the bottom line and makes the stock go up... which is good for vesting...

Sheesh. We're in a world where a global Big Tech security team lacks the competence to run even one high-street locksmith.

I hope Google has a database with the creation timestamp for every API key they issued.

By 827a 2026-02-26 14:56 You can see the creation date even on the GCloud dashboard. But this information isn't helpful in recovering from this issue, if they're interested in recovering correctly, because there's no guarantee that even keys created before the launch of Gemini didn't have Gemini access added to the keys intentionally. There are also likely public keys created after the launch of Gemini that also erroneously received the Gemini grant. The key creation date is ultimately useless; what it comes down to is whether the key's usage is intentional or malicious, which is impossible for Google to determine without involving the customer.

By StilesCrisis 2026-02-26 12:47 If there's one thing Google is good at, it's logging.

By ddalex 2026-02-26 9:19 I think Google has a database with everything. EVERYTHING.

Ohh, so that's how that happened. I had noticed (purely for research purposes, of course) that some of Google's own keys hardcoded into older Android images were usable for Gemini (some were instantly rate-limited, so presumably already used by many other people, but some were still usable) until they all got disabled as leaked about two months ago. Before that, they had also gradually disabled Gemini API access on some of them.

By addandsubtract 2026-02-26 12:41 I also noticed lots of GitHub projects expose their Gemini keys and was confused. This explains a lot.
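For anyone who ships a key in client code, or finds their own key in a public repo, a quick self-check is whether the Gemini API's model-listing endpoint (`GET https://generativelanguage.googleapis.com/v1beta/models?key=...`) accepts it. A minimal sketch, assuming Node 18+ (global `fetch` and `URL`) and assuming the simplified status mapping in `classifyStatus`: a key that is invalid, restricted to other APIs, or whose project lacks the Gemini API should be rejected with a 4xx. Only run it against keys you own.

```typescript
// Listing models succeeds only if the key is accepted by the Gemini API.
const GEMINI_MODELS_ENDPOINT = "https://generativelanguage.googleapis.com/v1beta/models";

type GeminiExposure = "reaches-gemini" | "rejected" | "unknown";

// Build the request URL with the key as the `key` query parameter.
function modelsListUrl(apiKey: string): string {
  const url = new URL(GEMINI_MODELS_ENDPOINT);
  url.searchParams.set("key", apiKey);
  return url.toString();
}

// Assumed mapping: 200 = accepted, 400/401/403 = rejected. Anything else
// (e.g. 429 rate limiting or 5xx) says nothing definite about the key.
function classifyStatus(status: number): GeminiExposure {
  if (status === 200) return "reaches-gemini";
  if (status === 400 || status === 401 || status === 403) return "rejected";
  return "unknown";
}

async function checkOwnKey(apiKey: string): Promise<GeminiExposure> {
  const res = await fetch(modelsListUrl(apiKey));
  return classifyStatus(res.status);
}
```

If `await checkOwnKey(key)` returns `"reaches-gemini"` for a Maps or Firebase key you publish, that key should be restricted to the APIs it actually needs or replaced.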