About three months ago I started working on the RISC-V port of Fedora Linux. A lot has happened since then.

Triaging

I went through the Fedora RISC-V tracker entries, triaged most of them (at the moment 17 entries are left in NEW) and tried to handle whatever was possible.

Fedora packaging

My usual way of working involves fetching the sources of a Fedora package (`fedpkg clone -a`) and then building it (`fedpkg mockbuild -r fedora-43-riscv64`). After some time, I check whether it built, and if not, I go through the build logs to find out why.
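The two steps above can be sketched as a small Python wrapper. This is only an illustration, not the tooling actually used for the port: the function names and the dry-run default are assumptions, and the only `fedpkg` invocations used are the two quoted in the text. It assumes `fedpkg clone` creates a directory named after the package.

```python
import subprocess
from pathlib import Path

# Mock configuration name taken from the text.
MOCK_ROOT = "fedora-43-riscv64"


def workflow_commands(package, mock_root=MOCK_ROOT):
    """Return (argv, working directory) pairs for the clone and build steps."""
    return [
        # Anonymous clone of the package's dist-git repository.
        (["fedpkg", "clone", "-a", package], Path(".")),
        # Local build in mock, run from inside the cloned directory.
        (["fedpkg", "mockbuild", "-r", mock_root], Path(package)),
    ]


def run_workflow(package, mock_root=MOCK_ROOT, dry_run=True):
    """Run the steps in order and stop at the first failure.

    With dry_run=True (the default, a hypothetical safety choice) the
    commands are only printed. Returns True if every step succeeded.
    """
    for argv, cwd in workflow_commands(package, mock_root):
        print(f"[{cwd}] {' '.join(argv)}")
        if dry_run:
            continue
        if subprocess.run(argv, cwd=cwd).returncode != 0:
            return False
    return True
```

Calling `run_workflow("iyfct")` prints the two commands without running them; passing `dry_run=False` would execute them, and a `False` result is the cue to go and read the build logs.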

The effect? So far, I have sent 86 pull requests for Fedora packages, from heavy packages like “llvm15” to simple ones like “iyfct” (a simple game). Most of them have been merged, and most of those have been built for Fedora 43. We can then build them as well, because we follow the ‘f43-updates’ tag in Fedora Koji.

Slowness

Working on packages brings up a hard, sometimes controversial topic: speed. Or rather, the lack of it.

You see, RISC-V hardware is slow at the moment, which results in terrible build times. Look at the details of the binutils 2.45.1-4.fc43 package:

| Architecture | Cores | Memory | Build time |
|---|---|---|---|
| aarch64 | 12 | 46 GB | 36 minutes |
| i686 | 8 | 29 GB | 25 minutes |
| ppc64le | 10 | 37 GB | 46 minutes |
| riscv64 | 8 | 16 GB | 143 minutes |
| s390x | 3 | 45 GB | 37 minutes |
| x86_64 | 8 | 29 GB | 29 minutes |
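To put the table into ratios, here is a small sketch that computes how many times longer the riscv64 build took than each other architecture. The numbers are copied from the table; the helper name `slowdown_vs` is just for illustration.

```python
# Build times in minutes, copied from the binutils 2.45.1-4.fc43 table.
BUILD_MINUTES = {
    "aarch64": 36,
    "i686": 25,
    "ppc64le": 46,
    "riscv64": 143,
    "s390x": 37,
    "x86_64": 29,
}


def slowdown_vs(arch, reference="riscv64", times=BUILD_MINUTES):
    """Return how many times longer `reference` took compared to `arch`."""
    return times[reference] / times[arch]


for arch in sorted(BUILD_MINUTES):
    if arch != "riscv64":
        print(f"riscv64 took {slowdown_vs(arch):.1f}x as long as {arch}")
```

On these numbers the riscv64 build is between roughly 3.1x (ppc64le) and 5.7x (i686) slower. Keep in mind that the builders differ in core count and memory, so this compares whole builders rather than individual cores.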

It is also worth mentioning that the current build of the RISC-V Fedora port is done with LTO disabled, to cut down on memory usage and build times.

RISC-V builders have four or eight cores and 8, 16 or 32 GB of RAM (depending on the board). Those cores are usually compared to Arm Cortex-A55 ones, the lowest-end CPU cores in today’s Arm chips.

The UltraRISC UR-DP1000 SoC, present on the Milk-V Titan motherboard, should improve the situation a bit (and can have 64 GB of RAM). The same goes for SpacemiT K3-based systems (but with only 32 GB of RAM). Both will be an improvement, but not the final solution.

We need hardware capable of building the above “binutils” package in under one hour, with LTO enabled system-wide and so on. It also needs to be rackable and manageable like any other boring server. Without it, we cannot even plan for the 64-bit RISC-V architecture to become one of the official, primary architectures in Fedora Linux.
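As a rough back-of-the-envelope check of what "under one hour" means, the sketch below divides the riscv64 binutils build time quoted earlier (143 minutes, with LTO disabled) by the 60-minute target. The constant and function names are only illustrative.

```python
RISCV64_BUILD_MINUTES = 143  # binutils 2.45.1-4.fc43 on riscv64, LTO disabled
TARGET_MINUTES = 60          # "under one hour"


def required_speedup(current_minutes, target_minutes):
    """Return the factor by which the build has to get faster."""
    return current_minutes / target_minutes


speedup = required_speedup(RISCV64_BUILD_MINUTES, TARGET_MINUTES)
print(f"needed speedup: at least {speedup:.2f}x")
```

That is a lower bound of about 2.4x. The measured build ran without LTO, so enabling LTO system-wide would push the required factor higher by an amount that is not known from these numbers.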

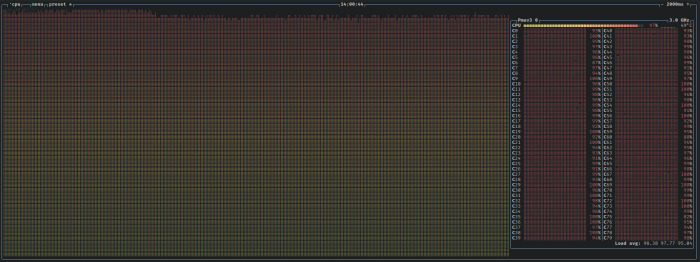

I still use QEMU

Such long build times are why QEMU is still useful to me. You see, with 80 emulated cores, I can build the “llvm15” package in about 4 hours. Compare that to 10.5 hours on a Banana Pi BPI-F3 builder (it may be quicker on a P550 one).

And LLVM packages make real use of both the available cores and memory. I wonder how fast it would go on the 192/384 cores of an Ampere One-based system.

Future plans

We plan to start building Fedora Linux 44. If things go well, we will use the same kernel image on all of our builders (the current ones use a mix of kernel versions). LTO will still be disabled.

As for the lack of speed… there are plans to bring in new, faster builders, and probably to assign some of the heavier packages to them.