These are only a few of the things I've built with Claude Code since I started using it. Most of them are experimental, and I've read some reports of it not doing as well on massive real-world code bases, but from what I've seen I'd be surprised if it weren't still useful in those contexts given enough guidance. I'm still surprised by how much better it is as a tool when given a lot of context and input. Here are a few other toy projects I've had it spit out - all things that I've wanted to build for months or years but never found the time for. Now you can do stuff like this in a few minutes or hours instead of days or weeks.

Building a HackerNews comment ranker plugin

I've often been annoyed by comments on HackerNews that are not at all about the article they're commenting on. The headline will be "Bitcoin adopts a new FlibbityGippity Protocol and can now handle 2.3 transactions per day" and someone will comment that all Crypto projects are scams or something. Note that I don't care about the quality of the comment, or whether or not I agree with it, but I wanted a visual way to skip over the 'noise' comments that aren't actually about the article at all.

I tried to build this before but got distracted by more important stuff, so I figured I'd start over with Claude Code.

It took a few tries before it could actually display the badges correctly within HN's (pretty simple) HTML structure, but after a few rounds of 'no try again' or 'add more debugging so I can paste the errors to you', it created almost exactly what I had envisioned.

I was surprised by how good it looked (much better than my normal hacky frontends), and the details it had added unprompted (like the really nice settings page, even with a nod to the HN orange theme).

The actual ranking (which I'm using OpenAI for, not Anthropic) is not that good. It could probably be improved with a better prompt and some more examples of what I think is a '1' comment or a '5' comment, but it works and looks at least directionally accurate so far.
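As a rough idea of what "a better prompt and some more examples" could look like, here is a minimal Python sketch of a few-shot prompt plus a defensive score parser. It is not the plugin's actual code: the example comments, the wording of the instructions, and the names `EXAMPLES`, `build_prompt` and `parse_score` are all made up for illustration, and the call to the model itself is left out.

```python
import re

# Hypothetical few-shot examples: (article title, comment, relevance score 1-5).
EXAMPLES = [
    ("Bitcoin adopts a new protocol", "All crypto projects are scams.", 1),
    ("Bitcoin adopts a new protocol", "How does the new protocol handle fee spikes?", 5),
]


def build_prompt(title: str, comment: str) -> str:
    """Assemble a few-shot prompt that asks for a single 1-5 relevance score."""
    lines = [
        "Rate how closely each comment relates to its article, "
        "from 1 (off-topic) to 5 (directly about it).",
        "Ignore comment quality and whether you agree with it. Reply with one digit.",
        "",
    ]
    for ex_title, ex_comment, score in EXAMPLES:
        lines += [f"Article: {ex_title}", f"Comment: {ex_comment}", f"Score: {score}", ""]
    # The prompt ends on an open "Score:" so the model only has to fill in the digit.
    lines += [f"Article: {title}", f"Comment: {comment}", "Score:"]
    return "\n".join(lines)


def parse_score(reply: str, default: int = 3) -> int:
    """Return the first digit 1-5 found in the reply, or a neutral default."""
    match = re.search(r"[1-5]", reply)
    return int(match.group()) if match else default
```

The string from `build_prompt` would be sent to whichever model does the ranking, and `parse_score` keeps the badge from breaking when the reply contains more than a bare digit.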

Building Poster Maker - A Minimal Canva Replacement

AI is getting good at graphic design, and I knew people who were using it to generate basic posters. They liked that the AI could choose good background images, and generally make things look nice with well-sized fonts etc, but they were frustrated that the AI was still only 80% good at generating images of text, and often had spelling errors or other artifacts.

I was going to tell them to use Canva or Slides.new or another alternative. I tried them out so I could do a quick tutorial on how to use them and realised they were all kinda bad. Either enshittified to death, or lacking the basic AI features, or too complicated for non-technical people to use.

LFG!

This was the project that felt a bit more like engineering and less like vibe coding than the others. I knew what I wanted: a really simple interface to combine images and text and get an A4 PDF out. I'd tried to build something like this before and looked at different PDF creation libraries, HTML→PDF flows, and seen that it's not the easiest problem to solve.
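One small piece of the problem that is easy to pin down is the page size itself, since a browser preview and a PDF can measure the same page in different units. The sketch below only does that arithmetic; the function name `a4_size` is made up, and it says nothing about which PDF library or export flow the app ended up using.

```python
# A4 is 210 mm x 297 mm (ISO 216).
A4_MM = (210.0, 297.0)
MM_PER_INCH = 25.4


def a4_size(units_per_inch: float) -> tuple[int, int]:
    """A4 width and height, rounded, at a given resolution in units per inch."""
    width_mm, height_mm = A4_MM
    return (
        round(width_mm / MM_PER_INCH * units_per_inch),
        round(height_mm / MM_PER_INCH * units_per_inch),
    )


print(a4_size(72))   # PDF points: (595, 842)
print(a4_size(96))   # CSS pixels: (794, 1123)
print(a4_size(300))  # pixels at 300 DPI: (2480, 3508)
```

If the preview is laid out in CSS pixels and the export works in points or print pixels, every position and font size has to be scaled by the same ratio to keep the two looking alike.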

Last time I solved a similar problem (in 2018) I ended up hacking in Google Docs to create A4 PDFs, but that was more of a templating problem and Google Docs isn't great for layout stuff.

So I built posters.dwyer.co.za - it lets you generate the background image with AI (I used Claude Code to build everything, but I told it to use GPT for image generation as that's what I'd used before and I think it's better? I don't even know if Anthropic has image generation APIs to be honest and it seemed easier to just use what I knew).

This project took a few hours of back and forth. I was really impressed with some of Claude's UI knowledge (it one-shotted the font selection when I told it what I wanted) and also saw the limitations in other aspects (it kept overlaying elements in a very user-unfriendly way, the sidebar would hide and show and move everything around, it clearly has no idea what it's like to be a human and use something like this).

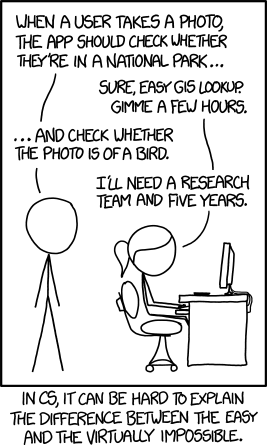

But after telling it exactly where to put elements and what they should do, I got more or less exactly what I had envisioned. I was surprised at how well the PDF export worked after the 6th or 7th attempt, once it got past producing blank or cut-off files. Now it seems really great at giving me a PDF that looks exactly like the preview version. To anyone not in tech that seems like a really basic piece of functionality, but anyone who has actually tried to build it before knows it's like the XKCD bird problem.

Doing admin with Claude Code

This isn't really a project I built, but I'm using Claude Code more and more to do non-coding related tasks. I needed to upload bank statements for my accountant, but my (shitty) South African banks don't name the files well. I can download each month from the web app, but it calls them all "Unknown (5)" or whatever with no extension so it's a pain to go and name them correctly.

I asked Claude to rename all the files and I could go do something else while it churned away, reading the files and figuring out the correct names.
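I don't know exactly what steps Claude took, but the extension half of the job can be sketched in a few lines of Python by looking at each file's first bytes. This is an illustration only: `SIGNATURES`, `guess_extension` and `rename_statements` are made-up names, treating every ZIP file as an XLSX is an assumption, and working out the right month for each name would still mean reading the statement contents.

```python
from pathlib import Path

# Leading bytes ("magic numbers") for a couple of common statement formats.
# XLSX files are ZIP archives, so they start with the ZIP signature.
SIGNATURES = {
    b"%PDF": ".pdf",
    b"PK\x03\x04": ".xlsx",  # assumption: any ZIP in this folder is a spreadsheet
}


def guess_extension(path: Path) -> str:
    """Return an extension based on the file's first bytes, or '' if unknown."""
    with path.open("rb") as f:
        head = f.read(8)
    for magic, ext in SIGNATURES.items():
        if head.startswith(magic):
            return ext
    return ""


def rename_statements(folder: Path, dry_run: bool = True) -> list[tuple[str, str]]:
    """Plan (and optionally apply) renames for extensionless files in a folder."""
    plan = []
    for path in sorted(folder.iterdir()):
        # Skip directories and anything that already has an extension.
        if not path.is_file() or path.suffix:
            continue
        ext = guess_extension(path)
        if not ext:
            continue
        target = path.with_name(path.name + ext)
        plan.append((path.name, target.name))
        # Never overwrite an existing file.
        if not dry_run and not target.exists():
            path.rename(target)
    return plan
```

Running it with `dry_run=True` first returns the planned renames without touching anything, which is the same "show me before you do it" step I'd want from Claude.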

I then took it a step further and told it to merge them all into a single CSV file (which also involved stripping random header tabs off the badly formatted XLSX files that my bank provides) and to classify all expenses into broad and specific categories. I told it a few things like the roles of specific people in the team and I think it one-shotted that too. I'm not going to fire my bookkeepers yet, but if I were a bookkeeper I'd definitely make sure to be upskilling with AI tooling right now.
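For a sense of what the merge and classify step involves, here is a minimal Python sketch. It assumes the sheets have already been exported to CSV text, that each one has some junk rows before a header row starting with `Date`, and that the first three columns are date, description and amount. The keyword rules, the column layout and the function names are all hypothetical, and Claude was presumably classifying with far more context than a keyword lookup.

```python
import csv
import io

# Hypothetical keyword -> (broad, specific) category rules.
RULES = {
    "aws": ("Infrastructure", "Cloud hosting"),
    "uber": ("Travel", "Taxi"),
}


def classify(description: str) -> tuple[str, str]:
    """Return (broad, specific) categories for the first matching keyword."""
    text = description.lower()
    for keyword, categories in RULES.items():
        if keyword in text:
            return categories
    return ("Uncategorised", "Uncategorised")


def merge_statements(sources: list[str], header_marker: str = "Date") -> str:
    """Merge several CSV texts into one, skipping junk rows before each header."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["Date", "Description", "Amount", "Broad", "Specific"])
    for text in sources:
        seen_header = False
        for row in csv.reader(io.StringIO(text)):
            if not seen_header:
                # Everything up to and including the header row is dropped.
                seen_header = bool(row) and row[0] == header_marker
                continue
            if len(row) < 3:
                continue  # blank or malformed line
            date, description, amount = row[:3]
            writer.writerow([date, description, amount, *classify(description)])
    return out.getvalue()
```

Anything that lands in `Uncategorised` is the part worth handing to a model or a human.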

Using Claude Code as my Text Editor

I'm a die-hard vanilla vim user for all writing, coding, configuration and anything else that fits. I've tried nearly every IDE and text editor out there, and I was certainly happy to have a real IDE when I was pushing production Java for AWS, but vim is what I've always come back to.

Switching to Claude Code has opened up a lot of new design possibilities. Before (did I mention I suck at front-end coding?), I was restricted to whatever output was produced by static site generators or pandoc templates. Now I can just tell Claude to write an article (like the one you're currently reading) and give it some pointers regarding how I want it to look, and it can generate any custom HTML and CSS and JavaScript I want on the fly.

I wrote this entire article in the Claude Code interactive window. The TUI flash (which I've read is a problem with the underlying library that's hard to fix) is really annoying, but it's a really nice writing flow to type stream of consciousness stuff into an editor, mixing text I want in the article, and instructions to Claude, and having it fix up the typos, do the formatting, and build the UX on the fly.

Nearly every word, choice of phrase, and the overall structure is still manually written by me, a human. I'm still on the fence about whether I'm just stuck in the old way by preferring to hand-craft my words, or if models are generally not good at writing.

When I read answers to questions I've asked LLMs, or the long research-style reports they create, the writing style is pretty good and I've probably read more LLM-generated words than human-generated words in the last few months.

But whenever I try to get them to produce the output I want to produce, they fail hard unless I spend as much effort on the prompt as I would have on writing the output myself.

Simon Willison calls them 'word calculators' and this is still mainly how I think of them. Great at moving content around (if you want a summary of this now very long article, an LLM will probably do a great job) but pretty useless at generating new stuff.

Maybe us writers will be around for a while still - let's see, and lfg.